ONTAP Discussions

- Home

- :

- ONTAP, AFF, and FAS

- :

- ONTAP Discussions

- :

- High latency from statistics system show

ONTAP Discussions

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi all,

We just set up a four-node MCC, when we use the command:

I19NF8060::> statistics system show -interval 5 -iterations 25 -max 25

We get following results:

I19NF8060 : 12/3/2015 15:57:52

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

cluster 2 11 20316 340000

I19NF8060 : 12/3/2015 15:57:56

cluster 2 0 8192 500000

I19NF8060 : 12/3/2015 15:58:00

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

cluster 2 1 11392 600000

I19NF8060 : 12/3/2015 15:58:05

cluster 2 9 16252 404000

I19NF8060 : 12/3/2015 15:58:09

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

cluster 2 0 8192 0

I19NF8060 : 12/3/2015 15:58:13

cluster 2 11 20316 445000

The latency is too high for a new and even empty system (just have a SVM for some testing).

Do you have any ideas of how to find the reason about such high latency?

Best Regards,

Jeff Cai

Solved! See The Solution

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Jeff,

what is it exactly that you are trying to show? My first guess is you are looking at the wrong output or using the wrong command.

If I take the demo MCC in Munich, which has just 5m distance between the two "sites", I see very similar numbers with that command:

optimus::> statistics system show -interval 5 -iterations 25 -max 25

optimus : 12/4/2015 11:08:01

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 5 3 7017 250000

optimus : 12/4/2015 11:08:05

cluster 7 3 2664 142000

optimus : 12/4/2015 11:08:09

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 5 2 3945 272000

optimus : 12/4/2015 11:08:13

cluster 2 1 959 400000

optimus : 12/4/2015 11:08:17

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 4 3 1127 538000

optimus::>

Even if I put load on the system (VM running in NFS datastore) the values basically don't look any better:

optimus::> statistics system show -interval 5 -iterations 25 -max 25

optimus : 12/4/2015 11:10:42

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 6 1737 9089108 5020000

optimus : 12/4/2015 11:10:46

cluster 4 1743 8754472 5008000

optimus : 12/4/2015 11:10:50

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 6 1736 8726654 5028000

optimus : 12/4/2015 11:10:54

cluster 5 1746 9228116 4998000

optimus : 12/4/2015 11:10:58

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 5 1746 9296850 5010000

optimus : 12/4/2015 11:11:02

cluster 7 1681 7337343 5173000

optimus::>

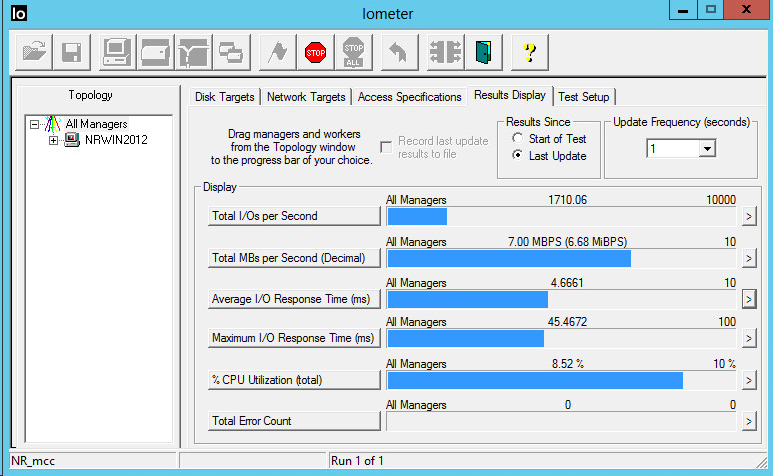

But the reality is, that the VM I'm testing with is doing IO on a mixed 100% random 50% read/write workload at 4.5ms latency and 100% write at 0.5ms latency.

This matches the IO_meter output as well as the ONTAP volume statistics:

optimus::> statistics volume show -interval 5 -iterations 10 -max 25

optimus : 12/4/2015 11:13:06

*Total Read Write Other Read Write Latency

Volume Vserver Ops Ops Ops Ops (Bps) (Bps) (us)

----------------------- -------------------- ------ ---- ----- ----- ------- ------- -------

svm_optimus_esx_demo_ds svm_optimus_esx_demo 1744 869 874 0 3561728 3570216 4361

[...truncated...]

So I assume the command you are using is not showing what you are looking for.

Kind regards, Niels

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

You can check the ASUP logs or run the perfstat tool for additional information. Also refer the following kb "https://kb.netapp.com/support/index?page=content&id=2023701&actp=LIST_RECENT&viewlocale=en_US&searchid=1449202331051"

Hope this helps.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Jeff,

what is it exactly that you are trying to show? My first guess is you are looking at the wrong output or using the wrong command.

If I take the demo MCC in Munich, which has just 5m distance between the two "sites", I see very similar numbers with that command:

optimus::> statistics system show -interval 5 -iterations 25 -max 25

optimus : 12/4/2015 11:08:01

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 5 3 7017 250000

optimus : 12/4/2015 11:08:05

cluster 7 3 2664 142000

optimus : 12/4/2015 11:08:09

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 5 2 3945 272000

optimus : 12/4/2015 11:08:13

cluster 2 1 959 400000

optimus : 12/4/2015 11:08:17

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ----- -------

cluster 4 3 1127 538000

optimus::>

Even if I put load on the system (VM running in NFS datastore) the values basically don't look any better:

optimus::> statistics system show -interval 5 -iterations 25 -max 25

optimus : 12/4/2015 11:10:42

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 6 1737 9089108 5020000

optimus : 12/4/2015 11:10:46

cluster 4 1743 8754472 5008000

optimus : 12/4/2015 11:10:50

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 6 1736 8726654 5028000

optimus : 12/4/2015 11:10:54

cluster 5 1746 9228116 4998000

optimus : 12/4/2015 11:10:58

CPU *Total Total Latency

System (%) Ops (Bps) (ms)

------- --- ------ ------- -------

cluster 5 1746 9296850 5010000

optimus : 12/4/2015 11:11:02

cluster 7 1681 7337343 5173000

optimus::>

But the reality is, that the VM I'm testing with is doing IO on a mixed 100% random 50% read/write workload at 4.5ms latency and 100% write at 0.5ms latency.

This matches the IO_meter output as well as the ONTAP volume statistics:

optimus::> statistics volume show -interval 5 -iterations 10 -max 25

optimus : 12/4/2015 11:13:06

*Total Read Write Other Read Write Latency

Volume Vserver Ops Ops Ops Ops (Bps) (Bps) (us)

----------------------- -------------------- ------ ---- ----- ----- ------- ------- -------

svm_optimus_esx_demo_ds svm_optimus_esx_demo 1744 869 874 0 3561728 3570216 4361

[...truncated...]

So I assume the command you are using is not showing what you are looking for.

Kind regards, Niels

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Niels,

Much appreciate your help.

Maybe it's a bug for the result?

Thanks,

Jeff