ONTAP Discussions

- Home

- :

- ONTAP, AFF, and FAS

- :

- ONTAP Discussions

- :

- MetroCluster & NVRAM Interconnnect

ONTAP Discussions

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dear Community Members,

Firstly thank you for viewing my post.

This is a question regarding MetroCluster behaviour.

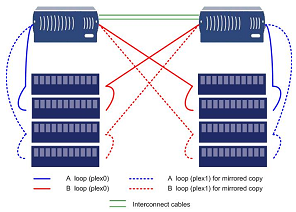

We are in the process of acquiring a set of 3160 in MetroCluster/Syncmirror, with 2 Filers (3160a, 3160b) and 4 shelves (a-shelf0-pri, a-shelf0-sec, b-shelf0-pri, b-shelf0-sec).

This configuration has Shelf redundancy as well as Filer redundancy.

I understand that in the event a full site failure has occured, for example, node A (Left) manual intervention is required to force takeover on B. This is when all communication from B has failed with A, including loss of B to b-shelf0-sec and a-shelf0-pri.

Automated failover happens from A to B when HW failure occurs, i.e. NVRAM Card failure.

But what will happen when I cut or disconnect the interconnect cables only? How does A & B detect that takeover is not required and the loss in connection is not due to NVRAM Card fault but instead a connectivity fault?

Also, how does in example if A NVRAM card fails, how does B know that he should take over and loss of connection is not due to connectivity fault but instead a "real" HW fault?

I tried sourcing for information in regards to this and found the below:

Message was edited by: shawn.lua.cw for readability.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Shawn,,

I hope that the details below will help you understand what hapens in certain failures.

Also if both heads leave the cluster for what ever reason, when they rejoin they will sync without data loss. This would be typically what you would call "Cluster No Brain". Neither head knows what is running. But all the data will be synced as you have the disk paths available and data is synced down this path.

We have 6 metro clusters and in all of our testing we have only had to do a hard take over where VMware was invloved.

regards

ArthursC

please see the table below taken from NetApp

| Event | Does this trigger a failover? | Does the event prevent a future failover occurring or a failover occurring successfully? | Is data still available on the affected volume after the event? |

|---|---|---|---|

| Single disk failure | No | No | Yes |

| Double disk failure (2 disk fail in the same RAID group) | No | No | Yes, with no failover necessary |

| Triple disk failure (3 disk fail in the same RAID group) | No | No | Yes, with no failover necessary |

| Single HBA (initiator) failure, Loop A | No | No | Yes, with no failover necessary |

| Single HBA (initiator) failure, Loop B | No | No | Yes, with no failover necessary |

| Single HBA (initiator) failure, Both loops at sametime | No | No | Yes, with no failover necessary |

| ESH 4 or AT-FCX Module failure on Loop A | No | No | Yes, with no failover necessary |

| ESH 4 or AT-FCX Module failure on Loop B | No | No | Yes, with no failover necessary |

| Shelf (Backplain) failure | No | No | Yes, with no failover necessary |

| Shelf, single power failure | No | No | Yes, with no failover necessary |

| Shelf, Dual power failure | No | No | Yes, with no failover necessary |

| Contoller (Head/Toaster), Single power failure | No | No | Yes, with no failover necessary |

| Contoller (Head/Toaster), Dual power failure | Yes | Yes, until power is restored | Yes, if failover succeeds CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Total loss of power to IT HALL 1. IT HALL 2 not affected (This simulates a Contoller (Head/Toaster), Dual power failure) | Yes | Yes, until power is restored | Yes, if failover succeeds CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Total loss of power to IT HALL 2. IT HALL 1 not affected (This simulates a Contoller (Head/Toaster), Dual power failure) | Yes | Yes, until power is restored | Yes, if failover succeeds CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Total loss of power to BOTH IT HALL 1 & IT HALL 2 (NO OPERATIONAL SERVICE AVAILABLE UNTIL POWER RESTORED) | Yes | Yes, until power is restored | Yes, if failover succeeds DISASTER RECOVERY - CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Cluster interconnect (Fibre connecting Head/Toaster from primary to partner)failure (port 1) | No | No | Yes |

| Cluster interconnect (Fibre connecting Head/Toaster from primary to partner)failure (both ports) | No | No | Yes |

| Ethernet interface failure (primary, no VIF) | Yes, if setup to do so | No | Yes |

| Ethernet interface failure (primary, VIF) | Yes, if setup to do so | No | Yes |

| Ethernet interface failure (secondary, VIF) | Yes, if setup to do so | No | Yes |

| Ethernet interface failure (VIF, all ports) | Yes, if setup to do so | No | Yes |

| Head exceeds permissable amount | Yes | No | No DISASTER RECOVERY - CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Fan failures (disk shelves or controller) | No | No | Yes |

| Head reboot | Yes | No | Maybe. depends on root cause of reboot CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

| Head Panic | Yes | No | Maybe. depends on root cause of reboot CLUSTER FAILOVER PROCEDURES STEPS TO BE FOLLOWED IN THIS EVENT |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dear all,

Thank you for your replies, they have been most helpful.

In regards to the mailbox disks, I have also spoken to a few NetApp SEs regarding this question and they have mostly given different replies.

Summary of what I was able to gather:

Mailbox disks:

These disks reside on both the filers when they are clustered. The interconnect will transmit data (etc etc, time stamp and such) to these mailbox disks, each filer's mailbox disk will contain the timestamp of his own as well as his partner's timestamp, when the interconnect is cut, the 2 filers will still be able to access to the mailbox disks owned by themselves as well as its partner's but unable to stamp new information to its partner's mailbox.

This way, they will both be able to read each other's mailbox disks and know that the partner is still alive due to the partner still being able to stamp info on its own mailbox disk.

When the controller has a failure (one of those events triggered in the table) they will stop stamping on its own mailbox therefore when its partner reads its mailbox disk, the partner takes over.

The above is version (A) after from SE A.

For version B, they tell me that when a hw failure happens on a controller, it will relinquish control over his own quorum of disks, therefore allowing the other node to take over.

Again thank you all for your kind replies.

Best Regards,

Shawn Lua.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Shawn. I have the same question.

The arthursc0's table helps a bit but it is not the answer.

Did you get the answer to your question?

I find out similar table "Failover event cause-and-effect table" for "Data ONTAP 7.3 Active/Active Configuration Guide" and more resent "Data ONTAP® 8.0 7-Mode High-Availability Configuration Guide" as arthursc0 shows but more detailed.

The problem is: the tables not explains "how it works".

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It would be helpful if you ask your question explicitly or at least explain what you think is missing in answers in this thread. As far as I can tell, answers in this thread are as complete as you can get without reading source code.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The arthursc0's table not explains "how it works" it says "what will happen".

Shawn.lua.cw in his last post Jun 25, 2010 12:17 AM has two versions how NetApp FAS/V handle interconnect failure. They are still versions and not approved facts.

I want to know as Shawn - the NetApp FAS/V behavior in situation when interconnect falls and why it behaves so. It is important to know the concept "how it works", the logic of it's behavior not just "what will happen" in some situations.

And second important thing is: to receive confirmation of one of the versions.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Both “versions” say exactly the same - when controller fails it cannot compete for mailbox access which is indication for partner to initiate takeover. Whether one individual calls it “stop writing” and another “relinquishes access” is matter of “language skills” ☺

When interconnect fails NetApp is using mailbox disks to verify that partner is still alive. If it is - nothing happens and both partners continue to run as before. If mailbox indicates that partner is not available - takeover is initiated. If this does not qualify as “NetApp behavior” - please explain what you want to know.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Where can I reed more about "mailbox disks"?

Thanks for the help.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am not sure, really … does search on support site show anything?

In normal case there is nothing user needs to configure or adjust. NetApp handles setup of those disks (including cases when mailbox disk becomes unavailable) fully automatically. In those rare cases when user needs to be aware (like head swap) necessary steps are documented.

Troubleshooting training may cover them in more details, but I have not attended it myself.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I find out a bit about mailbox disks here. And in Russian at blog.aboutnetapp.ru.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have questions about NetApp with broken interconnect:

What will happen (interconnect is broken) when one of controller will die?

Are controllers checking periodically (or only once) for partner's disks availability?

If yes, then it will take over died partner's disks, but what will happen with data in NVRAM?

How dangerous such a situation is for data consistency at died partner's disks?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

If interconnect is broken because of controller failure, partner will take over.

If interconnect fails first and controller fails later, takeover does not happen, because NVRAM cannot be synchronized so there is no way for a partner to replay it.

As soon as interconnect fails you will see message “takeover disabled”.