November 2014

As the NetApp® clustered Data ONTAP® storage operating system continues to gain momentum—with over 1.9 exabytes of storage and almost 24,000 controllers in production—it’s proving to be extremely effective for both enterprise IT and the cloud. Storage admins really appreciate the ability to nondisruptively move workloads as needed—including between all-flash nodes and hybrid storage. NetApp continues to build out new features to enhance overall performance, extend nondisruptive operations capabilities, and improve efficiency and manageability.

This article explores the new features of clustered Data ONTAP 8.3, which was launched at NetApp Insight on October 28, 2014. Its feature set is broadly applicable to enterprise, private cloud, and cloud service provider deployments. A separate article in this issue of Tech OnTap describes the capabilities of Cloud ONTAP, which brings the enterprise capabilities of clustered Data ONTAP to the public cloud. Clustered Data ONTAP 8.3 and Cloud ONTAP are two key elements in NetApp’s vision of a NetApp Data Fabric that simplifies data management and data mobility across clouds of all types.

Clustered Data ONTAP 8.3 is the first Data ONTAP release to support clustered operation only. (For 7-Mode customers, NetApp is committed to continuing 7-Mode support on 8.2.x.) Clustered Data ONTAP 8.3 includes a large number of enhancements and features that further improve your ability to store, serve, and manage data.

In particular, this article will look at the following new capabilities:

- Performance

  - Read-path optimizations that dramatically increase all-flash FAS read performance for systems under load

  - Cache size increases up to 4X for hybrid storage configurations using Flash Pool™ intelligent caching

- Efficiency and Management

  - Advanced drive partitioning to increase usable capacity for entry systems, all-flash FAS, and Flash Pool

  - IPspaces, to allow separate storage virtual machines (SVMs) in the same cluster to have overlapping subnets and IP addresses

- NDO and Availability

  - MetroCluster™ software for clustered Data ONTAP

  - Enhancements to SnapMirror® and SnapVault® software

  - Automated, nondisruptive upgrades (NDU)

  - DataMotion™ for LUNs software

- Transition from 7-Mode

  - 7-Mode Transition Tool (7MTT) with SAN migration and MetroCluster support

A more complete (but still not exhaustive) list of 8.3 features is included in Table 1.

New Features in 8.3


Table 1) New features of clustered Data ONTAP 8.3 (Features in bold are discussed in later sections.)

| Feature | Advantage |
| --- | --- |
| Performance and Scalability | |
| **Read-path optimization** | |
| **Increased cache size** | |
| Inline zero write detection | |
| SMB/CIFS improvements | |
| Replication performance | |
| Expanded SAN limits | |
| Efficiency and Management | |
| **Advanced drive partitioning** | Delivers more usable capacity and enhances Flash Pool flexibility. Three use cases: root-data SSD partitioning for all-flash FAS, root-data HDD partitioning for entry FAS systems, and SSD partitioning for Flash Pool. |
| **IPspaces** | |
| System Setup 3.0 | |
| System Manager 8.3 | |
| VVOL support | |
| FlexClone® for SVI | |
| Networking enhancements | |
| NDO and Availability | |
| **MetroCluster** | |
| **SnapMirror and SnapVault Enhancements** | |
| **Automated NDU** | |
| **DataMotion for LUNs** | |
| SMTape | |
| Transition Tools | |
| **7-Mode Transition Tool 2.0** | |
| Foreign LUN import | |
| Rapid Data Migration Tool | |
| Other | |
| NFS enhancements | |
| Selective LUN mapping | |
| IPv6 enhancements | |

Performance and Scalability


Although Data ONTAP has been around for more than 20 years, NetApp engineers continue to find ways to increase performance and scalability—delivering more value from your existing hardware.

All-Flash FAS Performance

In the recent Tech OnTap article, All-Flash FAS: A Deep Dive, the authors hinted that “upcoming enhancements” would significantly boost random, small-block read performance—which is a good proxy for OLTP performance. With 8.3, those enhancements have come to pass.

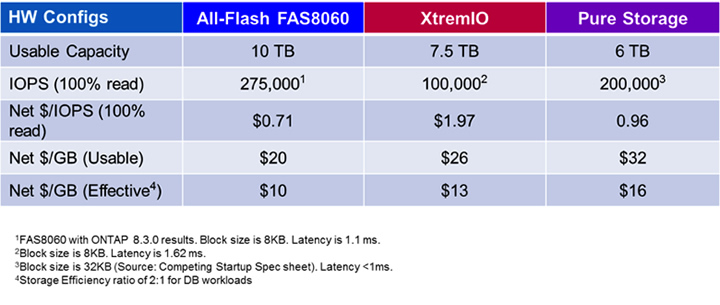

NetApp engineers examined the read path from end to end to identify and eliminate overhead; the result is a massive increase in read performance versus Data ONTAP 8.2. If you compare the numbers in Figure 1 to those in the earlier article mentioned above, maximum FAS8060 performance has improved by 35% and maximum FAS8080 EX performance has improved by a whopping 64% for random read operations.

Think for a moment about what this means. Upgrading an all-flash FAS system from 8.2.x to 8.3 can deliver a random-read performance improvement of up to 64% with no hardware changes, and all-flash FAS performance was already highly competitive before 8.3.

Figure 1) All-flash FAS performance with Data ONTAP 8.3 relative to public numbers from several competitors.

This increase in performance, combined with an increase in usable capacity (more on that in the Advanced Drive Partitioning section), translates to improvements in both $/IOPS and $/GB, as shown in Table 2.

Table 2) Comparison of all-flash FAS8060 with competitors for database workloads.

While these read-path optimizations have the most dramatic effect for all-flash configurations, hybrid and HDD-only systems will also benefit.

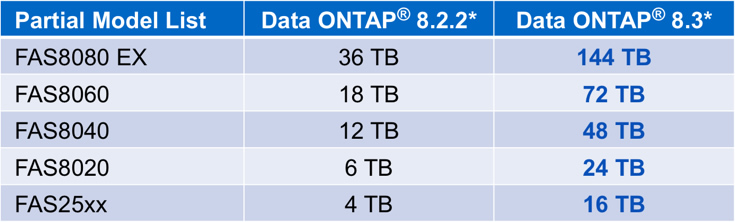

Cache Size Increases by Up To 4X

You may have noticed that in recent releases NetApp has been steadily increasing the total amount of cache supported for hybrid storage configurations that use NetApp Flash Cache™ and/or Flash Pool software. That trend continues in 8.3 with a 4X increase for most platforms, as shown in Table 3. Our goal is to make sure you never have to worry about hitting a cache “ceiling” that would limit your ability to scale a FAS system or cluster.

Table 3) Supported maximum hybrid flash per HA pair in 8.3 versus 8.2.2. (FAS2200, FAS3200, and FAS6200 also see an increase.)

In all FAS models, the maximum amount of Flash Cache supported is limited by the number of available PCIe slots. The Flash Cache limit for the FAS8080 EX has been increased to 24TB per HA pair (from 16TB); Flash Cache limits for other FAS models remain the same.

Additional Flash Pool enhancements include:

- Overwrites of all sizes are now cacheable when Flash Pool is configured to receive overwrite operations. (The 16KB cutoff has been removed.)

- When Flash Pool is configured to receive overwrites, the cache capacity reserve has been reduced, providing a 13% increase in available cache capacity.

Efficiency and Management


A number of new features contribute to the overall efficiency and manageability of clustered Data ONTAP.

Advanced Drive Partitioning

As the name implies, Advanced Drive Partitioning segments physical drives into multiple partitions. This technology is advanced in the sense that a single physical drive is shared by multiple aggregates—and can be accessed by two different controllers at the same time.

There are three use cases that are supported with advanced drive partitioning:

- Root-data SSD partitioning for all-flash FAS

- Root-data HDD partitioning for entry FAS systems

- SSD partitioning for Flash Pool

All three use cases have a few things in common that you should keep in mind:

- Partition sizes are defined by the system and are not user configurable.

- You cannot convert from an unpartitioned configuration to a partitioned configuration with data in place. (In cluster configurations with four or more nodes, you can nondisruptively evacuate a storage system using vol move and implement partitioning without taking data offline.) Note that storage systems running 8.3 don’t have to be partitioned. You can do an in-place upgrade of existing systems and get all the other benefits of 8.3.

Root-data partitioning use cases. The first two use cases are quite similar. With clustered Data ONTAP, data aggregates are taken over and given back serially. As a result, aggregates that contain root volumes are separate from aggregates containing user data. The goal with root-data partitioning is to free the size of the root volume from the constraints imposed by physical media to increase usable capacity.

This is achieved by logically dividing each drive into two partitions to form separate root and data partitions. Initiators on both storage controllers can access the same drives concurrently. Each storage controller knows the block ranges on each physical drive it is allowed to access, so data integrity is maintained.

Advanced drive partitioning improves usable capacity compared to configurations with dedicated root aggregates. For a 24-drive configuration, usable capacity increases by 20% or more depending on the size of the drives. This also decreases the storage overhead and cost associated with using RAID-DP® for the root aggregate.

Figure 2) Example of root-data partitioning for a 24-drive active/active all-flash FAS or entry FAS system. Partition size and layout are determined by the system and are not user configurable.

Here are a few important items to take note of:

- All new entry systems (FAS2200 and FAS2500) and all new all-flash FAS systems shipping with Data ONTAP 8.3 will be partitioned by default when they ship from the factory.

- Root-data partitioning is not supported on FAS8000 systems with HDD root aggregates in Data ONTAP 8.3.

Flash Pool partitioning. Advanced drive partitioning for Flash Pool is a little different from root-data partitioning. The goal for this use case is to share a set of SSDs across multiple Flash Pool aggregates to reduce the overhead due to parity and spares, and to increase flexibility.

Advanced drive partitioning for Flash Pool segments each drive into four pieces rather than two. The left side of the illustration in Figure 3 shows a Flash Pool configuration without partitioning. The two SSD storage pools shown each utilize a single RAID-4 RAID group with two data drives and one parity drive, so two of the six drives are consumed for parity. The right side of the diagram shows the configuration with advanced drive partitioning. The same six drives are used to create four RAID-4 RAID groups, each of which spans all six drives, so the equivalent of a single drive is consumed for parity. In this example, the overhead due to parity is reduced from about 33% (two drives in six) to about 16.7% (one drive in six). (Naturally, the bigger the RAID group, the lower the overhead.)

Figure 3) When used for Flash Pool, advanced drive partitioning reduces the overhead associated with parity and spares, thereby providing more usable capacity.
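The parity arithmetic in the six-drive example can be checked with a short calculation. This is only an illustrative sketch: the function name `parity_overhead` is ours, and it assumes RAID-4 with exactly one parity drive (or one parity partition) per RAID group, as in the example.

```python
from fractions import Fraction


def parity_overhead(total_units: int, parity_units: int) -> Fraction:
    """Return the fraction of raw capacity consumed by parity.

    Units can be whole drives or equal-sized partitions, as long as both
    arguments use the same unit.
    """
    if total_units <= 0 or not 0 <= parity_units <= total_units:
        raise ValueError("parity_units must be between 0 and total_units")
    return Fraction(parity_units, total_units)


# Without partitioning: two RAID-4 groups of three SSDs, one parity drive each.
unpartitioned = parity_overhead(total_units=6, parity_units=2)

# With partitioning: six SSDs split into four partitions each (24 partitions),
# arranged as four RAID-4 groups with one parity partition per group.
partitioned = parity_overhead(total_units=6 * 4, parity_units=4)

print(f"unpartitioned: {float(unpartitioned):.1%}")
print(f"partitioned:   {float(partitioned):.1%}")
```

The same function shows why larger RAID groups help: the parity count stays fixed per group while the total number of units grows.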

Here are a few important points to note:

- A single partitioned SSD storage pool can be shared by up to four Flash Pool aggregates.

- Only the SSDs in the Flash Pool aggregate are partitioned, not the HDDs.

- A data aggregate consisting entirely of SSDs cannot be partitioned.

IPspaces

IPspaces reduce the complexity of tenant administration. The network multi-tenancy provided by IPspaces is equivalent to that provided by 7-Mode systems running NetApp MultiStore® software with vFiler® units. If you’re currently running MultiStore, Data ONTAP 8.3 with IPspaces is transition ready.

IPspaces allow storage virtual machines (SVMs) in the same cluster to have overlapping subnets and IP addresses. A single IPspace can contain one or multiple SVMs according to your needs. A cluster can have a separate IPspace per SVM if needed, or all the SVMs in a cluster can exist in the “default” IPspace if you don’t need to accommodate overlapping address spaces.

Figure 4) IPspaces allow different SVMs to utilize overlapping address spaces. Every SVM can have its own IPspace or multiple SVMs can share an IPspace. In this example the IP addresses 10.98.7.1 and 10.98.7.2 are used in both IPspaces.
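One way to think about IPspaces is as a separate address table per IPspace. The sketch below is a conceptual model only, not Data ONTAP code or its API: the class `IPspaceRegistry`, its methods, and the IPspace and SVM names are hypothetical, and it assumes that an address must still be unique within a single IPspace.

```python
import ipaddress
from typing import Dict, Optional, Union

IPAddress = Union[ipaddress.IPv4Address, ipaddress.IPv6Address]


class IPspaceRegistry:
    """Toy model: each IPspace keeps its own address-to-SVM table."""

    def __init__(self) -> None:
        self._spaces: Dict[str, Dict[IPAddress, str]] = {}

    def assign(self, ipspace: str, svm: str, address: str) -> None:
        """Assign an address to an SVM; uniqueness is checked per IPspace only."""
        ip = ipaddress.ip_address(address)
        owners = self._spaces.setdefault(ipspace, {})
        current = owners.get(ip)
        if current is not None and current != svm:
            raise ValueError(f"{ip} is already used by {current} in IPspace {ipspace}")
        owners[ip] = svm

    def owner(self, ipspace: str, address: str) -> Optional[str]:
        """Return the SVM that owns the address in the given IPspace, if any."""
        return self._spaces.get(ipspace, {}).get(ipaddress.ip_address(address))


registry = IPspaceRegistry()
registry.assign("ipspace_a", "svm_a", "10.98.7.1")
registry.assign("ipspace_b", "svm_b", "10.98.7.1")  # same address, different IPspace

try:
    registry.assign("ipspace_a", "svm_c", "10.98.7.1")  # clash inside one IPspace
    conflict_detected = False
except ValueError:
    conflict_detected = True
```

Keying the lookup on the pair (IPspace, address) rather than on the address alone is what lets two tenants bring the same subnet into one cluster.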

Common use cases include:

- Service provider environments where you can’t control customer subnet assignments

- Mergers and acquisitions where pre-existing subnet assignments overlap

- Migration from 7-Mode MultiStore environments

NDO and Availability


With clustered Data ONTAP 8.3, NetApp continues to set the standard for nondisruptive operations and availability features.

MetroCluster

Without question, MetroCluster is one of the biggest payload items for 8.3. MetroCluster is the NetApp solution for continuous data availability. It provides synchronous mirroring between sites up to 200 km apart. If you’re not familiar with MetroCluster, its key attributes include:

- Architected for zero data loss – Never lose a transaction, because writes are immediately committed to both sites.

- Simplicity – No external devices or host-based configuration.

- Zero change management – Once it’s set up, configuration changes made on one side are automatically replicated to the other side.

- 50% lower cost and complexity versus other solutions – This includes lower software acquisition cost and cost of ownership of the solution due to its easy-to-manage architecture. There are no external devices, capacity-based licenses, or ongoing configuration management.

- Seamless integration with storage efficiency, backup, DR, NDO, FlexArray – All are built into Data ONTAP.

- Support for both SAN and NAS – simultaneously. Most competitive solutions support only SAN.

- Free MetroCluster software – It’s part of Data ONTAP, with no separate licenses required.

In clustered Data ONTAP 8.3, MetroCluster utilizes two separate ONTAP clusters with a two-node cluster in each location. Clients are served from all four nodes during normal operation. Local HA is used for all local failures. This is a significant difference from 7-Mode MetroCluster, in which a local failure could trigger failover to the alternate site, which is not always desirable.

Figure 5) Clustered Data ONTAP 8.3 adds support for MetroCluster. Two separate two-node clusters provide both local and remote failover depending on the type of outage.

SnapMirror and SnapVault Enhancements

SnapMirror and SnapVault have been the workhorses of the NetApp integrated data protection portfolio for years. SnapMirror is consistently ranked as the #1 or #2 replication solution, and NetApp invests significant time and effort to make sure both tools continue to lead the market. Intelligent, efficient, and easy-to-use replication is a key enabling technology for hybrid cloud, and several of the latest enhancements were designed with this in mind.

Single replication stream for SnapMirror and SnapVault. This enhancement lets you satisfy both your disaster recovery and backup requirements while:

- Reducing network traffic by 50%

- Reducing secondary storage requirements by 40%

Native network compression. This enhancement further reduces bandwidth requirements by up to 70%, and can eliminate the need for separate WAN optimization hardware. (Make sure you have available CPU headroom before enabling this feature.)

Failover to prior point-in-time Snapshot copy. SnapMirror on clustered Data ONTAP 8.3 can help you recover quickly to a previous point in time by leveraging the vaulted Snapshot copies in the unified mirror/vault repository. Because the SnapMirror destination can retain additional Snapshot copies, you no longer need to maintain separate Snapshot copies for disaster recovery and backup at the secondary site.

Version-flexible replication. This new feature enhances nondisruptive operations and simplifies upgrades. In the past you needed to have the same version of clustered Data ONTAP at both the primary and secondary sites, but some of you have hundreds of SnapMirror relationships around the world and needed a way to upgrade SnapMirror at your own pace.

Starting with clustered Data ONTAP 8.3 (this will not work with a source or target running clustered Data ONTAP 8.2.x), you can have different versions of SnapMirror at the primary and secondary locations and upgrade them nondisruptively. We call this new capability SnapMirror version-flexible replication.

This not only simplifies the upgrade process, it supports bidirectional replication. In the past, bidirectional systems each had to be running on identical software revision levels. This typically required a short period of downtime to upgrade the software on both sides of a bidirectional SnapMirror to the same level. Starting with Data ONTAP 8.3, identical versions no longer need to be in place for replication, enabling nondisruptive upgrades not only for bidirectional SnapMirror but also for complex, multihop topologies.

Additional enhancements include:

- Ability to cache SnapMirror and SnapVault destination volumes in Flash Pool. Flash Pool aggregates can now cache read-only volumes.

- Disaster recovery for FlexClone. Previously, you had to replicate the entire volume from which a FlexClone volume was cloned. Now you only need to replicate the specific FlexClone volume.

- Increased fan-in and fan-out:

  - Fan-in up to 255:1

  - Fan-out up to 1:16

Automated Nondisruptive Upgrade (NDU)

Clustered Data ONTAP 8.3 supports automated, nondisruptive software upgrades. Three commands are all that is needed to bring the Data ONTAP package (obtained from support.netapp.com) into the cluster, do validation to make sure the cluster is prepared for the upgrade, and then perform the upgrade. All downloads, takeovers, and givebacks are performed as part of the automated process. Automated NDU:

- Simplifies your operations

- Saves time by reducing the number of manual commands by 10x

- Reduces the chance of user error

- Frees up time that would otherwise be spent planning the upgrade for more strategic projects

Note that automated NDU is a feature of Data ONTAP 8.3. Once you upgrade to 8.3, you can use it for all future upgrades.

DataMotion for LUNs

DataMotion for LUNs allows you to move a LUN nondisruptively from one cluster volume to another. If you are familiar with DataMotion for Volumes or vol move—which is one of the most appreciated features of clustered Data ONTAP—DataMotion for LUNs is conceptually similar. However, it uses a new engine inside clustered Data ONTAP designed for moving and copying data objects. (It’s also used for moving VVOLs.)

What makes this new engine powerful is that it provides instantaneous cutover. Immediately after a request is made to move a LUN, that LUN becomes available on the destination node. Writes go to the destination node, while reads are pulled across the cluster interconnect from the source. This means load on the source node is immediately reduced because it is not processing writes.
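The read and write behavior described above can be pictured with a small model. This is a conceptual sketch, not NetApp's implementation: the class `MovingLun`, its block-level dictionaries, and the `source_reads` counter are all hypothetical, and it models only the two rules stated in the text (writes land on the destination, reads of data not yet on the destination are pulled from the source).

```python
from typing import Dict


class MovingLun:
    """Toy model of a LUN that is served from the destination during a move."""

    def __init__(self, source_blocks: Dict[int, bytes]) -> None:
        self._source = dict(source_blocks)       # data still held on the source node
        self._destination: Dict[int, bytes] = {}  # data written since cutover
        self.source_reads = 0                     # reads pulled over the interconnect

    def write(self, block: int, data: bytes) -> None:
        """Writes go to the destination only; the source sees no write load."""
        self._destination[block] = data

    def read(self, block: int) -> bytes:
        """Serve from the destination when possible, else pull from the source."""
        if block in self._destination:
            return self._destination[block]
        self.source_reads += 1
        return self._source[block]


lun = MovingLun({0: b"old-0", 1: b"old-1"})
lun.write(1, b"new-1")    # lands on the destination
latest = lun.read(1)      # served by the destination
untouched = lun.read(0)   # pulled from the source
```

In this model the source node only ever serves reads for data that has not yet been rewritten, which matches the point that its load drops as soon as the move begins.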

Two important points to note:

- Deduplication efficiency is lost when a LUN is moved until the deduplication scanner is run on the destination node.

- Snapshots of the LUN remain on the source volume.

Updated Transition Tool


If you’re excited about all the capabilities of clustered Data ONTAP 8.3, you’ll be happy to know that we’ll also be releasing 7-Mode Transition Tool version 2.0 to simplify migration from 7-Mode.

You may have read about 7MTT in the recent Tech OnTap article, How to Move from 7-Mode to Clustered Data ONTAP. There’s still a lot of valuable information in that article, but keep an eye out for an update on the entire transition process from the same authors in the next few issues.

This version includes all of the capabilities found in previous versions of the tool as well as a variety of new capabilities. The most important new feature of 7MTT version 2.0 is the ability to transition SAN environments. Previous versions of the tool supported only NAS protocols. Version 2.0 also supports migration of 7-Mode MetroCluster to a clustered Data ONTAP MetroCluster environment.

Getting Started


Because of its many new features including advanced drive partitioning, MetroCluster, and IPspaces, plus increased performance and other enhancements, we expect Data ONTAP 8.3 to be compelling both for existing clustered Data ONTAP users, and for those of you who have been waiting for the right time to make the move to clustered Data ONTAP.

There are two things to bear in mind before you think about upgrading:

- As mentioned in the introduction, Data ONTAP 8.3 supports only cluster operation; it does not support 7-Mode.

- Data ONTAP 8.3 supports only 64-bit aggregates. These provide larger aggregate and volume sizes and enable advanced features including compression, Flash Pool, and storage-efficient vaulting. More than 100,000 installed NetApp systems are already using 64-bit aggregates.

  - In-place, nondisruptive expansion to 64-bit aggregates is supported in Data ONTAP 8.1.4P4 and 8.2.1 and higher. It does not require any additional drives.

  - 32-bit aggregates must be converted before an upgrade to 8.3.

  - 32-bit Snapshot copies must be deleted or aged out prior to upgrading to 8.3. (By definition the metadata is read-only and cannot be upgraded to 64-bit.)

  - SnapMirror and SnapVault relationships also require 64-bit aggregates.

To prepare for Data ONTAP 8.3, you should:

- Upgrade to 8.2.1 or later and convert any remaining 32-bit aggregates to 64-bit as soon as possible.

- Age out or delete any 32-bit Snapshot copies before upgrading to 8.3.

(The snap list -fs-block-format command can be used to identify 32-bit Snapshot copies.)
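If you track your aggregates in an inventory, the preparation steps above reduce to a simple check. The sketch below is illustrative only: the `Aggregate` record, its field names, and the `upgrade_blockers` function are hypothetical and do not correspond to any Data ONTAP API, and the inventory data would have to come from your own tooling or from command output.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Aggregate:
    """Hypothetical inventory record for one aggregate."""
    name: str
    block_format: str  # "32-bit" or "64-bit"
    snapshot_formats: List[str] = field(default_factory=list)


def upgrade_blockers(aggregates: List[Aggregate]) -> List[str]:
    """List the items that must be resolved before upgrading to 8.3."""
    blockers = []
    for aggr in aggregates:
        if aggr.block_format == "32-bit":
            blockers.append(f"{aggr.name}: convert aggregate to 64-bit")
        old_snapshots = sum(1 for fmt in aggr.snapshot_formats if fmt == "32-bit")
        if old_snapshots:
            blockers.append(
                f"{aggr.name}: delete or age out 32-bit Snapshot copies ({old_snapshots})"
            )
    return blockers


inventory = [
    Aggregate("aggr0", "64-bit", ["64-bit"]),
    Aggregate("aggr1", "32-bit", ["32-bit", "64-bit"]),
    Aggregate("aggr2", "64-bit", ["32-bit"]),
]
blockers = upgrade_blockers(inventory)
for item in blockers:
    print(item)
```

An empty result means neither of the two conditions listed above applies to the inventory you supplied; it says nothing about other upgrade prerequisites.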
