VMware Solutions Discussions

So confused about vSphere round-robin iSCSI Multipathing...

Hi all,

Hope any of the gurus on this community can help clarify something for me...

Essentially, I would like to know if it's possible to do vSphere iSCSI NMP with only 2 NICs per FAS node and without stack-capable switches (i.e., no cross-switch EtherChannel).

Hypothetical Example:

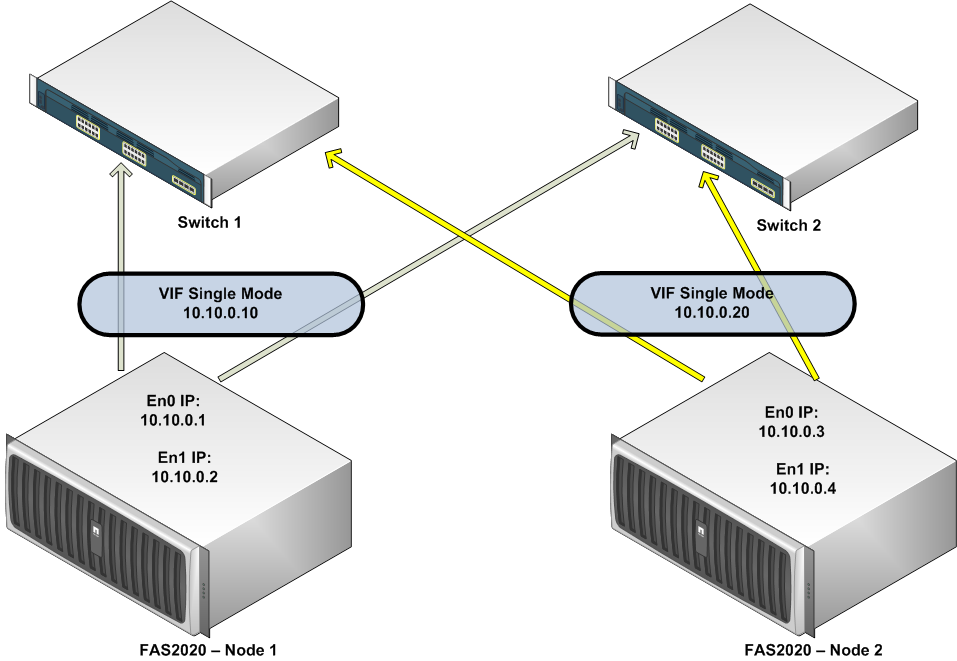

In this case the core switches don't support any kind of stacking at all, and therein lies the problem.

Because of this, I know that if I want a minimum of safety (redundancy) I have to connect the 2 NICs on each FAS node to different switches, one NIC per switch.

Example:

Now, I know that in this configuration I only have failover capacity between each NIC on the FAS nodes. But that’s just it, I would like to know if it’s possible using this setup to have multipathing iSCSI using round-robin NMP on vSphere.

Let’s say I configure 2 VMkernel NICs on one ESX vSwitch using IPs from our example LAN (10.10.0.6 and 10.10.0.7), bearing in mind that the 2 NICs on the vSphere host will be connected in a manner similar to the FAS nodes: one cable to each of the 2 switches.

Now, if I specify round-robin on the 2 VMkernel NICs and then use the VIFs as iSCSI targets, will I get multipathing to each node? That is, will the vSphere NMP automatically balance the paths?

Or do I have to create 1 VIF alias on each node (as suggested by the “vSphere SAN Configuration Guide”) and then use those 4 VIFs as iSCSI targets?

Confused? You bet

Thanks in advance

Kind Regards,

Hi & welcome to the forums!

As far as I know, some form of cross-stack port aggregation on the switching side is essential. In the Cisco world that would be cross-stack EtherChannel (the 3750G is the lowest model supporting this).

Without this you are looking at a single active path, I am afraid.

Regards,

Radek

Hi Radek,

Thanks for the answer. Actually, I have Andy Banta over at the VMware communities saying that this is indeed possible...

I guess the main question is whether a single-mode VIF in fact allows for 2 active paths, or whether one of the NICs is just a standby in this kind of configuration...

Regards,

Cross-stack port aggregation is required on the NetApp side: a VIF needs to be fooled into thinking it is talking to the same physical switch in order to make multiple paths active.

It's nothing unusual from my experience with other iSCSI vendors (e.g. LHN has exactly the same requirement)

Regards,

Radek

If you plan to use iSCSI with vSphere, I would recommend you enable support for multiple TCP sessions. In this mode all links from the ESX/ESXi hosts will have path resiliency handled by the RR PSP.

The storage side will be dictated by the capabilities of your switches: whether your switch provides a means of 'Multi-Switch Link Aggregation' (such as the Nexus Virtual Port Channels, the Catalyst 3750 Cross-Stack EtherChannel, or the Catalyst 6500 w/ VSS 1440 Multi-Chassis EtherChannel), or whether you have traditional (aka 'dumb') Ethernet switches.

Once you have identified your switching capabilities you can implement EtherChannel (or VIFs) on the NetApp. I'd suggest LACP if your switch supports it. With 'Multi-Switch Link Aggregation' you create a single LACP VIF from storage to multiple ports on the switches. With traditional Ethernet switches you will create Single Mode VIFs (active/passive links) across the switches.
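As a rough illustration of the decision described above, here is a minimal Python sketch. The enum, the function name, and the "static multimode" fallback for switches without LACP are assumptions made for illustration; they are not taken from TR-3749 or from any NetApp tool.

```python
from enum import Enum


class SwitchCapability(Enum):
    # e.g. Nexus vPC, Catalyst 3750 cross-stack EtherChannel, Catalyst 6500 VSS MEC
    MULTI_SWITCH_LAG = "multi-switch link aggregation"
    # traditional ("dumb") Ethernet switches with no cross-switch aggregation
    TRADITIONAL = "traditional"


def recommend_vif(capability: SwitchCapability, lacp_supported: bool = True) -> str:
    """Return a hypothetical recommendation string for the storage-side VIF."""
    if capability is SwitchCapability.MULTI_SWITCH_LAG:
        # Assumption: fall back to a static multimode VIF when LACP is unavailable.
        mode = "LACP" if lacp_supported else "static multimode"
        return f"{mode} VIF spanning both switches (all links active)"
    # Without cross-switch aggregation, only an active/passive VIF can span switches.
    return "single-mode VIF across the switches (one link active, one standby)"


print(recommend_vif(SwitchCapability.MULTI_SWITCH_LAG))
print(recommend_vif(SwitchCapability.TRADITIONAL))
```

The point of the sketch is only that the switch capability, not the array, decides which VIF type is available.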

More info is available in TR-3749 (vSphere) and TR-3428 (VI3).

Note: we are in the middle of a major rewrite of TR-3749, which will be available on January 26th, 2010 (as a part of our press release).

Cheers,

Vaughn Stewart

Hi Stewart,

Thanks for that.

These are standard switches.

If I create single-mode VIFs, it is my understanding that this will only allow a single active NIC on the FAS node. Since a FAS2020 has only 2 NICs per node, this would make any kind of multipathing impossible...

Do I have this right?

Regards,

I need a bit more info...

If you are only doing vSphere iSCSI on these arrays, there is a simple solution. Enable multiple TCP sessions for iSCSI in your ESX/ESXi hosts. The NetApp links are just standard, non-EtherChanneled links (aka no VIFs).

The vSphere NMP will handle I/O load balancing and path resiliency via the RR PSP.
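To make the idea concrete, here is a minimal Python sketch of round-robin path selection over plain, un-aggregated links. It is a toy model, not the VMware RR PSP: the class and function names are made up, the two target IPs (10.10.0.10 and 10.10.0.11) are hypothetical, and the sketch rotates on every request, whereas the real PSP switches paths after a configurable number of I/Os.

```python
from itertools import product


def build_paths(vmk_ips, target_ips):
    """Every VMkernel port paired with every target IP is one path."""
    return list(product(vmk_ips, target_ips))


class RoundRobinSelector:
    """Toy round-robin selector that skips paths whose target IP is down."""

    def __init__(self, paths):
        self.paths = list(paths)
        self.failed = set()
        self._next = 0

    def mark_target_down(self, target_ip):
        # A failed FAS NIC takes down every path that ends on its IP.
        self.failed.update(p for p in self.paths if p[1] == target_ip)

    def next_path(self):
        # Try each path at most once, starting from the rotation pointer.
        for _ in range(len(self.paths)):
            path = self.paths[self._next]
            self._next = (self._next + 1) % len(self.paths)
            if path not in self.failed:
                return path
        raise RuntimeError("no live paths")


# VMkernel IPs from the original question; target IPs are hypothetical.
paths = build_paths(["10.10.0.6", "10.10.0.7"], ["10.10.0.10", "10.10.0.11"])
selector = RoundRobinSelector(paths)
print([selector.next_path() for _ in range(4)])   # all four paths in turn
selector.mark_target_down("10.10.0.10")
print([selector.next_path() for _ in range(2)])   # only paths to 10.10.0.11 remain
```

With two VMkernel ports and two target IPs there are four paths, and losing one target NIC leaves two, which is why no VIF is needed for the host to keep its storage.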

The key question here is whether you need access to the FAS from your public (non-storage) network. If so, you will need to either add ports for management or other access, or allow your prod network to route into your storage network.

Cheers,

Vaughn

Hi,

I have no problem allowing routing between VLANs; in modest deployments we always do that.

So, if I get this right:

In the configuration you're suggesting, I would have two isolated NICs on the FAS node, each with its own IP in the same subnet as the 2 VMkernel ports, and I would then use those 2 targets on the vSphere iSCSI initiator?

Or, do I need to configure two completely different subnets/IP in each NIC and also place each VMkernel accordingly?

But in that case, wouldn't I lose any kind of failover on each FAS node? I mean, if one of the FAS node NICs fails, I would lose all access to that target IP, since the surviving node NIC wouldn't serve the failed IP...

I guess that wouldn't be much of a problem since the vSphere RR would just redirect all the initiator requests to the surviving target IP/NIC...

But here's the catch: in the VMware "iSCSI SAN Configuration Guide" there's the following statement:

"The NetApp storage system only permits one connection for each target and each initiator. Attempts to make additional connections cause the first connection to drop. Therefore, a single HBA should not attempt to connect to multiple IP addresses associated with the same NetApp target."

Wouldn't this situation in case of failover qualify as a single HBA "trying to connect to multiple IP addresses associated with the same NetApp target"?

Cheers

"In the configuration you're suggesting, I would have two isolated NICs on the FAS node, each with its own IP in the same subnet as the 2 VMkernel ports, and I would then use those 2 targets on the vSphere iSCSI initiator?"

-- YES --

"But in that case, wouldn't I lose any kind of failover on each FAS node? I mean, if one of the FAS node NICs fails, I would lose all access to that target IP, since the surviving node NIC wouldn't serve the failed IP..."

-- NO -- Controller failover is in ONTAP and each single link has a failover partner defined within the array. Path availability in ESX is handled by the Round Robin Path Selection Policy.

"But here's the catch: in the VMware "iSCSI SAN Configuration Guide" there's the following statement:

"The NetApp storage system only permits one connection for each target and each initiator. Attempts to make additional connections cause the first connection to drop. Therefore, a single HBA should not attempt to connect to multiple IP addresses associated with the same NetApp target.""

-- This doc needs clarification -- Each NetApp IP address is a target, and as such this is how we support Multi-TCP sessions with iSCSI.
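One way to picture this reading is the toy Python model below. It encodes the assumption that the "one connection per target and initiator" rule applies per target IP address, as stated above; it does not reflect how ONTAP actually tracks sessions, and the class name, method names, and IP addresses are all hypothetical.

```python
class TargetSessions:
    """Toy session table: one session per (initiator port, target IP) pair."""

    def __init__(self):
        self._sessions = {}   # (initiator_port, target_ip) -> session id
        self._counter = 0

    def login(self, initiator_port, target_ip):
        """Open a session; return the id of any session it displaced, else None."""
        key = (initiator_port, target_ip)
        dropped = self._sessions.pop(key, None)  # a repeat login drops the old session
        self._counter += 1
        self._sessions[key] = self._counter
        return dropped

    def active(self):
        return sorted(self._sessions)


table = TargetSessions()
table.login("vmk1", "10.10.0.10")   # first target IP
table.login("vmk1", "10.10.0.11")   # second target IP: coexists with the first
print(table.active())
print(table.login("vmk1", "10.10.0.10"))  # repeat login to the same IP displaces session 1
```

Under that assumption, one initiator logging in to two different NetApp IPs holds two sessions at once, and only a second login to the same IP would drop the earlier connection.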

See more at:

http://media.netapp.com/documents/tr-3749.pdf

Cheers,

Vaughn Stewart

NetApp & vExpert

Ho boy, I just realized who you are, this is indeed an honor Sir.

Many thanks Vaughn, this really clarifies a lot of my (wrong) preconceptions...

Alas, I don't fully understand one of your answers:

"-- NO -- Controller failover is in ONTAP and each single link has a failover partner defined within the array. (...)"

When you mention a failover partner, are you referring to the other node and its capability to assume the failed node's identity?

Or are you referring to some internal mechanism of ONTAP that allows a surviving NIC (even without a VIF configuration) to assume the IP of the downed interface (i.e., the IP of e0 is taken over by e1)?

To be quite honest, I find TR-3749 somewhat confusing: it has sections that are quite clear, but others throw me off completely... For instance, whenever there are examples of multipathing without some sort of cross-switch EtherChannel, the guide always suggests using different subnets on the FAS NICs, and as you have demonstrated in this thread, that isn't always necessary...

Cheers and thank you again

Hi Vaughn,

It's really nice to see you around - your input into this thread is *much* appreciated!

I'd like to follow up on this bit:

"If you are only doing vSphere iSCSI on these arrays, there is a simple solution. Enable multiple TCP sessions for iSCSI in your ESX/ESXi hosts. The NetApp links are just standard, non-EtherChanneled links (aka no VIFs)."

Is it specific to vSphere? I mean my installation engineers were always moaning when implementing ESX 3.x, that a lack of cross-stack LACP leads to a single active path only - but apparently that's not the case in vSphere 4 (if I understand what you are saying correctly).

Also - how about NFS? Would that work as well? (I'd guess so, as VMkernel ports are handling the traffic as well, but just double-checking...)

Kindest regards,

Radek

Hi Radek,

Thanks for chiming in.

If I may, I would like to contribute with some answers of my own to your questions...

Regarding your NFS question, we've still to hear back from Vaughn about my last query:

baselinept wrote:

When you mention a failover partner, are you referring to the other node and its capability to assume the failed node's identity?

Or are you referring to some internal mechanism of ONTAP that allows a surviving NIC (even without a VIF configuration) to assume the IP of the downed interface (i.e., the IP of e0 is taken over by e1)?

Kindest regards,

Now, if we assume that ONTAP does not in fact move the failed IP to the surviving NIC on the FAS, then we're left with no choice but to have EtherChannel and/or 4 NICs on each FAS controller.

As you know, in VMware NFS terms you can't connect more than once to the same NFS export/IP combination per host.

So, if you need to multipath from the vSphere host, you would connect to a different "IP/NFS export" for each path in order to use all of your paths (i.e., 2 NICs on the vSphere host). Of course, we could go higher with IP aliases.

Example:

Export A -- IP X on e0

Export B -- IP Y on e1

Therefore, since we have no failover of the IP itself (i.e., no VIF), if a NIC fails on the NetApp you would effectively lose connectivity to the "IP/NFS export" behind that NIC.

Example:

IP X -- Fails

We're left only with "IP Y", and therefore the NFS "Export A" assigned to "IP X" becomes inaccessible.
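The example above can be sketched in a few lines of Python. This is just a model of the reasoning under the stated assumption that ONTAP does not move a failed IP to the surviving NIC; the function name is made up, and the export and IP labels are the placeholders from the example.

```python
def reachable_exports(export_to_ip, live_ips):
    """Return the exports whose serving IP is still up (no IP failover assumed)."""
    return {export for export, ip in export_to_ip.items() if ip in live_ips}


# Mapping from the example: each export is mounted through exactly one IP.
exports = {"Export A": "IP X", "Export B": "IP Y"}

print(reachable_exports(exports, {"IP X", "IP Y"}))  # both exports reachable
print(reachable_exports(exports, {"IP Y"}))          # IP X failed: only Export B remains
```

Unlike the iSCSI case, nothing on the host redirects the mount to another IP, so the datastore behind the failed IP stays down until the NIC returns.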

Hope this makes sense.

Cheers

Yes, it does make sense to me, and it is in line with what I had always heard: that cross-stack EtherChannel (or equivalent) is a must for multiple active paths.

Let's see what others think about this