ONTAP Hardware

FAS2552 performance

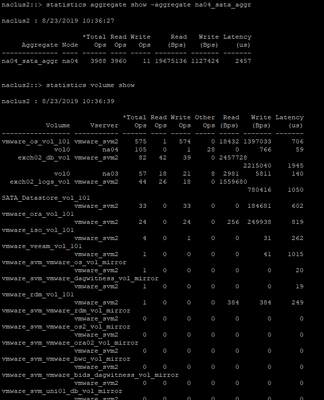

In the screenshot, you can see that the aggregate na04_sata_aggr is averaging around 4000 IOPS. But the IOPS values for its volumes add up to well under 1000. So which stats are right? Or, put another way, where are the other 3000 IOPS going?

Can anyone advise on how to decipher this?

Many thanks

Hi,

It's a very good observation. I'm sure this is something people will notice if they are on cDOT 8.3 onwards.

According to NetApp KBID:1001962, the behavior is as follows:

- In cDOT 8.3 and above, volumes polled for IOPS show only the IOPS from the network blade (N-blade), i.e. the network IOPS of that cluster.

- In cDOT in general, aggregates polled for IOPS show only the IOPS from the data blade (D-blade), i.e. the disk IOPS of that cluster.

Thus, in cDOT 8.3 and above, summing the volume IOPS values will not equal the aggregate IOPS value.

Note: In the past (all the way up to cDOT 8.2), volume polling for IOPS included the D-blade. So at that time, summing the IOPS of all volumes contained in an aggregate would match that aggregate's IOPS.
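As a quick illustration of that arithmetic, here is a minimal Python sketch that sums per-volume IOPS and reports how much of the aggregate's IOPS the volumes do not account for. The function name `unaccounted_iops` and the per-volume figures are hypothetical, chosen only to mirror the roughly 4000 vs. under-1000 pattern in the question; on 8.3 and later the result reflects two different measurement layers, not a counting error.

```python
def unaccounted_iops(aggregate_iops: float, volume_iops: dict[str, float]) -> float:
    """Aggregate IOPS not explained by the sum of the volumes' IOPS.

    On cDOT 8.3+, aggregate IOPS come from the D-blade (disk) while volume
    IOPS come from the N-blade (network), so a nonzero result is expected.
    """
    front_end_total = sum(volume_iops.values())
    return aggregate_iops - front_end_total


# Hypothetical per-volume numbers, for illustration only.
volumes = {"vol_a": 450.0, "vol_b": 300.0, "vol_c": 150.0}
print(unaccounted_iops(4000.0, volumes))  # 3100.0
```

On 8.2 and earlier, where both counters came from the D-blade, this difference would be expected to come out near zero.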

Hope this information helps.

Thanks,

-Ashwin

The aggregate layer and the volume layer are two completely different things. For example, clients might be issuing 64 KB reads while writes are happening at the same time, or two volumes might be reading concurrently, which can turn into a lot of smaller (maybe 8 KB) reads on disk. On top of that you have CP reads, plus snapshot creations and deletions, dedupe, and many other background tasks running against the disks.

Different IOPS values at the two layers are expected: ONTAP/FAS/WAFL is far more feature-packed than a standard storage device/OS, and those features generate disk activity of their own that never shows up as volume IOPS.
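A toy model can show why the disk layer may see several times more ops than clients send. This sketch assumes, purely for illustration, that each client op is split into fixed-size disk I/Os with no coalescing, and that background work (CP reads, snapshot creation and deletion, dedupe) adds a flat count of extra disk ops. `ClientOp`, `estimate_disk_ops`, and the 8 KB disk I/O size are hypothetical; real WAFL I/O scheduling is far more involved.

```python
import math
from dataclasses import dataclass


@dataclass
class ClientOp:
    """A single front-end (volume-level) operation, sized in KB."""
    size_kb: int


def estimate_disk_ops(client_ops: list[ClientOp], disk_io_kb: int = 8,
                      background_ops: int = 0) -> int:
    """Toy estimate of disk-level ops for a batch of client ops.

    Assumes every client op is broken into disk_io_kb-sized pieces with no
    coalescing, then adds a flat count of background ops (CP reads,
    snapshot work, dedupe, ...). Not how ONTAP actually schedules I/O.
    """
    split_ops = sum(math.ceil(op.size_kb / disk_io_kb) for op in client_ops)
    return split_ops + background_ops


# Ten 64 KB client reads, split into 8 KB disk reads, plus 20 background ops.
reads = [ClientOp(size_kb=64) for _ in range(10)]
print(estimate_disk_ops(reads, disk_io_kb=8, background_ops=20))  # 100
```

Here 10 volume-level ops become 100 disk-level ops: the same direction of mismatch as between the volume and aggregate counters, though the real ratio depends entirely on workload.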

Let me know if this helps.

Thanks Ashwin,

but I can't seem to open KBID:1001962, or even find it for that matter.

FYI, to view the content you will have to click Sign In at the top right, then go back to the KB in question. Sorry, that's something we plan to fix in the near future.

Thank you so much, it's really appreciated.