Tech ONTAP Blogs

Demystifying MLOps: Part 2

In part 1 of the MLOps blog series, we discussed the challenges of making AI projects production ready and how MLOps methodologies can automate ML development life cycle management to make the process more efficient. This blog post describes how to implement this in your ML projects using GitHub, Kubeflow Pipelines, Jenkins, and the NetApp AI Data Management platform.

Going forward, we will use a time-series fintech use case as an example and incorporate ML CI/CD for:

- Automatically packaging and deploying the training pipeline, initiated by git merge request approval

- Initiating and logging training runs and notifying the relevant users of their status

- ML versioning as an integral part of the ML life cycle workflow for reproducibility, shareability, and auditability

- Collaboration within and between ML teams

- Automatically and gradually rolling out newly trained model versions to the production server for inferencing, triggered by git merge request approval

- On-demand, automatic provisioning of resources (compute and storage) based on requirements

We will use the DJIA 30 Stock Time Series dataset for this use case. It contains historical stock data for the DJIA 30 companies from 2006-01-01 to 2018-01-01. For each stock (labelled by its ticker name), it contains open, high, low, close, volume, name, and date columns. We will train a neural network to predict stock sentiment.
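To make the record layout concrete, here is a minimal sketch of how one row of such a dataset could be represented and labelled. The header spelling (`Date,Open,High,Low,Close,Volume,Name`), the `StockRow` class, and the `up_day_label` rule are all assumptions made for illustration; the post does not define how sentiment is derived, and the sample values are synthetic.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class StockRow:
    """One daily record for one ticker, mirroring the columns listed above."""
    date: str
    open: float
    high: float
    low: float
    close: float
    volume: int
    name: str


def parse_rows(csv_text: str) -> list:
    """Parse CSV text; the header names used here are an assumption."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        StockRow(
            date=r["Date"],
            open=float(r["Open"]),
            high=float(r["High"]),
            low=float(r["Low"]),
            close=float(r["Close"]),
            volume=int(r["Volume"]),
            name=r["Name"],
        )
        for r in reader
    ]


def up_day_label(row: StockRow) -> int:
    """Hypothetical sentiment label: 1 if the close is above the open, else 0."""
    return 1 if row.close > row.open else 0


# Synthetic values for illustration only; not taken from the real dataset.
SAMPLE_CSV = """Date,Open,High,Low,Close,Volume,Name
2006-01-03,10.0,11.0,9.5,10.8,1000,AAA
2006-01-04,10.8,10.9,10.0,10.2,1200,AAA
"""

rows = parse_rows(SAMPLE_CSV)
labels = [up_day_label(r) for r in rows]
```

A real training component would likely derive its target from a window of past days rather than a single row; the single-row rule is used here only to keep the sketch short.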

Training Phase:

To simplify the flow of a complex ML process, Kubeflow Pipelines provides a component-based pipeline that is modelled as a directed acyclic graph (DAG) and abstracts away the underlying complexity. For further details, please visit AI Workflow Automation with Kubeflow and NetApp.

The training pipeline comprises:

- Consolidate and process data: This component automatically downloads the data from Kaggle, processes it, and stores it in a persistent volume that is dynamically created by NetApp Trident. In other use cases, if the data comes from different sources, you could use this step to consolidate the data before proceeding with the training.

- Training and validation: This component trains the ML model on the consolidated data. You can specify hyper-parameters (learning rate, batch size, etc.) and other run-time parameters (different datasets, model implementations, etc.). ML versioning via NetApp Snapshot is integrated into the pipeline itself; it preserves the state of the experiment by capturing the point-in-time state of the data used and the associated trained model. You can enable or disable it by changing a flag in the MLOps pipeline configuration.
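The component ordering described above can be sketched as a small dependency graph. This is not Kubeflow code: it uses only the Python standard library, and the step names, the `config` keys, and the choice to model snapshotting as a separate step are assumptions made for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical run-time configuration; the key names are assumptions.
config = {
    "learning_rate": 0.001,
    "batch_size": 64,
    "enable_snapshot": True,  # flag that switches ML versioning on or off
}


def build_dag(cfg: dict) -> dict:
    """Map each step to the set of steps it depends on."""
    dag = {
        "consolidate_data": set(),
        "train_and_validate": {"consolidate_data"},
    }
    if cfg["enable_snapshot"]:
        # Snapshot the data and model only after training has finished.
        dag["snapshot_data_and_model"] = {"train_and_validate"}
    return dag


def execution_order(dag: dict) -> list:
    """Return the steps in an order that respects every dependency."""
    return list(TopologicalSorter(dag).static_order())


order = execution_order(build_dag(config))
print(order)
```

Setting `enable_snapshot` to `False` simply drops the last node, which mirrors the idea of toggling versioning through configuration rather than by editing the pipeline code.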

Inference Phase:

We will use TFServe (TensorFlow Serving) as the model serving engine. End users and applications can interact with the deployed models via REST APIs. TFServe is deployed on Kubernetes, which provides auto-scaling and the flexibility to roll out models without disruption. You could also follow canary or A/B testing deployment strategies for more robust model serving.
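As a rough illustration of the REST interaction, the sketch below builds a prediction request in the shape that the TensorFlow Serving REST API expects (`POST /v1/models/<name>:predict` with an `instances` payload). The host name, model name, and input values are placeholders, and port 8501 is only the conventional REST port; your deployment may differ.

```python
import json
import urllib.request


def build_predict_request(host: str, model_name: str, instances: list,
                          port: int = 8501) -> urllib.request.Request:
    """Build (but do not send) a TensorFlow Serving predict request."""
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder host, model name, and feature values.
req = build_predict_request("tfserving.example.internal", "stock_model",
                            [[0.1, 0.2, 0.3]])

# To actually call a running server you would do something like:
# with urllib.request.urlopen(req) as resp:
#     predictions = json.load(resp)["predictions"]
```

The shape of each entry in `instances` has to match the input signature of the served model, so the three-value list here is purely illustrative.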

Apart from TFServe, other model serving solutions are available, such as NVIDIA Triton, KFServing, and Seldon.

ML CI/CD:

We will use GitHub for code versioning. A git pull / merge request, initiated by Data Scientists and Data Engineers for any change to the code base or configuration, asks the team to accept the modifications. Merge request approval goes through a human in the loop (Head of Data Science, Team Lead, etc.), which ensures that each change is rigorously tested and passes other gates, such as code review, alignment with the requested change, and confirmation that the model satisfies the business objectives.

In this use case, we will use multiple git branches for the different phases of the MLOps workflow:

- ds1: Data Scientists, Data Engineers, and other developers push their code to this branch, which acts as the main point of interaction with the repository. If multiple members of your team work on the project, you could have one such branch for each team member.

- build-components: This branch triggers the automatic building of Docker images for all the Kubeflow pipeline components and pushes them to the Docker repository.

- kf-pipeline: This branch is used to compose the Kubeflow pipeline and upload it to the Kubeflow platform.

- training: This branch starts the execution of the Kubeflow pipeline, which begins the training phase. You can pass training hyper-parameters and other run-time parameters via the MLOps workflow configuration.

- deploy-model: This branch triggers the rollout of the trained model to the serving engine (TFServe in our case). If needed, we can also roll back to a previous version of the model by going back to a known good merge request.

- main: This branch acts as the official working branch. All the changes eventually get merged back into this branch to show the current state of the repository.

Once the merge request is approved for a particular branch, Jenkins automatically starts executing the jobs related to the respective branch. You could also include automated tests as part of the Jenkins build process. To handle multiple git branches, Jenkins provides the Multibranch Pipeline plugin.
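The branch-to-job relationship can be pictured as a simple lookup. The sketch below is not Jenkins configuration; it is a plain Python illustration, and the assumption that merges into `ds1` and `main` trigger no job is ours, since the post does not say what Jenkins does for those branches.

```python
from typing import Optional

# Job descriptions paraphrase the branch list above.
BRANCH_JOBS = {
    "build-components": "build and push Docker images for the pipeline components",
    "kf-pipeline": "compose the Kubeflow pipeline and upload it",
    "training": "start a Kubeflow pipeline run",
    "deploy-model": "roll out the trained model to the serving engine",
}


def job_for_merged_branch(branch: str) -> Optional[str]:
    """Return the job triggered by an approved merge, or None if there is none."""
    return BRANCH_JOBS.get(branch)


for branch in ("ds1", "build-components", "training"):
    print(branch, "->", job_for_merged_branch(branch))
```

Keeping the mapping in one place makes it easy to see that each approved merge triggers exactly one kind of job.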

You could use a single branch and automate the whole process, but we use merge requests to keep human intervention in place. Every request is signed off by a real person to ensure that ML life cycle management is auditable and abides by data governance regulations.

Complete MLOps Workflow:

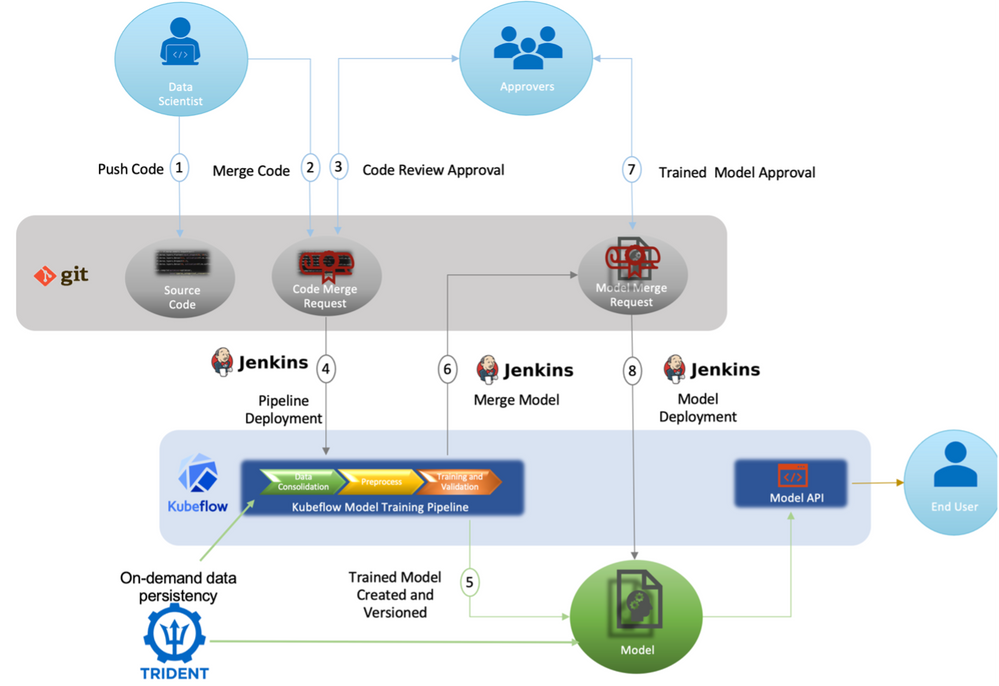

Now it’s time to look in detail at how this orchestra of tools and methodologies works together.

The schema above (a simplified version) outlines the process. The numbers on the lines depict the order of the flow:

- Data Scientists, Data Engineers, and ML Engineers write the data processing, ML model, and Kubeflow pipeline code and push it to their respective branch (i.e., ds1) in the git repository

- The contributor creates a pull / merge request on the build-components branch, explaining the changes

- A designated approver (Team Lead, Senior Data Scientist, etc.) reviews this request for merging

- After the pull / merge request is approved, Jenkins executes the jobs for the build-components branch only. It runs a few tests and, if everything works, builds the Docker images for the Kubeflow pipeline components and pushes them to the Docker repository

- An ML Engineer creates a pull / merge request on the kf-pipeline branch to update the Kubeflow pipeline

- The approver reviews and approves the merge request on the kf-pipeline branch

- After the approver approves the request, Jenkins executes the Kubeflow pipeline compose job and uploads the pipeline to the Kubeflow platform. Jenkins also updates the kf-pipeline branch with the Kubeflow pipeline ID that is generated during the upload

- A Data Scientist or ML Engineer creates a pull / merge request on the training branch to start training on the Kubeflow platform using the Kubeflow pipeline. The configuration can be updated to deploy multiple experiments with different sets of parameters. It can also specify whether to automatically create a new persistent volume and consolidate / download the data, or to use existing data, by leveraging the NetApp Trident persistent volume provisioner

- The approver reviews and approves the merge request on the training branch

- Following the merge request approval, Jenkins deploys the training job to Kubeflow as a pipeline run instance

- When the training job completes, it produces a trained model with associated model metrics (e.g., train / validation accuracy, AUC, etc.). The training data and the generated model are snapshotted for ML versioning using NetApp Snapshot and linked with the git commit ID that was responsible for initiating the training job. This preserves the data and model lineage, together with the code and configuration used

- Next, the model metrics and the trained model reference (the volume name and the path where the model is stored) are automatically pushed back to the training branch via Jenkins. If the new model satisfies the business requirements (KPIs), then a pull / merge request is created on the deploy-model branch

- After reviewing the model evaluation, the approver merges the request into the deploy-model branch

- Finally, Jenkins triggers the deployment of the newly trained model to the production environment and instructs the TFServe model serving engine to serve the newly deployed model without any downtime
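The lineage and KPI steps in the flow above can be sketched as a small record plus a gate function. Everything named here (`TrainingRecord`, `meets_kpis`, the field names, the thresholds, and the metric values) is hypothetical and chosen for illustration; the actual format that Jenkins pushes back to the training branch is not described in this post.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TrainingRecord:
    """Ties a training run to its code, data snapshot, and model location."""
    git_commit_id: str   # commit that initiated the training job
    snapshot_name: str   # snapshot of the training data and model
    volume_name: str     # volume where the model is stored
    model_path: str      # path to the model inside that volume
    metrics: dict        # e.g. validation accuracy, AUC


def meets_kpis(metrics: dict, thresholds: dict) -> bool:
    """True only if every required metric is present and at or above its minimum."""
    return all(
        metrics.get(name, float("-inf")) >= minimum
        for name, minimum in thresholds.items()
    )


# Placeholder values, not real results.
record = TrainingRecord(
    git_commit_id="0123abc",
    snapshot_name="run-0123abc",
    volume_name="training-data-pvc",
    model_path="/models/stock/1",
    metrics={"val_accuracy": 0.80, "auc": 0.85},
)
thresholds = {"val_accuracy": 0.75, "auc": 0.80}

print(json.dumps(asdict(record), indent=2))
if meets_kpis(record.metrics, thresholds):
    print("KPIs met: open a merge request on deploy-model")
```

Storing the commit ID next to the snapshot name is what lets a reviewer trace a deployed model back to the exact code, configuration, and data that produced it.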

Instead of using three intermediate branches (build-components, kf-pipeline, and training), you could use a single branch for building the components, composing and uploading the Kubeflow pipeline, and starting the training on Kubeflow. However, that means going through every stage even when nothing has changed for that stage. For example, the Docker images and the Kubeflow pipeline may be the same while only the training parameters (learning rate, mini-batch size, etc.) change, or the Docker images (components) may be updated while the Kubeflow pipeline and training parameters remain the same. Having separate branches makes it easy to keep track of the changes and to trigger only the relevant jobs.
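The trade-off can be made concrete with a short sketch that compares which stages run under each layout. The change categories (`components`, `pipeline`, `training_params`) and the function name are assumptions introduced for this example.

```python
# Stages in the order they appear in the workflow.
STAGES = ["build-components", "kf-pipeline", "training"]

# Hypothetical change categories and the branch each one maps to.
CHANGE_TO_BRANCH = {
    "components": "build-components",
    "pipeline": "kf-pipeline",
    "training_params": "training",
}


def stages_to_trigger(changed: set, single_branch: bool = False) -> list:
    """Return the stages that run for a given set of changes."""
    if single_branch:
        # One branch for everything: any change re-runs every stage.
        return list(STAGES) if changed else []
    wanted = {CHANGE_TO_BRANCH[c] for c in changed}
    return [stage for stage in STAGES if stage in wanted]


print(stages_to_trigger({"training_params"}))
print(stages_to_trigger({"training_params"}, single_branch=True))
```

With separate branches, a change to the learning rate alone triggers only the training stage, whereas the single-branch layout rebuilds the images and re-uploads the pipeline as well.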

If you are interested in trying it, be sure to check out the Git repository. You could also visit the NVIDIA GTC 2021 session E31919, "From Research to Production: Effective Tools and Methodologies for Deploying AI Enabled Products", where you will find a demo along with a basic walkthrough.

To learn about NetApp AI solutions, visit www.NetApp.com/ai.