Active IQ Unified Manager Discussions

Question on valid command capability

Hello,

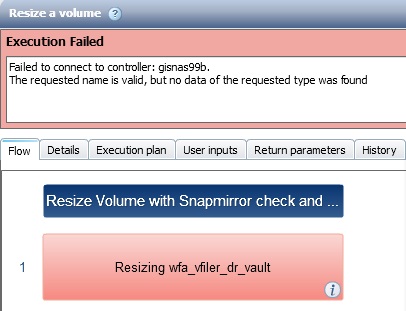

I am trying to create a command that automatically identifies a volume with a SnapMirror relationship, but I get this rather generic error:

Failed to connect to controller: <CONTROLLER>.

The requested name is valid, but no data of the requested type was found

Running workflows directly against this controller works fine, and the "Test Connectivity" option returns a successful connection.

This is all 7-Mode on WFA 2.1.0.70.32, build 2178337.

I suspect that I cannot connect to one controller and execute code, then, farther down in the same command, connect to a different controller and execute code there. This is basically a port of a PowerShell script that I am using, in the hope of getting it into WFA for official logging.

Thanks,

Scott

Hi Anil,

I found the issue this morning and am posting full details for everyone to see.

I was getting this error:

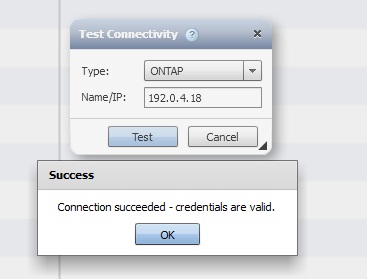

But connection tests succeed:

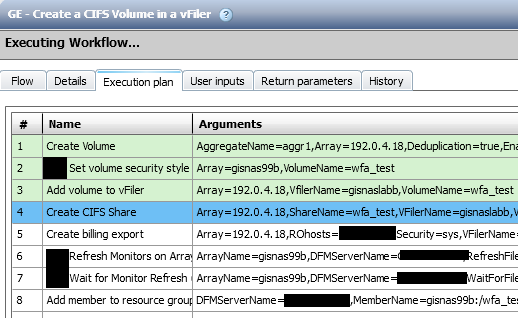

Certain jobs ran without fail, but I found one that did fail:

Note the inconsistency between lines: on some lines WFA uses 192.0.4.18 (my lab frame IP), and on others it switches to gisnas99b (the lab frame hostname). Any workflow that was entirely IP-based passed; anything that used the hostname failed. Because that frame has multiple IPs, I updated my hosts file, and all is now well. Something for others to note if they hit this issue.
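For anyone who wants to check this before running a workflow, here is a minimal sketch in Python (not WFA's own PowerShell) that reports which controller names fail to resolve on the machine where it runs. The helper names `resolve` and `unresolved` are made up for illustration, and it assumes that the "requested name is valid, but no data of the requested type was found" message comes from an ordinary name-resolution failure.

```python
import socket


def resolve(name):
    """Return the sorted unique addresses `name` resolves to, or [] on failure."""
    try:
        # getaddrinfo consults the same system resolver (hosts file, DNS)
        # that most applications on the machine use.
        infos = socket.getaddrinfo(name, None)
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the address string.
    return sorted({info[4][0] for info in infos})


def unresolved(names, resolver=resolve):
    """Return the names for which the resolver finds no address."""
    return [name for name in names if not resolver(name)]
```

Calling `unresolved(["192.0.4.18", "gisnas99b"])` on the WFA server would list any name that still needs a hosts or DNS entry. An IP literal always resolves to itself, which matches the observation that IP-only workflows passed.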

-Scott

Scott,

I strongly suspect that this is caused by one of the filters that populate parameters in the workflow.

In WFA 2.1, we had filters like these:

    SELECT
        aggr.name,
        array.ip AS 'array.ip'
    FROM
        storage.aggregate AS aggr,
        storage.array AS array
    WHERE
        aggr.array_id = array.id
        AND array.name = '${array_name}'

In WFA 2.2, all filters that use Array have been changed to look like this:

    SELECT
        aggr.name,
        array.ip AS 'array.ip'
    FROM
        storage.aggregate AS aggr,
        storage.array AS array
    WHERE
        aggr.name = '${aggr_name}'
        AND aggr.array_id = array.id
        AND (
            array.ip = '${array_host}'
            OR array.name = '${array_host}'
        )

Basically, the "array" input can take either an IP address or a hostname.
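The difference between the two filter styles can be tried out with a small sketch. This is not the WFA cache database: it uses an in-memory SQLite database from Python, simplified table names (`aggregate`, `array`), a made-up aggregate name `aggr0`, and bound parameters in place of the `${...}` substitution, all of which are assumptions for illustration only.

```python
import sqlite3

# Filter in the 2.1 style: matches the array by name only.
NAME_ONLY = """
    SELECT aggr.name, arr.ip AS array_ip
    FROM aggregate AS aggr, array AS arr
    WHERE aggr.array_id = arr.id
      AND arr.name = ?
"""

# Filter in the 2.2 style: matches the array by IP or by name.
NAME_OR_IP = """
    SELECT aggr.name, arr.ip AS array_ip
    FROM aggregate AS aggr, array AS arr
    WHERE aggr.array_id = arr.id
      AND (arr.ip = ? OR arr.name = ?)
"""


def build_cache():
    """Create a toy stand-in for the cache tables with one array and one aggregate."""
    db = sqlite3.connect(":memory:")
    db.executescript("""
        CREATE TABLE array (id INTEGER PRIMARY KEY, name TEXT, ip TEXT);
        CREATE TABLE aggregate (id INTEGER PRIMARY KEY, name TEXT, array_id INTEGER);
        INSERT INTO array VALUES (1, 'gisnas99b', '192.0.4.18');
        INSERT INTO aggregate VALUES (1, 'aggr0', 1);
    """)
    return db


def lookup(db, query, host):
    """Run a filter, binding the same host value to every placeholder."""
    params = (host,) * query.count("?")
    return db.execute(query, params).fetchall()
```

With this toy data, `lookup(db, NAME_ONLY, "192.0.4.18")` finds nothing, while `lookup(db, NAME_OR_IP, ...)` returns the same row for either `"192.0.4.18"` or `"gisnas99b"`. Whichever form the filter matched on, the controller still has to be reachable under the name or IP that the command finally receives.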

Since you say that you have tried adding the entry to the hosts file, I suspect this is the cause.

Can you successfully test-run the command against your controller (not as part of a workflow)?

If it is only a specific workflow or set of workflows, I guess an ASUP would be needed to help figure it out.

Thanks Anil,

I am good to go at this point with the hosts entry. The problem appeared because some of the WFA workflows/commands were not using the IP, and no hosts entry existed for gisnas99b. gisnas99a, the source, worked without issue because it was listed in the hosts file. The command is still broken, but the workflow is fine now. This was especially confusing because the workflow ran fine with the source and destination reversed, owing to the way the workflow starts: it calls the source by IP, and the destination is returned within the command by hostname. So 192.0.4.18 => gisnas99a worked, as WFA had a hosts entry for gisnas99a, but 192.0.4.17 => gisnas99b failed with that error because gisnas99b was not in the hosts file.

- Scott

Glad it is working for you, Scott.

Cheers,

Anil