Active IQ Unified Manager Discussions

Wrong QoS counters

Hi,

I set up several QoS policy groups with the limit set to INF.

It worked fine for a while (monitored with Harvest).

Yesterday I noticed that some counters are providing nonsense values:

    qos statistics performance show -refresh-display true -rows 20

    Policy Group             IOPS      Throughput    Latency
    -------------------- -------- --------------- ----------
    -total-               3654789    61343.16MB/s    11.55ms
    User-Best-Effort      3315730    56823.18MB/s    11.14ms
    SPLUNK                 141786      520.79MB/s    29.78ms
    _System-Work            51393        6.29MB/s   151.00us
    T1RESI                  44773     1990.19MB/s   323.00us
    D1ECM                   41502     1875.59MB/s     2.53ms
    saelkes3565             36951           0KB/s    19.66ms
    W5E                      6972       60.17MB/s     6.94ms
    WE5                      3542       10.88MB/s    10.75ms
    WQ5                      3169       10.76MB/s    10.86ms
    I2I                      3132        9.42MB/s     9.24ms
    W4Q                      2124       18.85MB/s     3.35ms
    WQ4                      1487        9.15MB/s    15.28ms
    T1MODEL                   814        3.11MB/s  1029.00us
    _System-Best-Effort       750           0KB/s        0ms
    S4D                       361        4.63MB/s   725.00us
    T1INSTRA                  250       20.57KB/s   588.00us
    MDMT                       18       36.00KB/s     1.73ms
    TEO                        13           0KB/s   149.00us
    P1ACRMDB                   12       16.00KB/s        0ms
    T1WFM                       6       16.00KB/s   777.00us

The whole node is doing 61 GB/s? With SATA?

saelkes3565 is limited to 1000 IOPS (100% getattr).

SPLUNK is limited to 1000 IOPS (90% getattr).
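To make the mismatch concrete, here is a small Python sketch that compares the reported IOPS with the configured limits. The observed values are copied from the CLI output above and the limits from this post; the helper name `over_limit` is made up for illustration and is not part of ONTAP or Harvest.

```python
from typing import Dict

# Observed IOPS, copied from the `qos statistics performance show` output.
observed_iops: Dict[str, int] = {"saelkes3565": 36951, "SPLUNK": 141786}

# Configured QoS limits, as stated in the post.
limit_iops: Dict[str, int] = {"saelkes3565": 1000, "SPLUNK": 1000}


def over_limit(observed: Dict[str, int], limits: Dict[str, int]) -> Dict[str, float]:
    """Return observed/limit ratios for policy groups reported above their limit.

    Hypothetical helper for illustration only.
    """
    return {
        name: observed[name] / limit
        for name, limit in limits.items()
        if observed.get(name, 0) > limit
    }


if __name__ == "__main__":
    for name, ratio in over_limit(observed_iops, limit_iops).items():
        print(f"{name}: reported IOPS is {ratio:.1f}x the configured limit")
```

Both policy groups are reported far above a limit that QoS should be enforcing, which is why the counter values look wrong rather than merely high.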

In Grafana, most of the time I see no values at all for the affected counters.

We are running 8.3.1P1 here.

The cluster is heavily loaded. Could this be the problem?

Marcus

Hi @marcusgross

I haven't seen this strange behavior from the CLI statistics command before, and its output should be accurate regardless of cluster load. Could it be that you have nested QoS policies defined, for example a policy applied at the SVM level and another at the volume or LUN/file level? Such a configuration is not supported and might cause oddness like this.

For data not showing up in Grafana, if it is very low IO it could be the latency_io_reqd feature is kicking in. See here for more on it:

Sorry I don't have a better answer. If the problem persists, I recommend opening a support case.

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)

Blog: It all begins with data

If this post resolved your issue, please help others by selecting ACCEPT AS SOLUTION or adding a KUDO or both!

Hi Chris,

We don't have nested QoS policy groups.

I will open a ticket with NetApp.

Marcus

Hi,

Sometimes the QoS counters show the right values, sometimes not. OCPM works well.

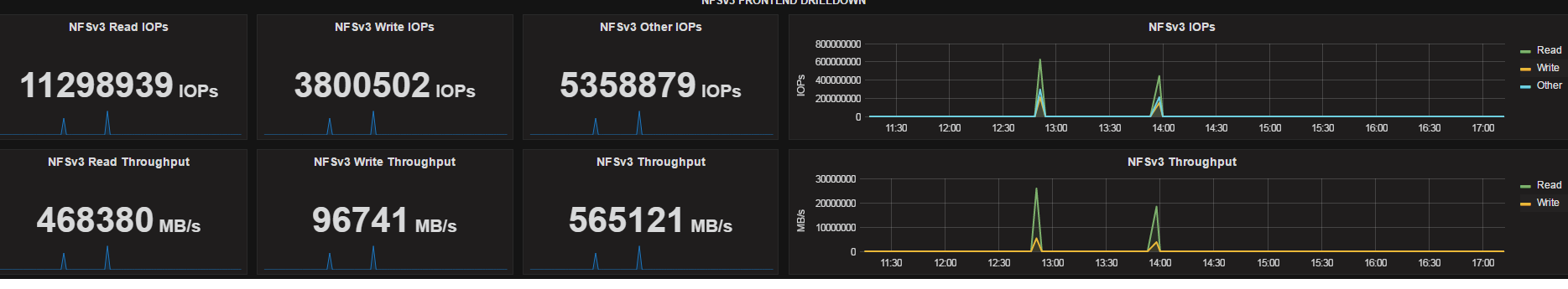

I also noticed that there are some spikes on normal Harvest counters:

Hi @marcusgross

According to the Counter Manager system documentation, counters must be monotonically increasing; in other words, their values must only ever go up. It's kind of like the odometer in a car: you check the value, wait a bit, check it again, and calculate the rate of change from the time passed and the change in the odometer. If the odometer goes backwards, well, that doesn't happen unless you are up to no good.

Anyway, back to ONTAP, if you ever check and the rate of change is negative you are then to assume a reset occurred, likely from a rollover of the counter (i.e. it reached the max size of the data type) or a reset (like a system reboot). In this case you drop the negative sample and on the next one you can compute your change again.

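When I see massive numbers like the ones in your screenshot, it appears as if the values went down temporarily. The negative sample is discarded, but the next sample is then computed against the artificially low value and comes out as a huge spike, so something like this: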

    Time:    T1      T2      T3                 T4
    NFS OPS: 122400  123400  100                123600
    Calc'd:  N/A     1000    -123300 (discard)  123500
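The discard rule and the example above can be sketched in a few lines of Python. This is only an illustration of the logic described here, not Harvest's actual code; the function name `deltas` is made up, and it assumes one time unit between samples so that the delta equals the rate.

```python
from typing import List, Optional


def deltas(samples: List[int]) -> List[Optional[int]]:
    """Per-interval change of a counter that should only ever increase.

    None marks intervals with no usable value: the first sample (no
    predecessor) and any negative change (assumed rollover or reset).
    """
    if not samples:
        return []
    result: List[Optional[int]] = [None]  # first sample has nothing to diff against
    for prev, cur in zip(samples, samples[1:]):
        change = cur - prev
        # A negative change means the counter went backwards: drop the sample.
        result.append(change if change >= 0 else None)
    return result


if __name__ == "__main__":
    nfs_ops = [122400, 123400, 100, 123600]  # raw counter values at T1..T4
    print(deltas(nfs_ops))  # [None, 1000, None, 123500]
```

Note that discarding the negative sample at T3 does not remove the problem: the value at T4 is still computed against the low T3 reading, which is what shows up as a spike in the graphs.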

I've seen it before sporadically at customer sites but haven't had enough data to open a bug. If you run Harvest with the -v flag it will record all the raw data received and we can verify this behavior. The next thing to figure out is what system event caused it. Did anything happen at those timestamps? SnapMirror updates maybe? Cloning?

OPM uses archive files from the system, which is a different collection method. It also uses presets, which are less granular. Since this is a timing issue, I could imagine that those differences somehow avoid the problem.

Cheers,

Chris Madden
