ONTAP Hardware


CIFS capacity shows full in ONTAP Manager


Hi Ladies and Gentlemen,

I have a CIFS share that I started using for my backups. I noticed that one of my backups was eating up all the storage in the share, so I deleted that backup by mapping the CIFS share from a Windows computer. However, even though I deleted the backup, the CIFS share did not reclaim the 5TB of storage space the computer's backup was using. Is there something I can do to reclaim my space? Thank you.


Hi,

Most likely, the space you freed on the 'front end' (by deleting files) is now being held in the 'snapshot' area. So please log in to the NetApp storage system.

Using the GUI or the CLI, log in to the NetApp system, then:

1) Go to the volume hosting the share.

2) Go to the Snapshot tab.

3) Look at the snapshots; you will see the space from the deleted files held there.

Now, if you delete the snapshots, you should be able to free space on the storage side, and it will also show up on the Windows front end.

Thanks!
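To illustrate why deleting the files did not free anything, here is a toy Python model of snapshot block sharing. It is a simplified sketch under an assumption, not ONTAP's actual WAFL implementation: the volume is treated as a set of numbered blocks, and a snapshot just keeps a frozen reference to the blocks that existed when it was taken. The class `ToyVolume` and the file name `backup.vbk` are hypothetical.

```python
# Toy model (NOT ONTAP internals): files own sets of block IDs, and a snapshot
# is a frozen copy of the set of blocks referenced when it was taken.
class ToyVolume:
    def __init__(self):
        self.active = {}       # file name -> set of block IDs in the active filesystem
        self.snapshots = {}    # snapshot name -> set of block IDs it still references
        self._next_block = 0

    def write(self, name, nblocks):
        # Allocate fresh block IDs for a new file.
        blocks = set(range(self._next_block, self._next_block + nblocks))
        self._next_block += nblocks
        self.active[name] = blocks

    def delete(self, name):
        # Removes the file from the active filesystem only; snapshots are untouched.
        del self.active[name]

    def snapshot(self, snap_name):
        self.snapshots[snap_name] = set().union(*self.active.values())

    def delete_snapshot(self, snap_name):
        del self.snapshots[snap_name]

    def blocks_in_use(self):
        # A block stays allocated while the active filesystem OR any snapshot references it.
        in_use = set()
        for blocks in self.active.values():
            in_use |= blocks
        for blocks in self.snapshots.values():
            in_use |= blocks
        return len(in_use)


vol = ToyVolume()
vol.write("backup.vbk", 500)     # hypothetical backup file, 500 blocks
vol.snapshot("hourly.1")
vol.delete("backup.vbk")         # like deleting the file from the Windows share
print(vol.blocks_in_use())       # 500: the snapshot still holds the deleted file's blocks
vol.delete_snapshot("hourly.1")
print(vol.blocks_in_use())       # 0: freed once no snapshot references the blocks
```

In this model a block only returns to free space once neither the active filesystem nor any snapshot refers to it, which is why the space comes back only after the snapshots holding the deleted backup are removed.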


Thank you for your response. I'm a little confused about how this works. My volume is 5TB and my snapshot reserve is 5% of the volume (about 250GB). How can the snapshots be consuming so much space if the reserve is only 5% of 5TB?

Also, there are about 18 snapshots there. Am I supposed to delete all of them to reclaim the space on the back end?

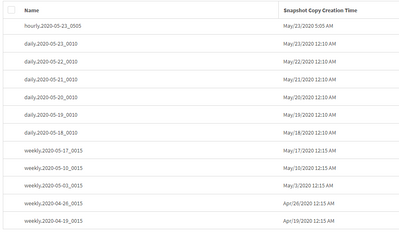

I'm using thick provisioning, and I attached a screenshot of the logs.

Thank you in advance for your explanation and understanding.


Sorry, I forgot to ask which ONTAP version you are running (these days I assume everyone is on cDOT).

If it's cDOT, please give this output:

::> volume show -vserver <vserver_name> -volume <volume_name> -fields total,used,available

::> snapshot show -vserver <vserver_name> -volume <volume_name>

If it's 7-mode:

filer> df -hg <volume_name>

filer> snap list <volume_name>


Thank you for your prompt message. I believe I'm using cDOT, since those commands work for that version.

Below are the outputs you asked for:

Output no. 1

volume show -vserver ccb -volume ccb_CIFS_volume

Vserver Name: ccb

Volume Name: ccb_CIFS_volume

Aggregate Name: Data

List of Aggregates for FlexGroup Constituents: Data

Encryption Type: none

List of Nodes Hosting the Volume: MorganLib-01

Volume Size: 5TB

Volume Data Set ID: 1065

Volume Master Data Set ID: 2161634235

Volume State: online

Volume Style: flex

Extended Volume Style: flexvol

FlexCache Endpoint Type: none

Is Cluster-Mode Volume: true

Is Constituent Volume: false

Export Policy: default

User ID: -

Group ID: -

Security Style: ntfs

UNIX Permissions: ------------

Junction Path: /ccb_CIFS_volume

Junction Path Source: RW_volume

Junction Active: true

Junction Parent Volume: ccb_root

Comment:

Available Size: 0B

Filesystem Size: 5TB

Total User-Visible Size: 4.75TB

Used Size: 4.75TB

Used Percentage: 100%

Volume Nearly Full Threshold Percent: 95%

Volume Full Threshold Percent: 98%

Maximum Autosize: 6TB

Minimum Autosize: 5TB

Autosize Grow Threshold Percentage: 98%

Autosize Shrink Threshold Percentage: 50%

Autosize Mode: off

Total Files (for user-visible data): 21251126

Files Used (for user-visible data): 149

Space Guarantee in Effect: true

Space SLO in Effect: true

Space SLO: none

Space Guarantee Style: volume

Fractional Reserve: 100%

Volume Type: RW

Snapshot Directory Access Enabled: true

Space Reserved for Snapshot Copies: 5%

Snapshot Reserve Used: 1885%

Snapshot Policy: default

Creation Time: Tue Mar 31 12:13:18 2020

Language: C.UTF-8

Clone Volume: false

Node name: MorganLib-01

Clone Parent Vserver Name: -

FlexClone Parent Volume: -

NVFAIL Option: off

Volume's NVFAIL State: false

Force NVFAIL on MetroCluster Switchover: off

Is File System Size Fixed: false

(DEPRECATED)-Extent Option: off

Reserved Space for Overwrites: 0B

Primary Space Management Strategy: volume_grow

Read Reallocation Option: off

Naming Scheme for Automatic Snapshot Copies: create_time

Inconsistency in the File System: false

Is Volume Quiesced (On-Disk): false

Is Volume Quiesced (In-Memory): false

Volume Contains Shared or Compressed Data: true

Space Saved by Storage Efficiency: 0B

Percentage Saved by Storage Efficiency: 0%

Space Saved by Deduplication: 0B

Percentage Saved by Deduplication: 0%

Space Shared by Deduplication: 0B

Space Saved by Compression: 0B

Percentage Space Saved by Compression: 0%

Volume Size Used by Snapshot Copies: 4.71TB

Block Type: 64-bit

Is Volume Moving: false

Flash Pool Caching Eligibility: read-write

Flash Pool Write Caching Ineligibility Reason: -

Constituent Volume Role: -

QoS Policy Group Name: -

QoS Adaptive Policy Group Name: -

Caching Policy Name: auto

Is Volume Move in Cutover Phase: false

Number of Snapshot Copies in the Volume: 17

VBN_BAD may be present in the active filesystem: false

Is Volume on a hybrid aggregate: true

Total Physical Used Size: 5TB

Physical Used Percentage: 100%

Is Volume a FlexGroup: false

SnapLock Type: non-snaplock

Vserver DR Protection: -

Enable Encryption: false

Is Volume Encrypted: false

Volume Encryption State: none

Encryption Key ID:

Application: -

Is Fenced for Protocol Access: false

Protocol Access Fence Owner: -

Is SIDL enabled: off

Over Provisioned Size: 0B

Available Snapshot Reserve Size: 0B

Logical Used Size: 4.75TB

Logical Used Percentage: 100%

Logical Available Size: -

Logical Size Used by Active Filesystem: 293.7GB

Logical Size Used by All Snapshots: 0B

Logical Space Reporting: false

Logical Space Enforcement: false

Volume Tiering Policy: none

Performance Tier Inactive User Data: -

Performance Tier Inactive User Data Percent: -

Output of second command:

| Vserver | Volume | Snapshot | Size | Total% | Used% |
|---|---|---|---|---|---|
| ccb | ccb_CIFS_volume | weekly.2020-04-19_0015 | 5.23GB | 0% | 2% |
| | | weekly.2020-04-26_0015 | 19.32GB | 0% | 6% |
| | | weekly.2020-05-03_0015 | 24.72GB | 0% | 8% |
| | | weekly.2020-05-10_0015 | 22.49GB | 0% | 7% |
| | | weekly.2020-05-17_0015 | 1.21GB | 0% | 0% |
| | | daily.2020-05-18_0010 | 9.91MB | 0% | 0% |
| | | daily.2020-05-19_0010 | 16.49MB | 0% | 0% |
| | | daily.2020-05-20_0010 | 201.6GB | 4% | 41% |
| | | daily.2020-05-21_0010 | 1012KB | 0% | 0% |
| | | daily.2020-05-22_0010 | 868KB | 0% | 0% |
| | | daily.2020-05-23_0010 | 560KB | 0% | 0% |
| | | hourly.2020-05-23_0505 | 472KB | 0% | 0% |
| | | hourly.2020-05-23_0605 | 654.5GB | 13% | 69% |
| | | hourly.2020-05-26_1005 | 3.21TB | 64% | 92% |
| | | hourly.2020-05-26_1105 | 555.3GB | 11% | 65% |
| | | hourly.2020-05-26_1205 | 52.03GB | 1% | 15% |
| | | hourly.2020-05-28_1005 | 2.40MB | 0% | 0% |
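As a quick way to see which of the 17 snapshot copies actually matter, here is a small Python sketch that parses the `snapshot show` rows posted above, converts the sizes to bytes, and ranks them. It assumes ONTAP reports binary units (1TB = 1024GB); the `SNAPSHOT_SHOW` string is just the pasted rows, and the function names are hypothetical.

```python
# Rank the snapshot copies from the posted `snapshot show` output by size.
# ASSUMPTION: ONTAP sizes are binary units (1 TB = 1024 GB, and so on).
UNITS = {"B": 1, "KB": 1024, "MB": 1024**2, "GB": 1024**3, "TB": 1024**4}

SNAPSHOT_SHOW = """\
weekly.2020-04-19_0015 5.23GB 0% 2%
weekly.2020-04-26_0015 19.32GB 0% 6%
weekly.2020-05-03_0015 24.72GB 0% 8%
weekly.2020-05-10_0015 22.49GB 0% 7%
weekly.2020-05-17_0015 1.21GB 0% 0%
daily.2020-05-18_0010 9.91MB 0% 0%
daily.2020-05-19_0010 16.49MB 0% 0%
daily.2020-05-20_0010 201.6GB 4% 41%
daily.2020-05-21_0010 1012KB 0% 0%
daily.2020-05-22_0010 868KB 0% 0%
daily.2020-05-23_0010 560KB 0% 0%
hourly.2020-05-23_0505 472KB 0% 0%
hourly.2020-05-23_0605 654.5GB 13% 69%
hourly.2020-05-26_1005 3.21TB 64% 92%
hourly.2020-05-26_1105 555.3GB 11% 65%
hourly.2020-05-26_1205 52.03GB 1% 15%
hourly.2020-05-28_1005 2.40MB 0% 0%
"""


def parse_size(text):
    # Check multi-letter suffixes before the bare "B" so "5.23GB" is not misread.
    for unit in ("TB", "GB", "MB", "KB", "B"):
        if text.endswith(unit):
            return float(text[: -len(unit)]) * UNITS[unit]
    raise ValueError(f"unrecognised size: {text}")


def rank_snapshots(listing):
    rows = []
    for line in listing.strip().splitlines():
        name, size, _total_pct, _used_pct = line.split()
        rows.append((name, parse_size(size)))
    return sorted(rows, key=lambda row: row[1], reverse=True)


ranked = rank_snapshots(SNAPSHOT_SHOW)
total = sum(size for _, size in ranked)
for name, size in ranked[:3]:
    print(f"{name:26} {size / UNITS['GB']:9.1f} GB ({size / total:.1%})")
print(f"sum of all sizes: {total / UNITS['TB']:.2f} TB")
```

Run against this output, it ranks hourly.2020-05-26_1005 first, followed by hourly.2020-05-23_0605 (654.5GB) and hourly.2020-05-26_1105 (555.3GB), and in this case the sizes add up to roughly 4.71TB, the same as "Volume Size Used by Snapshot Copies". That suggests a handful of copies hold almost all of the snapshot space, rather than all of them needing to go.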


Thanks for the output. Reading just the key elements:

Filesystem Size: 5TB

Total User-Visible Size: 4.75TB

Used Size: 4.75TB

Volume Size Used by Snapshot Copies: 4.71TB
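These key elements fit together with simple arithmetic, which also explains how a 5% reserve can be exceeded so badly: snapshot data that does not fit in the reserve spills over into the user-visible space. The Python below is a worked check using the posted values. It assumes ONTAP's TB/GB are binary units and that "Used Size" is active-filesystem data plus the snapshot spill; that accounting is an assumption that happens to match these numbers, not a documented formula.

```python
# Worked check of the posted `volume show` fields for ccb_CIFS_volume.
# ASSUMPTIONS: binary units (1 TB = 1024 GB) and Used = active data + snapshot spill.
GB_PER_TB = 1024

volume_size_tb = 5.0     # Volume Size / Filesystem Size
snap_reserve_pct = 5     # Space Reserved for Snapshot Copies
snap_used_tb = 4.71      # Volume Size Used by Snapshot Copies
active_fs_gb = 293.7     # Logical Size Used by Active Filesystem

reserve_tb = volume_size_tb * snap_reserve_pct / 100   # 0.25 TB, i.e. 256 GB
user_visible_tb = volume_size_tb - reserve_tb          # Total User-Visible Size

# Snapshot usage expressed as a percentage of the reserve.
reserve_used_pct = snap_used_tb / reserve_tb * 100

# Snapshot blocks beyond the reserve spill into the user-visible space.
spill_tb = max(0.0, snap_used_tb - reserve_tb)
used_tb = active_fs_gb / GB_PER_TB + spill_tb

print(f"snapshot reserve: {reserve_tb:.2f} TB ({reserve_tb * GB_PER_TB:.0f} GB)")
print(f"user-visible size: {user_visible_tb:.2f} TB")
print(f"snapshot reserve used: {reserve_used_pct:.0f}%")
print(f"snapshot spill: {spill_tb:.2f} TB, used size: {used_tb:.2f} TB")
```

The computed reserve usage comes out at about 1884%, which matches the reported "Snapshot Reserve Used: 1885%" to within the rounding of the displayed values, and the computed used size rounds to the reported 4.75TB.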

You have correctly highlighted two of the snapshots consuming the most space:

hourly.2020-05-26_1005 3.21TB 64% 92%

hourly.2020-05-26_1105 555.3GB 11% 65%

There is a handy command called "compute-reclaimable" which calculates how much volume space would be reclaimed if one or more specified Snapshot copies were deleted, before you actually delete them. However, it makes heavy use of the system's computational resources, so it may reduce performance for client requests and other system processes while it runs the calculation.

::> volume snapshot compute-reclaimable -vserver ccb -volume ccb_CIFS_volume -snapshots hourly.2020-05-26_1005
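One likely reason to run compute-reclaimable rather than just adding up the Size column is that a block shared by several snapshot copies is only freed when all of them are deleted. The toy Python model below is an illustrative assumption, not ONTAP's algorithm; the function `reclaimable` and the snapshot names are hypothetical.

```python
# Toy model of "reclaimable" space (illustration only, not ONTAP's implementation):
# deleting a set of snapshots frees only the blocks that neither the active
# filesystem nor any remaining snapshot still references.
def reclaimable(active, snapshots, to_delete):
    keep = set(active)
    for name, blocks in snapshots.items():
        if name not in to_delete:
            keep |= blocks
    candidates = set()
    for name in to_delete:
        candidates |= snapshots[name]
    return len(candidates - keep)


active = {1, 2}                  # blocks still live in the active filesystem
snapshots = {
    "snap_a": {1, 2, 3, 4},      # taken while blocks 3 and 4 were live
    "snap_b": {1, 2, 4, 5},      # block 3 already deleted, block 5 newly written
    "snap_c": {1, 2},            # taken after blocks 4 and 5 were deleted
}
print(reclaimable(active, snapshots, {"snap_a"}))            # 1 (block 3)
print(reclaimable(active, snapshots, {"snap_b"}))            # 1 (block 5)
print(reclaimable(active, snapshots, {"snap_a", "snap_b"}))  # 3 (blocks 3, 4 and 5)
```

Deleting snap_a and snap_b together frees more than the sum of their individual results, because block 4 is shared by both. That is the kind of effect a multi-snapshot compute-reclaimable run would capture when weighing up deleting the two large hourly copies together.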

If you delete those two snapshots (which seem to be holding the majority of the volume's space), you should be able to free up space.

As a side note: does this volume have a replication partner (Vault) at another location, in case there is a particular file in those snapshots that you might still need? The other option, if you do not want to delete the snapshots, is to increase the volume size, provided there is enough space on the aggregate.

Thanks!
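For the "increase the volume size" option, here is a rough what-if sketch in Python. It reuses the posted values (5TB volume, 5% snapshot reserve, 4.71TB used by snapshots, 293.7GB of active data, and the 6TB Maximum Autosize shown in the output) and assumes that used space is active data plus snapshot data beyond the reserve. That accounting is an assumption that fits the posted numbers, not ONTAP's documented formula, and `available_tb` is a hypothetical helper.

```python
# What-if sketch: available space if ccb_CIFS_volume were grown instead of
# deleting snapshots.
# ASSUMPTION: used = active data + snapshot data that overflows the reserve,
# with binary units (1 TB = 1024 GB). Not ONTAP's exact accounting.
GB_PER_TB = 1024

ACTIVE_TB = 293.7 / GB_PER_TB   # Logical Size Used by Active Filesystem
SNAP_USED_TB = 4.71             # Volume Size Used by Snapshot Copies
RESERVE_FRACTION = 0.05         # Space Reserved for Snapshot Copies: 5%


def available_tb(volume_size_tb):
    reserve = volume_size_tb * RESERVE_FRACTION
    user_visible = volume_size_tb - reserve
    spill = max(0.0, SNAP_USED_TB - reserve)   # snapshot data beyond the reserve
    return max(0.0, user_visible - (ACTIVE_TB + spill))


# Current size, and the 6TB Maximum Autosize from the `volume show` output.
for size in (5.0, 6.0):
    print(f"{size:.0f}TB volume -> about {available_tb(size):.2f}TB available")
```

Under this model, while the snapshots overflow the reserve, each extra TB of volume size turns into roughly one TB of free space, since the larger reserve also absorbs more of the spill. The aggregate still needs that space, and with a volume space guarantee it is taken from the aggregate immediately.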