About performance differences (RAID/Aggregate)

Hi

I'm currently designing the following configuration, but I can't judge how much the design choices will affect performance. If you have any information, I would appreciate your help.

The configuration is as follows.

Model number: AFF A250

Expansion port: MEZZANINE 4-Port 25GbE x2

Disk: 7.6TB, NVMe, SED x18

I am considering one of the following configurations.

■ Composition 1

Number of Aggregates: 1

RAID: 14D + 3P + 1 spare (RAID-TEC)

■ Composition 2

Number of aggregates: 2

RAID: 6D + 2P + 2 spare (RAID-DP)

In Composition 1 there is only one aggregate, so only one node will own the disks, and I assume it will effectively be an active-standby operation.

In Composition 2, I would create one aggregate per node.

However, that falls below the recommended range of 12 to 20 disks per RAID group, so I'm concerned that it might affect performance.

The planned workloads are:

・VMware datastore: 10TiB (NFS, for virtual machines and VDI)

・File server: 20TiB (CIFS/NFS)

・Profile server: 1TiB (CIFS/NFS)

The snapshot area is still under consideration.

Composition 1 has more effective capacity, but I'd like to hear your opinion on which one is better from a performance perspective.
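To put rough numbers on the capacity difference between the two layouts above, here is a minimal sketch that counts data disks only. It assumes whole 7.6 TB (decimal) drives and ignores partitioning, right-sizing, WAFL reserve, and storage efficiency, so the figures are upper bounds rather than usable capacity. The function name `raw_data_tib` is only for illustration.

```python
# Rough comparison of raw data capacity for the two proposed layouts.
# Assumption: whole 7.6 TB (decimal) drives, no partitioning or overhead.

DRIVE_TB = 7.6               # marketed drive size in decimal terabytes
TB_TO_TIB = 1e12 / 2**40     # decimal TB -> binary TiB


def raw_data_tib(data_disks_per_aggr: int, aggregates: int) -> float:
    """Raw capacity of the data disks only, in TiB."""
    return data_disks_per_aggr * aggregates * DRIVE_TB * TB_TO_TIB


# Layout 1: one aggregate, 14D + 3P + 1 spare (RAID-TEC)
composition_1 = raw_data_tib(data_disks_per_aggr=14, aggregates=1)
# Layout 2: two aggregates, each 6D + 2P (RAID-DP), plus 2 spares
composition_2 = raw_data_tib(data_disks_per_aggr=6, aggregates=2)

# Planned volumes: datastore + file server + profile server, in TiB
required_tib = 10 + 20 + 1

print(f"Layout 1: {composition_1:.1f} TiB raw data capacity")
print(f"Layout 2: {composition_2:.1f} TiB raw data capacity")
print(f"Planned volumes: {required_tib} TiB before snapshots")
```

Either layout covers the planned volumes on paper; the difference is two data disks' worth of raw space.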

Thanks.

Composition 3

You WILL be using ADP.

You WILL have 36 data partitions (18 per node).

You should place one aggregate on each node.

Node 1: RAID-DP, RAID size 17, 15D + 2P + 1 spare

Node 2: RAID-DP, RAID size 17, 15D + 2P + 1 spare

Place an NFS datastore for ESX on each aggregate.

In VMware, place them into a Storage DRS cluster with manual movement (but automatic initial placement). Turn off the fully automated mode. As you place VMs in there, VMware will distribute them between both datastores. I lack the VDI knowledge to say whether this same method will work. You may need to create a couple of dedicated volumes for VDI (which usually takes advantage of ONTAP cloning).

For the CIFS server, make a FlexGroup.

Make a single volume for the profile server.
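The partition counts in this layout can be checked with a few lines of arithmetic. This is only a sketch: it assumes root-data-data partitioning gives each of the 18 SSDs exactly two data partitions, one per node, and the variable names are made up for illustration.

```python
# Partition accounting for root-data-data ADP on 18 SSDs.
# Assumption: each SSD contributes exactly two data partitions, one per node.

SSDS = 18
DATA_PARTITIONS_PER_SSD = 2
NODES = 2

total_data_partitions = SSDS * DATA_PARTITIONS_PER_SSD
per_node = total_data_partitions // NODES

# Per-node layout from the post: RAID-DP, RAID size 17, plus one spare.
layout = {"data": 15, "parity": 2, "spare": 1}
raid_size = layout["data"] + layout["parity"]

# Every partition a node owns should be accounted for by the layout.
assert sum(layout.values()) == per_node

print(f"{total_data_partitions} data partitions in total, {per_node} per node")
print(f"RAID group size {raid_size}, {layout['spare']} spare partition per node")
```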

The ONTAP version is 9.11.

Hey yxmretk, neither is practical as a config for an A250 with 18 disks, and I think you're forgetting ADPv2 (Root-Data-Data, https://docs.netapp.com/us-en/ontap/concepts/root-data-partitioning-concept.html). I'll also note that "disk" count is (a lot) less of a concern on AFF than on FAS/spinning drives.

For an A250 with 7.6TB x 18:

Do a default deploy and you'll have 2 data aggrs, one per node (as well as 2 root aggrs, one per node).

Each data aggr will be 46.96TiB with 17 disks (15 data, 2 parity).
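As a quick sanity check against the workloads listed in the original question, here is a sketch of the arithmetic. It takes the 46.96TiB aggregate size at face value; the per-partition figure is just that size divided by the 15 data partitions, so treat it as an average usable contribution and not the physical partition size. Snapshot space is not included because it is still undecided.

```python
# Capacity check: default two-aggregate layout vs. the planned workloads.
# Assumption: 46.96 TiB per data aggregate, as quoted in the reply above.

AGGR_TIB = 46.96        # usable size of one data aggregate
DATA_PARTITIONS = 15    # data partitions per aggregate (parity excluded)
AGGREGATES = 2          # one data aggregate per node

# Workload sizes in TiB, taken from the original question.
workloads_tib = {
    "VMware datastore": 10,
    "file server": 20,
    "profile server": 1,
}

total_usable = AGGR_TIB * AGGREGATES
required = sum(workloads_tib.values())
headroom = total_usable - required
per_partition = AGGR_TIB / DATA_PARTITIONS

print(f"Usable across both aggregates: {total_usable:.2f} TiB")
print(f"Planned volumes:               {required} TiB")
print(f"Headroom before snapshots:     {headroom:.2f} TiB")
print(f"Average per data partition:    {per_partition:.2f} TiB")
```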

Thank you for your prompt reply.

I understand that both responses recommend the following:

RAID-DP, RAID size 17, 15D + 2P + 1 spare (per node)

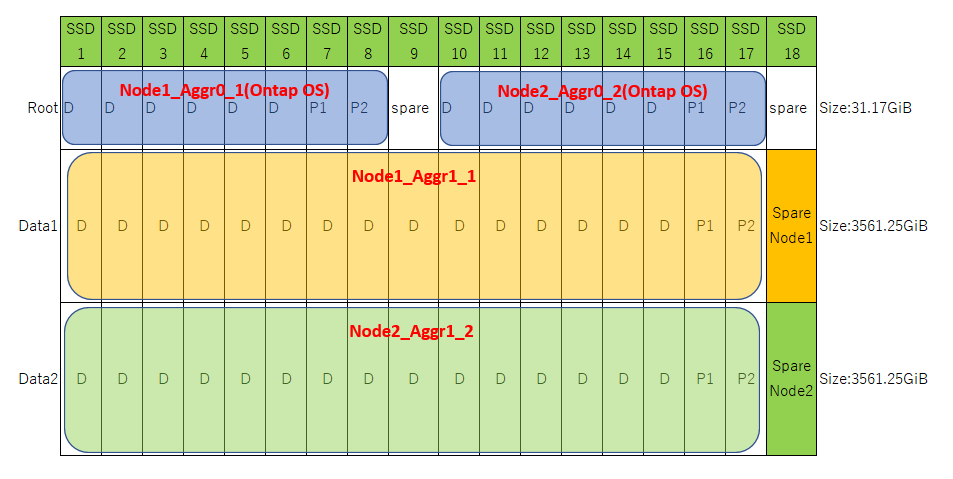

I drew it in the diagram. Is my understanding correct?

Since this is an AFF, I knew that it would use Root-Data-Data partitioning.

However, please let me confirm two points that I may be misunderstanding.

・Is it possible to create one RAID group by mixing Data1 and Data2 partitions?

(From your answers it seems possible, but...)

・In this configuration, a logical failure of one partition would switch over to a spare partition. But in the case of a physical failure of one SSD (which seems more likely), I think two partitions of the data aggregate would fail, and I/O would be served using parity, so performance would not be greatly affected. Is that right?

With SSDs, the degradation wouldn't be that large, would it?

Is it possible to create one RAID group by mixing Data1 and Data2 partitions? No, not in the same RG. Splitting it into two RGs on the same aggr doesn't give any real benefit either.

I wouldn't worry about any perf impact from an NVMe drive failing. Don't try to over-engineer this system; go with the defaults.

I realized I misunderstood...

Is this picture correct?

In this case, it is possible to create an aggregate with 15D + 2P + 1 spare without mixing Data1 and Data2 partitions.

Sorry for the confusion.

This is not only possible, it is the recommended layout. Please note that Fusion would lay it out this way, and it always shows the recommended disk layout, which helps with future questions like this. You don't have to lay things out manually. Fusion will do it for you.
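To illustrate why this layout also answers the earlier SSD-failure question, here is a toy model. It assumes that every SSD carries one Data1 partition belonging to node 1's aggregate and one Data2 partition belonging to node 2's aggregate, and that every failed SSD held active RAID members in both. The names `OWNER`, `losses`, and `survives` are hypothetical, and this is not how ONTAP itself tracks failures.

```python
# Toy model of whole-SSD failures under root-data-data partitioning.
# Assumption: Data1 partitions form node 1's aggregate, Data2 partitions
# form node 2's aggregate, and the two are never mixed in one RAID group.

RAID_DP_TOLERANCE = 2   # RAID-DP survives two failed members per RAID group
SSD_COUNT = 18

# Which aggregate each data partition type on an SSD belongs to.
OWNER = {"data1": "node1_aggr", "data2": "node2_aggr"}


def losses(failed_ssds):
    """Count how many partitions each aggregate loses for the failed SSDs."""
    lost = {aggr: 0 for aggr in OWNER.values()}
    for ssd in failed_ssds:
        if not 0 <= ssd < SSD_COUNT:
            raise ValueError(f"no such SSD: {ssd}")
        # A physical failure takes out both data partitions on that SSD.
        for aggr in OWNER.values():
            lost[aggr] += 1
    return lost


def survives(failed_ssds):
    """True if every aggregate stays within RAID-DP's failure tolerance."""
    return all(n <= RAID_DP_TOLERANCE for n in losses(failed_ssds).values())


for failed in ({3}, {3, 7}, {3, 7, 11}):
    print(sorted(failed), losses(failed), "survives:", survives(failed))
```

In this model a single SSD failure costs each aggregate one partition, not two partitions in the same RAID group, which is the case the spare partition on each node is there for.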

Thank you for your prompt and accurate reply.

I understand.

I will proceed with the design based on the answers I received.

I am glad that many people across the sea have helped me.

I love this community!