ONTAP Discussions

Is NetApp ONTAP Select certified to run VM workloads on an NFS datastore?

NetApp has multiple products that are used in virtualization environments, so I assume there is some vendor certification process before any of them is recommended for VM workloads. Is there a VMware validated design document stating that NFS datastores served by NetApp ONTAP Select (OTS) appliances can be used to run virtual machines? And even if such a document exists, is this solution practically suitable for data-center environments?

Are these SDS (software-defined storage) appliances capable of handling the block-level operations required by VMware ESXi hypervisors in a clustered environment, using protocols like iSCSI?

I doubt there's a need for certification of h/w.

OTS supports VMware ESXi 7.0 and that's in IMT.

If a customer buys a VMware-certified server, they have a supported environment.

> And even if such document exist, is this solution practically suitable in Data-center Environments ?

It depends.

If someone wants to use OTS to make a DIY A900, that won't happen.

If they want to provide NFS or iSCSI storage to dozens or hundreds of VMs, that can work fine, and multiple nodes can be deployed to scale that to larger numbers and hundreds of TBs. That's how people use it in real life.

OTS can consume external or internal block storage, so with external storage and sufficient CPU and memory resources it's not that different from entry to mid-range appliances.

Thanks for the reply, but I still have the doubts below.

Please find my responses to your points:

OTS supports VMware ESXi 7.0 and that's in IMT ==> The installation guide only mentions that it can run on the ESXi hypervisor; nowhere does it say that it supports a loopback NFS datastore mount on the same ESXi host on which it is running.

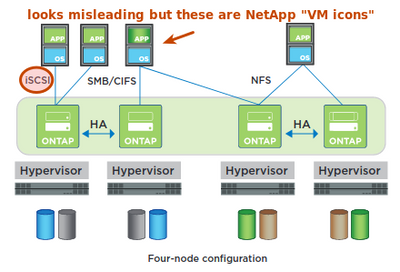

If a customer buys a VMware-certified server, they have a supported environment ==> I am not talking about hardware compatibility with ESXi here; I am asking about OTS compatibility with ESXi for NFS datastores. I am attaching the OTS datasheet for reference. The datasheet diagram only shows guest OS VMs using the file-level protocols. It does not say whether these protocols can be used directly by the hypervisor to host VM workloads.

It's not a "loopback" datastore; the use of switches (vSwitches) is mandatory.

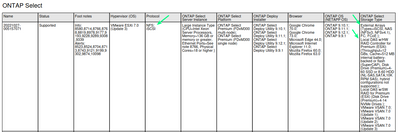

The IMT shows that iSCSI and NFS clients (VM and non-VM) are supported.

The data sheet uses the standard NetApp "VM icon", so the diagram may look misleading, but it indicates that these are VMs (on the same or different nodes; they can be on another vSphere cluster as well).

Thanks for the explanation, but could you please elaborate on what you mean by a non-VM client? Does non-VM mean external physical hosts that are not part of the vCenter infrastructure?

Also, I have gone through the ONTAP Select 9 installation and cluster deployment guide:

https://library.netapp.com/ecm/ecm_download_file/ECMLP2570999

In the above document, the planning section (page 12) describes only two options for deploying OTS nodes:

Dedicated versus collocated

From a high level, you can deploy ONTAP Select in two different ways regarding the workload on the hypervisor host server.

Dedicated deployment

With the dedicated deployment model, a single instance of ONTAP Select runs on the host server. No other significant processing runs on the same hypervisor host.

Collocated deployment

With the collocated deployment model, ONTAP Select shares the host with other workloads. Specifically, there are one or more additional virtual machines typically running computational applications. These compute workloads are tightly coupled with the local ONTAP Select cluster so that overall system performance can be improved. This hyper-converged infrastructure (HCI) model supports specialized applications and packaging requirements. As with the dedicated deployment model, each ONTAP Select virtual machine must run on a separate and dedicated hypervisor host.

I can also see that OTS nodes have CPU and memory reservations on the ESXi hosts. In that case, would you still recommend running OTS nodes alongside other VMs on the same physical ESXi host, sharing its compute resources? Wouldn't that affect the application VMs running next to an OTS appliance that acts as a virtual storage appliance with dedicated, reserved resources on the ESXi host?

OTS iSCSI disks or NFS shares are generic block devices and file shares, and OTS itself has no way of knowing whether a client is running on the same ESXi host, on a different host in the same cluster, or elsewhere (another cluster, or some physical server).

As far as I know, as long as IMT lists an OS as supported, it's supported as either a physical or a virtual OS (after all, IMT doesn't separately list a "VM" version of any OS as such).

Yes, reservations are required to avoid resource squeezes and (consequently) I/O timeouts and failures of VMs/apps using OTS.

But that doesn't mean other apps cannot have reservations, or that OTS must hog the entire ESXi node.

Let's say each ESXi host has 64 cores. We could do something like:

- 16 cores fully reserved for OTS VM

- 8 cores fully reserved for Oracle DB VM

- 4 Oracle app VMs - maybe give them 8 cores in a cluster-wide Resource Pool

- the rest (64-(16+8+8)) can use no reservation or some combination of the above

If DRS is enabled and there's a cluster resource pool, depending on DRS settings the apps might move around to other nodes if need be.

In this case, because OTS fully reserves just a fraction of CPU resources (16 out of 64), there's an impact in the sense that "everything else" can use up to 48 vCPUs, but those can be managed in terms of resource allocation, and for non-DB apps it's even OK to move them around on demand. If you allocate resources (full reservations or shares) to other workloads, they'll still work as they would on a 48 vCPU ESXi host (given that 16 vCPUs were allocated to OTS).
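
The reservation arithmetic above can be sketched in a few lines of Python. This is only an illustration of the example's numbers; the names `HOST_CORES`, `reservations`, and `unreserved_cores` are hypothetical and not part of any VMware or NetApp tooling.

```python
# Illustrative only: core budget for one 64-core ESXi host, using the
# numbers from the example above. All names here are hypothetical.
HOST_CORES = 64

# Cores fully reserved per VM or resource pool.
reservations = {
    "ots_vm": 16,
    "oracle_db_vm": 8,
    "oracle_app_pool": 8,  # 4 app VMs, counted against this host as in the example
}


def unreserved_cores(host_cores: int, reserved: dict[str, int]) -> int:
    """Return the cores left after all full reservations are honored."""
    total = sum(reserved.values())
    if total > host_cores:
        raise ValueError(f"over-committed: {total} reserved on {host_cores} cores")
    return host_cores - total


# Cores available to everything that is not OTS.
left_for_non_ots = HOST_CORES - reservations["ots_vm"]
# Cores that carry no reservation at all.
left_unreserved = unreserved_cores(HOST_CORES, reservations)

print(left_for_non_ots, left_unreserved)
```

The check for over-commitment is there because full reservations that add up to more than the host's cores cannot all be honored at once.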

The above explanation looks quite convincing, but I still believe there would be some issues when running an OTS cluster on an ESXi cluster. Let's take a scenario where we run a two-node OTS cluster on top of a two-node ESXi cluster.

Since we can have only one OTS node per ESXi host, and since the OTS system disk sits on the ESXi local datastore, both OTS nodes will be tied to their respective ESXi hosts. Even DRS cannot move these OTS nodes, because they are not deployed on a shared datastore.

As you may know, whenever we create an OTS cluster we also get something called RAID SyncMirror. Suppose I use hardware RAID instead of software RAID for the OTS deployment; in that case RAID operations are managed by the underlying physical hosts. If I use 10 disks (3 TB each) on one physical server and 10 on the other, the raw space is 3 x 10 = 30 TB per server. Now let's assume I have configured RAID 5 on the physical RAID controller, so the usable space is roughly 20 TB per server.

Of this 20 TB + 20 TB across both servers, RAID SyncMirror cuts it down to 40 TB / 2 = 20 TB usable. There is also some overhead from the NetApp file system (WAFL) after creating the aggregates; let's assume 10%, so the effective usable space would be limited to 18 TB for the entire two-node OTS cluster (excluding the ESXi OS and the OTS system disk, which would run on M.2 NVMe boot disks in a separate RAID 1 boot volume).

Now, if I carve out an NFS volume, share it across these two ESXi hosts, and create the two-node ESXi cluster after creating the OTS cluster, how will ESXi cluster HA work, assuming the OTS cluster is running in active/passive mode?
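
The capacity estimate above can be reproduced with a short Python sketch. It takes the post's figures as given (roughly 20 TB usable per server after hardware RAID 5, and a 10% WAFL overhead); both are assumptions from the post, not NetApp sizing rules. For comparison, a single 10-disk RAID 5 group nominally yields (10 - 1) x 3 TB = 27 TB, so the 20 TB figure is on the conservative side. The function names are hypothetical.

```python
def raid5_nominal_tb(disks: int, disk_tb: float) -> float:
    """Nominal RAID 5 capacity for one group: one disk's worth goes to parity."""
    if disks < 3:
        raise ValueError("RAID 5 needs at least 3 disks")
    return (disks - 1) * disk_tb


def ha_pair_usable_tb(per_node_tb: float, fs_overhead: float = 0.10) -> float:
    """Estimated usable capacity of a two-node mirrored pair.

    Every block is stored on both nodes, so the pooled capacity is halved.
    fs_overhead is the assumed file-system overhead (10% in the post).
    """
    pooled = 2 * per_node_tb
    mirrored = pooled / 2
    return mirrored * (1 - fs_overhead)


# Nominal RAID 5 figure for 10 x 3 TB disks on one server.
print(raid5_nominal_tb(10, 3))
# The post's estimate for the whole pair, starting from 20 TB per server.
print(round(ha_pair_usable_tb(20), 2))
```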

If you run a single OTS node (non-HA as far as ONTAP is concerned), then it cannot rely on VMware HA if it uses DAS (local) storage, but if it uses external storage (one of those posted in the IMT screenshot above) then VMware HA can restart it (so unplanned downtime looks like a storage crash or reboot).

If ONTAP HA is required, then data is mirrored across the network to the partner, as explained in the OTS docs (second quote below). The HA partner can be located remotely (I haven't pasted that link here, but you can find it in the MetroCluster SDS section).

Yes, the native HA approach leaves you with 50% or less of the capacity as usable: approximately 50% of what is "visible to OTS" if h/w RAID is used on DAS/external storage, and less than 50% if ONTAP RAID is used (which is what you would do with JBOD storage, for example). The overhead is high compared to AFF appliances, but on the other hand one can use commodity storage parts.

--

ONTAP Select breaks up the underlying attached storage into equal-sized virtual disks, each not exceeding 16TB. If the ONTAP Select node is part of an HA pair, a minimum of two virtual disks are created on each cluster node and assigned to the local and mirror plex to be used within a mirrored aggregate.

https://docs.netapp.com/us-en/ontap-select/concept_stor_hwraid_local.html#virtual-disk-provisioning

The ONTAP HA model is built on the concept of HA partners. ONTAP Select extends this architecture into the nonshared commodity server world by using the RAID SyncMirror (RSM) functionality that is present in ONTAP to replicate data blocks between cluster nodes, providing two copies of user data spread across an HA pair.

https://docs.netapp.com/us-en/ontap-select/concept_ha_mirroring.html
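
As a rough illustration of the virtual-disk provisioning rule quoted above, the Python sketch below splits a node's attached capacity into equal-sized virtual disks of at most 16 TB, with at least two disks when the node is in an HA pair. This is not ONTAP Select's actual provisioning algorithm, which the quote does not spell out; the function name and the choice of the smallest disk count that satisfies the limits are assumptions.

```python
import math

MAX_VDISK_TB = 16  # per-disk ceiling from the quoted documentation


def split_into_vdisks(capacity_tb: float, ha_pair: bool) -> tuple[int, float]:
    """Return (count, size_tb) for equal-sized virtual disks.

    Assumption: use the fewest disks that keep each one at or below 16 TB,
    and at least two disks on an HA node (local plex and mirror plex).
    """
    if capacity_tb <= 0:
        raise ValueError("capacity must be positive")
    min_disks = 2 if ha_pair else 1
    count = max(min_disks, math.ceil(capacity_tb / MAX_VDISK_TB))
    return count, capacity_tb / count


# Example: 20 TB of attached storage on a node that is part of an HA pair.
count, size_tb = split_into_vdisks(20, ha_pair=True)
print(count, size_tb)
```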

Thanks for the clarification.

So, to summarize: for the ESXi cluster to work, we have to use native HA in OTS with a combination of JBOD and software RAID. This will not impact ESXi cluster HA functionality, because the NFS share will be treated as external storage: ESXi will have no visibility of the underlying disks, which will be mapped directly to the OTS nodes hosted on the ESXi hypervisor using passthrough / VM DirectPath I/O.

Also, I have read in the best practices document that large OTS nodes only work with software RAID and that there is no hardware RAID option.

Right, for larger nodes ONTAP's own (s/w) RAID is mandated. Alternatively, use smaller OTS nodes with h/w RAID; the main difference is that you end up with more, smaller nodes and/or more namespaces.

If you have 3 ESXi nodes, you can run 2 big OTS just fine, but you can't run 4 smaller OTS nodes on 3 ESXi in the same balanced way.

Or, running 2 big OTS nodes on two big ESXi hosts may be more or less attractive than running 4 smaller OTS nodes on four smaller ESXi hosts, depending on the situation.

If you want ONTAP HA that works very similarly to ONTAP dual-controller appliances, then OTS should be deployed in HA pairs. There are users who run them that way (even stretched, in a MetroCluster SDS configuration), but there are also users who run singleton OTS VMs with disks on an external FC target; they save cost and do not target the same level of uptime that ONTAP HA pairs provide, which may be okay for non-critical workloads that can't justify the cost of HA.