New Cluster creation fails on FAS8060

Hi, I have a single-chassis, dual-controller FAS8060 that I am trying to create a brand new cluster on. I've done this plenty of times, and this is something I've never seen. Whenever the creation process starts, I get the following error:

Trying to create cluster again as previous attempt failed. Error: Unused cluster ports exist in the cluster. One or more ports may not be in the "healthy" state, or may be incorrectly assigned to the "Cluster" Ipspace. Run "network port show -ipspace Cluster" to view the cluster ports. Correct any issues, and then try the command again.

I've been researching this and have checked everything that could possibly be the issue; each item was either already set up correctly or I have corrected it. All the ports seem to be fine, except that the health column is blank for every port where it should say healthy or degraded. Also, this was previously a dual-chassis unit that I was asked to consolidate into a single-chassis unit. I have already confirmed that the psm-cc-config? variable is set to true and that the ha-config on both nodes in maintenance mode is set to HA. Also, just to add, this is a switchless unit, which I was able to set on both nodes at the advanced privilege level. Has anyone ever seen this problem before? I can't find anything like it anywhere in my searching other than the couple of things I listed. Sorry for the long post, but this is driving me crazy, especially because I know it's probably something simple I'm missing.

Thanks

Are the e0M ports connected and up?

Yep, they are. I also have an Ethernet port connected for cluster management, for when I'm able to set it up, as well as the interconnect ports e0a and e0c on each controller.

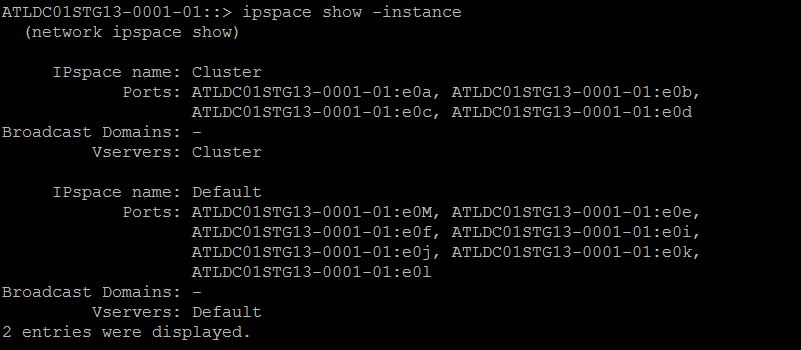

Can you post the output of the following:

net port show

ipspace show -instance

broadcast-domain show

And just to confirm: has each controller been re-initialized (or is it factory new), and are you running cluster setup on the first node?

Yes, both were re-initialized with a fresh load of 9.5P8, and this is the cluster setup on the first node. The 2nd node is at the cluster setup wizard screen. Below are screenshots of the output of the first 2 commands from the first node, but the 3rd command isn't recognized, even in advanced mode. Thanks for your help, I really appreciate it. I won't have constant access to this system until Monday morning, but I will provide any information that I can during the weekend. Also, not sure if it matters, but this system was set up using option 9 from the boot menu, allowing the use of whole disks and disabling ADP. Thanks again for your help.

It's probably complaining about e0b and e0d being down. I would either cable them up and have 4 cluster LIFs per controller, or remove them from the Cluster ipspace on both controllers. If you have the cables, just cabling them up is easier, but if you need those ports for data later, let me know and I can point you to the commands to remove them and clean it up.
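As a rough illustration of the check suggested above, the following Python sketch picks out ports that sit in the Cluster ipspace while their link is down. It assumes the port list has been copied by hand from `network port show`; the `Port` record, the `unused_cluster_ports` helper, the node name `node-01`, and the sample values are all hypothetical and are not output from this system.

```python
from typing import NamedTuple


class Port(NamedTuple):
    node: str
    name: str
    ipspace: str
    link: str  # "up" or "down", as transcribed from `network port show`


def unused_cluster_ports(ports):
    """Return ports assigned to the Cluster ipspace whose link is not up."""
    return [p for p in ports if p.ipspace == "Cluster" and p.link != "up"]


# Hypothetical inventory for one node (illustrative values only).
ports = [
    Port("node-01", "e0a", "Cluster", "up"),
    Port("node-01", "e0b", "Cluster", "down"),
    Port("node-01", "e0c", "Cluster", "up"),
    Port("node-01", "e0d", "Cluster", "down"),
    Port("node-01", "e0M", "Default", "up"),
]

for p in unused_cluster_ports(ports):
    print(f"{p.node}:{p.name} is in the Cluster ipspace but its link is {p.link}")
```

Any port the sketch flags would be a candidate for either cabling up or moving out of the Cluster ipspace.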

The reason I asked about e0M first is that this can happen if you're trying to create a cluster without e0M being up. (looping them together is a good trick!)

The last one is a valid command, but it's probably not recognized from where you are in the cluster setup.

I have tried it multiple times with all 4 of them cabled up, and with just e0a & e0c, as well as with FC loopbacks in the other slots. Any chance this has something to do with combining a dual-chassis unit into a single-chassis, dual-controller unit? I've done tons of research and set everything up how it should be, but I just feel like I'm missing something. And I can't find that specific error anywhere online. Really strange.

Hi,

It's strange; normally a port's health status is either 'healthy' or 'degraded'. It could be related to what you already suspect.

Could you try these steps to change the cluster ports (e0b & e0d) to '-role data'?

1) Exit the setup wizard.

2) ::> network port modify -node local -port <e0b> -role data

::> network port modify -node local -port <e0d> -role data

3) Run setup again:

::> cluster setup

Check whether the setup gets past that error.

Thanks!

Thanks for the replies. I won't be at the system until Monday morning. I may be able to remote into it but I'm not sure. I'll definitely give all your steps a try and report back. Thanks to all for the replies.

Hi,

Update for you: I spun up a new ONTAP simulator and discovered that until the cluster is formed, the port's health status is not updated (a dash '-' is displayed, as seen in the attached pic). Hence, your situation is normal, and I don't think it has anything to do with the chassis, so we can rule that out.

Also, the '-role' switch I mentioned in the previous commands does not apply.

Please try these commands:

Step 1:

::> network port modify -node local -port e0b -ipspace Default

::> network port modify -node local -port e0d -ipspace Default

I believe that when you run cluster setup now, e0b & e0d will not participate in it.

Step 2:

::> cluster setup
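For anyone repeating this on several ports or nodes, the command lines can be generated rather than typed. This is only a minimal sketch: it builds the same command strings shown above and does not connect to or run anything on the system. The helper name `move_ports_commands` is hypothetical.

```python
def move_ports_commands(ports, node="local", ipspace="Default"):
    """Build one `network port modify` line per port, as in the steps above."""
    return [
        f"network port modify -node {node} -port {port} -ipspace {ipspace}"
        for port in ports
    ]


# Step 1 for e0b and e0d, followed by Step 2.
commands = move_ports_commands(["e0b", "e0d"]) + ["cluster setup"]
print("\n".join(commands))
```

The printed lines can then be pasted at the `::>` prompt one at a time.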

Thanks!

Thank you so much. That seems to have done the trick; at least it did create the cluster, and I'm now up to the part where you add the license keys. Do you think the same thing will need to be done to add the 2nd node to the cluster, or should I just go ahead and set it up like that anyway so the ipspace assignments are the same on both nodes? Thanks again for your help. I've never experienced this, and it was driving me absolutely crazy. I'll follow up when completed or if, god forbid, there are any other issues.

Thanks

They will need to match, so probably, yes. Check to see if it also wants e0b and e0d as cluster ports (I'm guessing yes).
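One way to picture the "need to match" check is to compare the set of Cluster ipspace ports on each node. The Python sketch below is illustrative only: the node names `node-01` and `node-02`, the port sets, and the `cluster_port_mismatch` helper are hypothetical, and the sets would have to be filled in by hand from `network port show -ipspace Cluster` on each node.

```python
def cluster_port_mismatch(ports_by_node):
    """Map each node to the cluster ports it lacks relative to the other nodes."""
    all_ports = set().union(*ports_by_node.values())
    return {
        node: sorted(all_ports - set(ports))
        for node, ports in ports_by_node.items()
        if all_ports - set(ports)
    }


# Hypothetical state: the first node was already fixed, the second was not.
ports_by_node = {
    "node-01": {"e0a", "e0c"},
    "node-02": {"e0a", "e0b", "e0c", "e0d"},
}
print(cluster_port_mismatch(ports_by_node))
```

An empty result would mean both nodes list the same cluster ports; a non-empty one names the ports that differ, which here would be the ones to move to the Default ipspace on the second node.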

Thanks. I know they were labeled as Cluster ports on the 2nd node as well when I was doing my initial troubleshooting on this system. Thanks again to everyone for all the replies and help; it is very much appreciated.

Another tip when creating the cluster:

If you know which ports are good, then when you run cluster setup and get the question about "are these interfaces ok", just say no and list the ones that are good. Since it thinks the other two ports are down, it fails. You can always add those cluster ports later.

Cool, thanks for the tip. It was a really strange situation, especially since I had all 4 of them set up as interconnect ports, which I found was actually recommended by NetApp on a page I came across while troubleshooting the issue. I usually only see e0a & e0c used on this type of system.

Well, I now have the cluster up and running with both nodes and no current issues. Thanks again to everyone for the help.