ONTAP Discussions

Unpartition drives on AFF700


Can someone let me know how to unpartition drives on an AFF700?

I want to use those drives in the other AFF700 system.


Decommissioning an A700 and want to use the shelves on another?


Hi - I just want to unpartition a couple of drives and use them in the other AFF700 system to replace the failed disks.

The system on which I want to unpartition has no data on it, except the root volume & its LS volume.


When removing "just a couple of disks", make sure that the A700 still has the minimum it needs to boot/function and that you leave enough spares (check hwu.netapp.com). Also make sure you're not impacting existing aggrs.

There is an unpartition command in Maint Mode; that's typically where I do it from.
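The "leave enough spares" check above can be sketched as a quick sanity calculation before pulling anything. This is only a rough illustration: the function name and all the numbers are hypothetical, it counts whole drives rather than individual root/data partitions, and the real minimums for your platform and ONTAP version have to come from hwu.netapp.com.

```python
def can_remove_drives(spare_count: int, drives_to_remove: int, min_spares: int) -> bool:
    """Return True if removing the drives still leaves at least min_spares spares.

    All three values are whole-drive counts supplied by the caller; nothing
    here queries a real system.
    """
    if spare_count < 0 or drives_to_remove < 0 or min_spares < 0:
        raise ValueError("counts must be non-negative")
    return spare_count - drives_to_remove >= min_spares


# Placeholder numbers for illustration only (assumptions, not platform limits).
print(can_remove_drives(spare_count=3, drives_to_remove=2, min_spares=1))
print(can_remove_drives(spare_count=2, drives_to_remove=2, min_spares=1))
```

With these placeholder values the first call passes and the second fails, because removing both drives would leave no spare at all.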


I pulled out 1 drive & immediately received 2 alerts: one said the cluster lacks spares and the other said the RAID status is reconstructing. Then we put the drive back.

I have 2 AFF nodes & they have 2 aggregates.

Can you help me with the steps & commands?


Sorry, I thought it was understood that all parts of the drive need to be unowned before you can remove partitions. That's what I meant by "make sure you're not impacting existing aggrs."

You will probably have to delete any data aggrs, and then remove the drives that contain root spares.

How many drives total on the A700?
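To make the "all parts of the drive need to be unowned" rule concrete, here is a minimal sketch of that precondition as a check over a disk's partitions. The `Partition` and `Disk` classes, the field names, and the disk, node and aggregate names are all hypothetical and only model the idea; this does not talk to ONTAP or reproduce any ONTAP output.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Partition:
    name: str                        # e.g. "root", "data1", "data2"
    owner: Optional[str] = None      # owning node, or None if unowned
    aggregate: Optional[str] = None  # aggregate using it, or None if not in one


@dataclass
class Disk:
    name: str
    partitions: List[Partition]


def blockers(disk: Disk) -> List[str]:
    """List the reasons this disk is not yet ready to be unpartitioned."""
    reasons = []
    for part in disk.partitions:
        if part.aggregate is not None:
            reasons.append(f"{part.name} is in aggregate {part.aggregate}")
        elif part.owner is not None:
            reasons.append(f"{part.name} is still owned by {part.owner}")
    return reasons


def can_unpartition(disk: Disk) -> bool:
    """True only when no partition is owned or part of an aggregate."""
    return not blockers(disk)


# Hypothetical example: one data partition is still in an aggregate and the
# other two partitions are owned spares, so the disk is not ready.
busy = Disk("disk_a", [
    Partition("root", owner="node1"),
    Partition("data1", owner="node1", aggregate="aggr1"),
    Partition("data2", owner="node2"),
])
free = Disk("disk_b", [Partition("root"), Partition("data1"), Partition("data2")])

print(can_unpartition(busy), blockers(busy))
print(can_unpartition(free))
```

The point of returning the list of blockers rather than just a yes/no is that each entry maps to something you would have to clear first, such as deleting the data aggregate or removing ownership of a spare partition.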


I ran disk show on the cluster; below is the info I got:

Info: This cluster has partitioned disks. To get a complete list of spare disk capacity use "storage aggregate show-spare-disks".

All are shared

node1 - 24 disks

node2 - 24 disks

To check spares, I ran the sysconfig -r command on each individual node. Below are the observations.

node-1 sysconfig -r

Pool1 spare disks (empty)

Pool0 spare disks -> 9

node-2 sysconfig -r

Pool1 spare disks (empty)

Pool0 spare disks -> 18


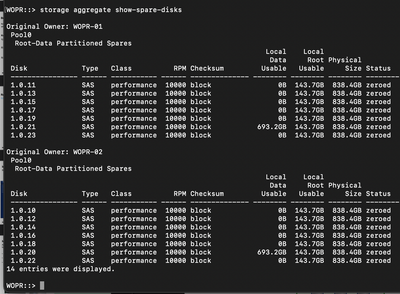

Storage aggr show-spare-disks should list out what spares you have.

here's an example from my home lab:

Maybe just wiping the system and removing the drives and then recreating the cluster would be easier?


So I have a 4-node cluster: 2 AFF700 & 2 FAS9000. When you say recreating the cluster, will that impact the FAS9000 nodes?

I have no data on the AFF700, but a lot of data is on the FAS.


Oh, that does complicate things a bit more. Ignore the wipe comment then. You'd have to remove it from the 4-node cluster correctly first.

I would engage your NetApp partner at this point. There are lots of parts to this that keep popping up, and it's probably safer to do that.

To note, without seeing your spare output... I *think* best case, assuming that the A700 was deployed with 24 drives, you'd only be able to remove 2 drives total.


I see 9 spares on node 1 & 18 spares on node 2.

I was searching for this on Google for quite some time but was confused, so I started posting here.

Thanks a lot SpindleNinja for your quick response on my queries.

Appreciate it.

Last option would be contacting the Account team.

Thanks again.


But what type of spares though, Root or Data? That's also something to factor in.

And no problem. They'd have a more direct view of the system.


This is what I see:

Original Owner: node 1

Pool0

Root-Data1-Data2 Partitioned Spares - 9 disks

Original Owner: node 2

Pool0

Root-Data1-Data2 Partitioned Spares - 18 disks