Active IQ Unified Manager Discussions

Re: Dynamic Secondary Volume Resize not Working


I have a customer who is trying to add a volume to a dataset that uses QSM for backups. When he adds it to the dataset, the conformance check indicates that it will create a new volume for this relationship on another aggregate in the resource pool, instead of putting it in the same secondary volume as the other source volume in the dataset. Here is some config info:

Dataset:

SourceVol1=2TB, 495GB used, 0GB snapreserve

Resource pool has 7 aggregates

SourceVol1's aggregate has 3.6TB of space available

SourceVol2, the volume to be added, is 2TB, 450GB used, 0GB snapreserve

When I try to add SourceVol2 it wants to provision a new 2.64TB volume on another aggregate in the resource pool.

Why is it doing this? I want it to put it into the same volume as SourceVol1's backup. Dynamic secondary resize is enabled. dpMaxFanInRatio is 100.

I have enough space in that aggregate. And yes, I'm adding it as a qtree, /vol/SourceVol2/-, not a volume.

So, we manually increased the size of SourceVol1's destination volume, and then it behaves as we want, putting the backup into the same volume as SourceVol1's.

It looks as if dpDynamicSecondarySizing is not working for some reason.

---

Hi Todd,

Dynamic Secondary Sizing / Fan-In happens only when the following conditions are satisfied:

- Maxrelspersecondaryvolume is not exceeded (50 by default).

- PlatformDedupeLimit: if the volume is dedupe-enabled, the secondary volume, after being resized because of multiple source volumes, must still be within the limit.

- Volume language: if the source volume languages are different, they cannot be fanned in to the same destination volume, as this causes problems during restore.
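The three conditions above can be read as a simple eligibility check. The sketch below is only an illustration under stated assumptions: the `SecondaryVolume` fields, the `can_fan_in` function, and the limit values passed in are hypothetical and do not reflect Protection Manager's actual code or any NetApp API; only the limit names and the default of 50 come from the post. It also assumes that adding the new relationship must not push the count past the limit.

```python
from dataclasses import dataclass

# Hypothetical model of a candidate secondary volume (illustrative fields only).
@dataclass
class SecondaryVolume:
    relationship_count: int    # relationships already landing in this volume
    language: str              # volume language, e.g. "en_US"
    dedupe_enabled: bool
    projected_size_gb: float   # size after resizing for the extra source

MAX_RELS_PER_SECONDARY_VOLUME = 50  # default quoted in the post

def can_fan_in(vol: SecondaryVolume, source_language: str,
               platform_dedupe_limit_gb: float) -> list[str]:
    """Return the reasons that block fan-in; an empty list means eligible."""
    reasons = []
    # Assumption: the new relationship itself counts toward the limit.
    if vol.relationship_count + 1 > MAX_RELS_PER_SECONDARY_VOLUME:
        reasons.append("Maxrelspersecondaryvolume exceeded")
    # Only dedupe-enabled volumes are bound by the platform dedupe limit.
    if vol.dedupe_enabled and vol.projected_size_gb > platform_dedupe_limit_gb:
        reasons.append("PlatformDedupeLimit exceeded")
    # Mixed volume languages are not fanned in (restore problems).
    if vol.language != source_language:
        reasons.append("volume language mismatch")
    return reasons

vol = SecondaryVolume(relationship_count=1, language="en_US",
                      dedupe_enabled=False, projected_size_gb=5280.0)
print(can_fan_in(vol, "en_US", platform_dedupe_limit_gb=16384.0))  # []
```

Returning a list of reasons rather than a bare boolean mirrors what extra logging would ideally tell you: which condition, if any, ruled out the existing volume.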

DSS is calculated as follows.

Protection Manager (PM) uses DSS (it is disabled on installations upgraded from 3.7).

Projected Secondary Size = 1.1 * maximum [(2.0 * Primary Current Used Size), (1.2 * Primary Volume Total Size)]

1.1 is a fixed value

2.0 is set by an option A

1.2 is set by an option B

If dpMaxFanInRatio is > 1, the primary volume sizes are replaced by the sum of all volumes fanning into the secondary volume.

Rule of thumb

Volume used < 60%: 1.32x source volume total size

Volume used > 60%: 2.2x source volume used size

Option Name: dpDynamicSecondarySizing
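The formula and rule of thumb above can be checked with a few lines of arithmetic. This is a minimal sketch of the formula exactly as stated in this post, not Protection Manager's implementation; the names of options A and B are not given, so the factors are plain constants here, and `projected_secondary_size` is a hypothetical helper. The usage reads the first post's volumes as 2 TB total each (which is what the proposed 2.64 TB volume implies) and uses TB throughout.

```python
# Factors as described in the post; the option names behind A and B are not
# given there, so they are simply constants here.
FIXED_FACTOR = 1.1   # "1.1 is a fixed value"
USED_FACTOR = 2.0    # value set by "option A"
TOTAL_FACTOR = 1.2   # value set by "option B"

def projected_secondary_size(primaries: list[tuple[float, float]]) -> float:
    """Projected secondary size for one or more (used, total) primaries.

    With dpMaxFanInRatio > 1, used and total are summed over every primary
    fanning into the secondary volume before the formula is applied.
    Any consistent unit works (TB in the examples below).
    """
    used = sum(u for u, _ in primaries)
    total = sum(t for _, t in primaries)
    return FIXED_FACTOR * max(USED_FACTOR * used, TOTAL_FACTOR * total)

# SourceVol2 alone: 450 GB used of 2 TB, i.e. under 60% used -> 1.32x total.
print(f"{projected_secondary_size([(0.45, 2.0)]):.2f} TB")                # 2.64 TB
# SourceVol1 and SourceVol2 fanned into one secondary volume.
print(f"{projected_secondary_size([(0.495, 2.0), (0.45, 2.0)]):.2f} TB")  # 5.28 TB
```

The 2.64 TB result matches the new volume conformance proposed for SourceVol2 on its own, while sharing SourceVol1's secondary would, under this formula, require that volume to be projected at about 5.28 TB; whether that growth fits depends on the secondary's current size, which the thread does not give.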

Hope this helps

Regards

adai

---

Yup. All the criteria are met, but it is still attempting to provision a new volume for the qtree on a different aggregate in the resource pool, even though there is plenty of room in the aggregate where we want it to go. However, if we manually resize the volume in which we want this new QSM relationship to reside before we conform, it puts it there upon conforming. There is no good reason to provision a new volume, as it wants to do. For some reason it will not resize automatically.

---

Hi Todd,

The other reason could be that increasing (resizing) the volume would exceed the overcommitment threshold of the containing aggregate, which would prevent the volume from being resized. Adding some extra logging would tell exactly why it is creating a new volume. Also, what is the max fan-in ratio? Would a WebEx be possible to find the root cause?
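To make the overcommitment point concrete, here is a minimal sketch, assuming aggregate overcommitment is measured as the sum of the volume sizes in an aggregate divided by the aggregate size. The function name, the threshold value, and all numbers are hypothetical. It illustrates why a resize can be refused even when the aggregate still shows free space: with thin-provisioned volumes (space guarantee none), committed space and free space move independently.

```python
def resize_within_overcommit(aggr_size: float, committed: float,
                             growth: float, threshold_pct: float) -> bool:
    """True if growing a volume by `growth` keeps the aggregate's committed
    space (sum of its volume sizes) at or below `threshold_pct` of its size.

    All sizes must use the same unit (TB below).
    """
    return (committed + growth) / aggr_size * 100 <= threshold_pct

# Hypothetical 10 TB aggregate whose thin-provisioned volumes already total
# 9 TB of size, even though only part of that space is actually used.
print(resize_within_overcommit(10.0, 9.0, 2.64, 100.0))  # False (116.4%)
print(resize_within_overcommit(10.0, 6.0, 2.64, 100.0))  # True (86.4%)
```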

Regards

adai

---

So, looking at the overcommit thresholds, it does not appear that this is the issue. Also, we have observed behavior where we have 3 qtrees in a volume to SnapMirror, and DPM attempts to create 1 volume with 2 qtrees and another volume with 1 qtree, all in the same aggregate on the destination. So it does not seem to point to a space issue on the aggregate per se. Do we have the logic for this provisioning process documented somewhere? Maybe we can divine from that where and why it is doing this.

Also, dpMaxFanInRatio=100

thanks!

---

Hi Todd,

Let's do a quick WebEx to see what's happening. BTW, what version of DFM are you running?

Regards

adai

---

I would, but it is a secured site, so there is no way to do it...

---

Is it possible to run with testpoints and return the logs? Or is even that not possible? BTW, what version of DFM are you currently using?

Regards

adai

---

Hi

I have the exact same issue. Was there a solution?

Thanks

---

What is your issue? What version of DFM/OCUM are you running? What type of relationship are you trying to create? What is the error message you get?

Regards

adai

---

Hi

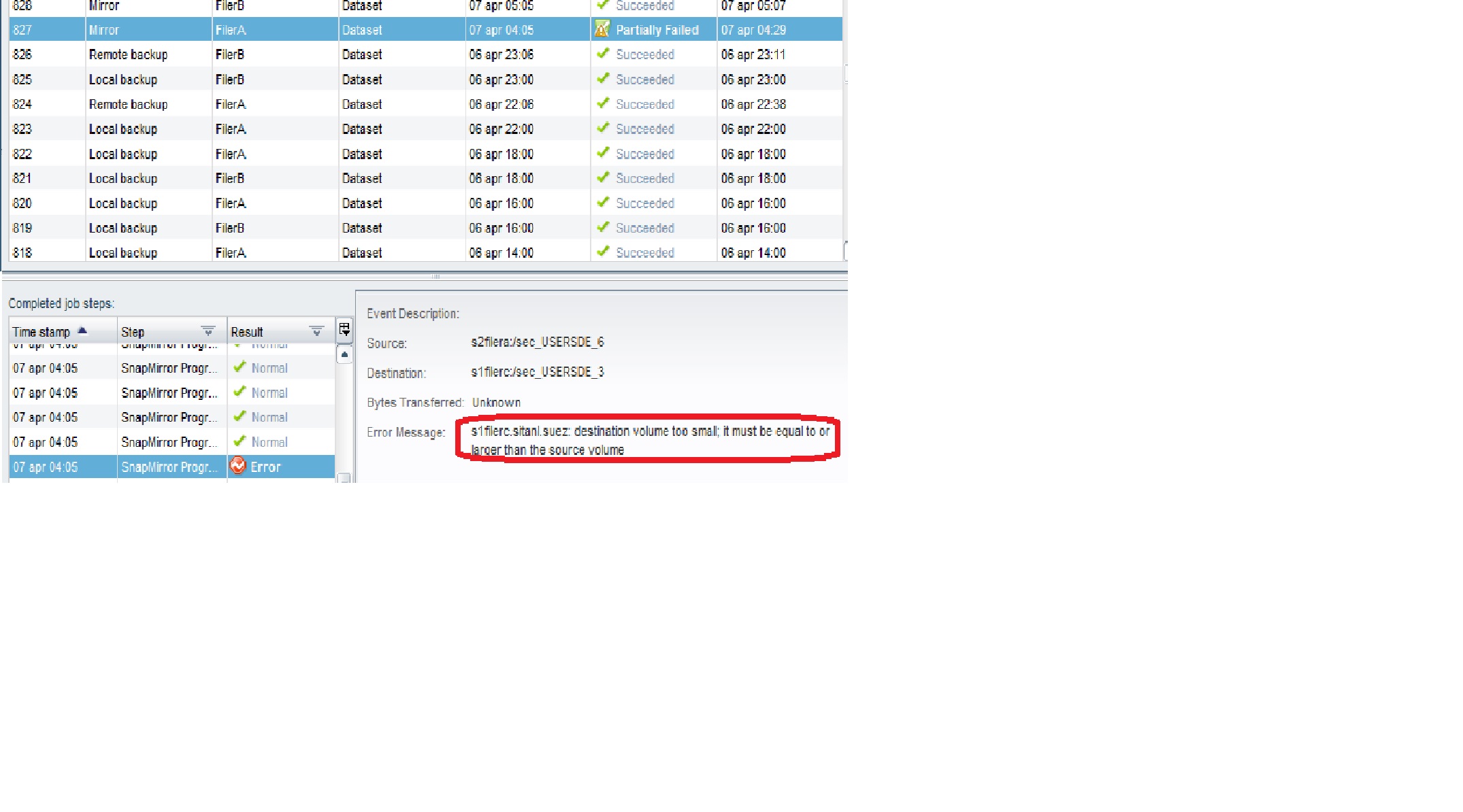

We use DFM version 5.1.0.15008 (5.1), and what we are seeing is that volumes in the dataset are not automatically enlarged by Protection Manager.

This is the setup:

Primary -> backup -> mirror

Volumes (SnapMirrored and SnapVaulted) between primary and backup are automatically enlarged via DSS (you see the message in the job log), but when mirroring from backup to the mirror, it only says that the volume is too small (s1filerc.sitanl.suez: destination volume too small; it must be equal to or larger than the source volume) without taking action.

If, however, you enlarge it manually with vol size <volume> +xg, the volume simply enlarges and the error goes away.

---

We run 5.1 and had these problems as well. DSS tried to resize volumes to a size the machine can't handle, so we disabled it and resize manually.

One workaround was to create a big dummy volume and fill up the Aggregate to a size dss could handle.

---

Hi Thomas

Well, that's indeed a workaround. Nevertheless, our integrator is looking into it with NetApp, and we are probably suffering from a known bug: http://support.netapp.com/NOW/cgi-bin/bol?Type=Detail&Display=677951 (Documented Issue 677951, which is funny because it doesn't contain any info). It's not certain, though, so we are now running diagnostics with DFM and NetApp will investigate. I'll keep this thread updated with the findings.

Thanks!

---

Jan,

Most bug info isn't publicly available; there are internal notes, though. Hopefully they are working on a fix, but unfortunately I don't expect it to be included in 5.2.

---

Hi Thomas,

Can you please give the output of the following CLI command for the case that is not working?

dfpm dataset list -R <dataset-name-or-id>

Regards

adai

---

PS C:\Users\snapdrive> dfpm dataset list

Id Name Protection Policy Provisioning Policy Application Policy Storage Service

---------- --------------------------- --------------------------- ------------------- --------------------------- ---------------

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY

1125 CUSTOMER_FILESERVICE_USER CUSTOMER_FILESERVICE_POLICY

2076 CUSTOMER_VMDS_SAP CUSTOMER_VMDS_SnapVault_and_Mirror_POLICY CUSTOMER_VMDS_SAP_PROD CUSTOMER_VMDS_DEFAULT_BACKUP_SERVICE

2194 CUSTOMER_VMDS_STANDARD CUSTOMER_VMDS_SnapVault_and_Mirror_POLICY CUSTOMER_VMDS_STANDARD CUSTOMER_VMDS_DEFAULT_BACKUP_SERVICE

3231 SnapMgr_Exchange_EXSERVER CUSTOMER_Exchange_SnapVault_only

3371 SnapMgr_SQLServer_SQLSERVER CUSTOMER_Sharepoint_Snapvault_only

PS C:\Users\snapdrive> dfpm dataset list -R 1138

Id Name Protection Policy Provisioning Policy Relationship Id State Status Hours Source Destination

---------- --------------------------- --------------------------- ------------------- --------------- ------------ ------- ----- ---------------------------- ----------------------------

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6549 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share01 NETAPP03:/vol_VFSERVER_shares_2/qt_share01

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6550 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share03 NETAPP03:/vol_VFSERVER_shares_2/qt_share03

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6551 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share02 NETAPP03:/vol_VFSERVER_shares_2/qt_share02

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6552 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share04 NETAPP03:/vol_VFSERVER_shares_2/qt_share04

1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6611 snapmirrored idle 6.1 NETAPP03:/vol_VFSERVER_shares_2 GDFS0004:/vol_VFSERVER_shares_2

PS C:\Users\snapdrive>
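Listings like this show fan-in directly: several qtree relationships share one destination volume. A minimal sketch of grouping them, assuming each relationship row is on a single line (not wrapped by the console), starts with the numeric dataset Id, and ends with the Source and Destination columns. The helper names are hypothetical, and this is not an official dfpm output parser.

```python
from collections import defaultdict

def destination_volume(endpoint: str) -> str:
    """'HOST:/vol_x/qtree' -> 'HOST:/vol_x'; also handles 'HOST:/vol/x/qtree'."""
    host, _, path = endpoint.partition(":")
    parts = path.split("/")  # parts[0] is "" because path starts with "/"
    depth = 3 if len(parts) > 2 and parts[1] == "vol" else 2
    return f"{host}:{'/'.join(parts[:depth])}"

def fan_in_by_destination(listing: str) -> dict[str, list[str]]:
    """Group relationship sources by the destination volume they land in."""
    groups: dict[str, list[str]] = defaultdict(list)
    for line in listing.splitlines():
        fields = line.split()
        # Relationship rows start with the numeric dataset Id; the header,
        # dashed separator, and prompt lines do not, so they are skipped.
        if len(fields) < 3 or not fields[0].isdigit():
            continue
        source, destination = fields[-2], fields[-1]
        groups[destination_volume(destination)].append(source)
    return dict(groups)

SAMPLE = """\
1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6549 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share01 NETAPP03:/vol_VFSERVER_shares_2/qt_share01
1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6550 snapvaulted idle 11.0 VFSERVER:/vol_VFSERVER_shares/qt_share03 NETAPP03:/vol_VFSERVER_shares_2/qt_share03
1138 CUSTOMER_FILESERVICE_SHARES CUSTOMER_FILESERVICE_POLICY 6611 snapmirrored idle 6.1 NETAPP03:/vol_VFSERVER_shares_2 GDFS0004:/vol_VFSERVER_shares_2
"""

for volume, sources in fan_in_by_destination(SAMPLE).items():
    print(volume, len(sources))
# NETAPP03:/vol_VFSERVER_shares_2 2
# GDFS0004:/vol_VFSERVER_shares_2 1
```

Only the last two columns are used because the Provisioning Policy column can be empty, which shifts the positions of the earlier fields.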

---

Hi

Same problem here (have you received a solution from NetApp in the meantime?):

OCUM5.1

Primary-->Snapvault-->Snapmirror

- My Dataset

U:\>dfpm dataset list -R 96671

Id Name Protection Policy Provisioning Policy Relationship Id State Status Hours Source Destination

---------- --------------------------- --------------------------- ------------------- --------------- ------------ ------- ----- ---------------------------- ----------------------------

96671 Dataset1 Standard 96678 snapvaulted idle 9.9 vfilerprod:/vfilerprod_cifs_share2/- vfilerSV:/Standard_backup/cifs_share2

96671 Dataset1 Standard 96680 snapvaulted idle 9.9 vfilerprod:/vfilerprod_cifs_share1/- vfilerSV:/Standard_backup/cifs_share1

96671 Dataset1 Standard 96682 snapvaulted idle 9.9 vfilerprod:/vfilerprod_cifs_share2/dbbackuppf vfilerSV:/Standard_backup/dbbackuppf

96671 Dataset1 Standard 96684 snapvaulted idle 9.9 vfilerprod:/vfilerprod_cifs_share1/dbbackupab1 vfilerSV:/Standard_backup/dbbackupab1

96671 Dataset1 Standard 96688 snapmirrored idle 46.7 vfilerSV:/Standard_backup filerSM:/Standard_mirror

- Snapvault Volume Size reported by DFM Report

U:\>dfm report view volumes-capacity 96674

Object ID Volume Aggregate Storage Server Used Total Used (%)

--------- ----------------------------- --------- ------------------------------- ----------- ----------- --------

96674 Standard_backup aggr3 vfilerSV.domain 10256589560 16066582712 63.8

Totals 10256589560 16066582712 63.8

- Snapvault Volume Size on Controller

filerSV> df -V Standard_backup

Filesystem kbytes used avail capacity Mounted on

/vol/Standard_backup/ 16066582712 10256890328 5809692384 64% /vol/Standard_backup/

/vol/Standard_backup/.snapshot 0 8882897080 0 ---% /vol/Standard_backup/.snapshot

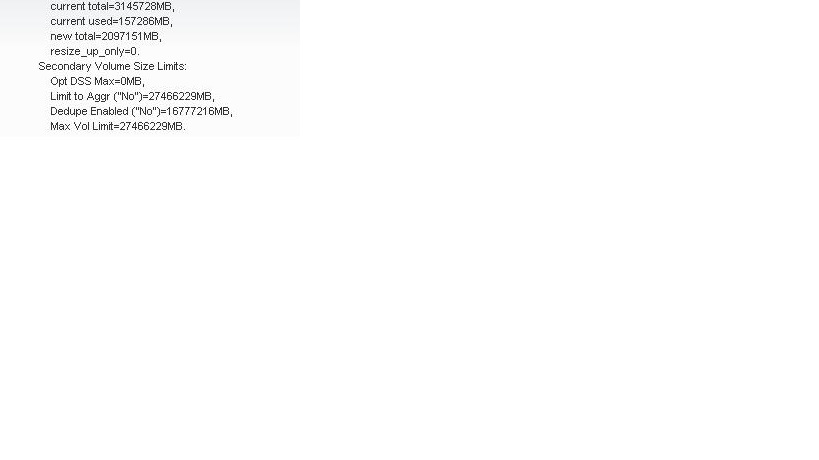

- The Remote Backup DSS job reports a current total size of 2TB (instead of 14TB). In the past, every job reported this 2TB and resized to 14TB, although on the controller the volume was already 14TB. In fact, DSS reads the wrong value of 2TB every time it runs.

- Snapmirror Volume Size on Controller

filerSM> df -V Standard_mirror

Filesystem kbytes used avail capacity Mounted on

/vol/Standard_mirror/ 15350455768 9670474332 5679981436 63% /vol/Standard_mirror/

/vol/Standard_mirror/.snapshot 0 8288095364 0 ---% /vol/Standard_mirror/.snapshot

- The Mirror Backup DSS job tries to resize to 2TB (I think because the wrong 2TB is reported as the current size by the Backup DSS job), and the resize fails. Then the SnapMirror job fails because the SnapMirror volume is smaller than the SnapVault volume: 16066582712 - 15350455768 = 716126944 KB (df reports kbytes), about 683 GB.

- We have 76 datasets (Oracle_Snapcreator/SMSQL (backup only)/CIFS/NFS), and this has been happening on only 1 dataset for a few weeks, and sporadically on 2 other datasets.
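The manual workaround mentioned earlier in the thread (vol size <volume> +xg) needs the shortfall in whole gigabytes. A minimal sketch of that arithmetic using the df -V figures above, assuming df reports kbytes and that the g suffix means 1024^3 bytes; `vol_size_increment_gb` is a hypothetical helper, and this computes only the minimum growth, with no extra headroom.

```python
import math

KB_PER_GB = 1024 * 1024  # df -V sizes are in kbytes; 1 GB = 1024 * 1024 KB

def vol_size_increment_gb(source_kb: int, destination_kb: int) -> int:
    """Whole GB the mirror destination must grow to be >= the source."""
    shortfall_kb = source_kb - destination_kb
    if shortfall_kb <= 0:
        return 0  # destination already large enough
    return math.ceil(shortfall_kb / KB_PER_GB)

source_kb = 16066582712       # /vol/Standard_backup/ (SnapMirror source)
destination_kb = 15350455768  # /vol/Standard_mirror/ (SnapMirror destination)
print(source_kb - destination_kb)  # 716126944
print(f"vol size Standard_mirror +{vol_size_increment_gb(source_kb, destination_kb)}g")
# vol size Standard_mirror +683g
```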

regards

Thomas

---

Thomas

What did you do before you got this error? What were you resizing: the primary, the secondary, etc.?

I am trying to get reproducible steps for this problem. I am an OnCommand developer trying to fix this issue.

Stephanie

---

Hello,

I'm not Thomas, but I had the same kind of issue on one volume last week (DR Mirror then Backup policy).

One secondary volume had an issue resizing (all other volumes in the dataset worked well), and then the Mirror job finished with "Some storage members for dataset still not found after running fsmon".

After that, all backup jobs for the dataset failed, even for volumes where SnapMirror went well. (First question: is this normal?)

What made me reply to your question is something I noticed in the screenshots above.

The value for the new total is exactly the same number that I saw in other screenshots in the thread. I don't know if it means something, but it seems like an enormous coincidence, or maybe it is a special value...

In our case, the backups had been running well for weeks/months. The day before, some qtrees were provisioned in the dataset (in the volume that failed) using Provisioning Manager, as had been done tens of times before.

But the night after, Protection Manager failed to resize. (I would also note that DFM said it would size down the mirror, but it failed because it actually needed to size up, since the customer had added new qtrees and the primary volumes were growing.)

The screenshot:

Regis

---

Hi Stephanie

Before this error happened, I did the following steps:

- Because of another issue, I removed the mirror nodes from the dataset.

- Provisioning Manager created new mirror volumes.

- Because the mirror size was calculated incorrectly, creation of the SnapMirror relationships failed.

- Via Hosts/Aggregate/Manage Space, I resized the backup node.

- After the resizing, the relationship was created successfully, but since then I have had the behavior described above.

- Since I resized the mirror node (via the controller CLI) to a big value (20TB), the SnapMirror update works and only the resize error occurs.

regards

Thomas

---

Hi,

I had to add some qtrees today and used Provisioning Manager. The exact same issue that I had before, and that Thomas described, occurred, with the same wrong value of 2097151MB in the job.

I will ask the customer to open a case. Is there already a BURT ID for this issue?

I would like to add that, maybe it's a coincidence, but the volume where it occurs is the single biggest volume we have provisioned so far (2994.4 GB total capacity), and it's the first volume where it occurs.
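The recurring 2097151MB value has a suggestive origin: it is exactly (2^31 - 1) KB expressed in whole MB, i.e. just under 2 TiB, which also matches the "2TB" Thomas saw. The sketch below only does that arithmetic. It shows the numbers are consistent with a size being clamped to a signed 32-bit KB value, but that is a hypothesis, not a confirmed cause (documented issue 677951, mentioned earlier in the thread, has no public details). `clamped_mb` is a hypothetical helper, and Regis's 2994.4 GB is assumed to mean 1024-based GB.

```python
INT32_MAX = 2**31 - 1  # largest signed 32-bit integer: 2147483647

def clamped_mb(size_kb: int) -> int:
    """Hypothetical: size in MB if the KB value were clamped to INT32_MAX."""
    return min(size_kb, INT32_MAX) // 1024

print(INT32_MAX // 1024)                      # 2097151, the value in the jobs
print(clamped_mb(16066582712))                # Thomas's volume (df kbytes): 2097151
print(clamped_mb(int(2994.4 * 1024 * 1024)))  # Regis's 2994.4 GB volume: 2097151
print(clamped_mb(1024 * 1024))                # a 1 GB volume is unaffected: 1024
```

Both affected volumes are larger than 2 TiB, which would fit the observation that the problem appeared on the biggest volume provisioned so far.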

Regis