ONTAP Discussions

Trying to wrap my head around how DNS entries are being created on our cluster

We have a cluster running ONTAP 9.6P2 with DNS servers configured:

    Oriole::> dns show
                                                        Name
    Vserver         Domains                             Servers
    --------------- ----------------------------------- ----------------
    Oriole          omni.domain.com                     xxx.yyy.zzz.252,
                                                        xxx.yyy.zzz.253
    oriole-svm      omni.domain.com                     xxx.yyy.zzz.252,
                                                        xxx.yyy.zzz.253
    2 entries were displayed.

    Oriole::>

We didn't realize that the cluster was configured to look up server names, so we would run the command below before adding a server to an export policy:

Oriole::> vserver services dns hosts create -vserver oriole-svm -address 172.22.15.6 -hostname servername

When I run "dns hosts show" I see lots of entries and have a couple of questions:

1) Why would there be entries in this list for servers that do not currently have (and have never had) an entry in our domain DNS? The example below is an entry for the MPLS router at a remote office. It has no entry on our DNS servers, and we did not manually add it to the cluster.

    Vserver    Address         Hostname        Aliases
    ---------- --------------- --------------- ----------------------
    oriole-svm xxx.yyy.zzz.114 mpls-rv-cl_side -

2) Why are there entries in this list that have both a "hostname" and an "Alias" when we always just added devices with a hostname? Is the cluster populating the alias field of a matching hostname based on what it finds on the DNS servers (in the code below, only the "hostname" was added to the cluster via the command above; how did the alias get populated)?

    Oriole::> dns hosts show
    Vserver    Address         Hostname        Aliases
    ---------- --------------- --------------- ----------------------
    oriole-svm xxx.yyy.zzz.134 app02           app02.domain.com

3) Is there a way to automatically purge entries from the host list of servers that don't have entries in our domain DNS?
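For question 3, one possible workaround (a sketch run from an admin host, not a built-in ONTAP feature) is to capture the `dns hosts show` output, test each hostname against the domain DNS, and print delete commands for manual review. The parser assumes one table row per line in the four-column layout shown above. The names `parse_hosts_show`, `find_unresolvable`, and `delete_commands` are hypothetical, and the `-vserver`/`-address` parameters of `vserver services dns hosts delete` are assumed to mirror the `create` command, so verify them on your release before running anything.

```python
import socket


def parse_hosts_show(text):
    """Parse 'dns hosts show' rows into (vserver, address, hostname, aliases)."""
    rows = []
    for line in text.splitlines():
        parts = line.split()
        # Data rows have at least four columns and an address-like second
        # column; this skips the prompt, header, dashes, and summary lines.
        if len(parts) < 4 or not ("." in parts[1] or ":" in parts[1]):
            continue
        vserver, address, hostname = parts[:3]
        aliases = [a.rstrip(",") for a in parts[3:] if a != "-"]
        rows.append((vserver, address, hostname, aliases))
    return rows


def default_resolver(name):
    """Return an address for name using the local resolver, or None."""
    try:
        return socket.gethostbyname(name)
    except OSError:
        return None


def find_unresolvable(rows, resolver=default_resolver):
    """Return entries whose hostname and aliases all fail to resolve."""
    stale = []
    for vserver, address, hostname, aliases in rows:
        if not any(resolver(name) for name in [hostname] + aliases):
            stale.append((vserver, address, hostname))
    return stale


def delete_commands(stale):
    """Build CLI commands for review (assumed syntax, not executed here)."""
    return [
        f"vserver services dns hosts delete -vserver {v} -address {a}"
        for v, a, _ in stale
    ]
```

The resolver is passed in as a parameter so the logic can be tried against a plain dictionary before it is pointed at real DNS. Note that the default resolver uses the admin host's DNS settings, which may differ from the servers configured on the cluster.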

Hi,

I guess your observation is correct and expected.

For example:

In my environment:

When I set up CIFS I added one DC, but when I ran vserver cifs domain discovered-servers show, it listed many servers (we have about 10 DCs, but it showed 18). Some servers are repeated because they have multiple functions.

The reason behind this is the domain controller discovery process triggered by ONTAP's Security Daemon (SecD).

What it does: it is an automatic procedure triggered by SecD. ONTAP uses dynamic server discovery to find domain controllers (DCs) and their associated services, such as LSA, NETLOGON, Kerberos and LDAP. It discovers all the DCs, including preferred DCs, all the DCs in the local site, and all remote DCs. No wonder you are seeing so many of them being discovered.

Starting with ONTAP 9.3, the discovery behavior was changed:

=========================================

A new option 'discovery-mode' was added under the command directory vserver cifs domain discovered-servers to control server discovery.

site - Only DCs in the local site will be discovered.

none - Server discovery will not be done, and ONTAP will depend only on the preferred DCs that are configured.

You can use 'vserver active-directory discovered-servers reset-servers' command to discard stored information about LDAP servers and domain controllers. After discarding server information, the SVM reacquires current information about these external servers. This can be useful when the connected servers are not responding appropriately.

If you have access to the NetApp KB site, you can view this article:

What is Domain Controller Discovery?

https://kb.netapp.com/app/answers/answer_view/a_id/1076594

What I am talking about is something different. The output of "vserver services dns hosts show" lists two kinds of servers and network devices: those we manually added to the local DNS host list with "vserver services dns hosts create -vserver cardinal-svm -address 172.22.11.1 -hostname servername", and those (not necessarily DNS servers) that the cluster somehow knows about, even though we never ran a command to add them and they have no entry registered in our domain DNS.

What are the network device entries you mentioned? What do those devices do?

Some are URLs of websites that we host, some are routers at remote sites, and some are iLO service processors of servers. None of those items uses storage hosted on the cluster, and none ever had a DNS entry manually created on the cluster.

Ahh, I get what you mean. Could this be the reason?

Devices auto-discovered and added to the host entries:

Can you check if this option is set on your cluster:

Starting from Data ONTAP 8.2, CDP is enabled by default

::> options cdpd*

Basically, any network device that supports the Industry Standard Discovery Protocol (ISDP) or CDP can be auto-discovered by Data ONTAP. The auto-discovered devices include not only switches but also hosts.

Oddly, on Cluster #1 the setting is enabled.

    Oriole::> run -node Oriole-0* options cdpd *
    4 entries were acted on.

    Node: Oriole-01
    Setting invalid option cdpd failed.
    cdpd.enable                  on         (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Oriole-02
    Setting invalid option cdpd failed.
    cdpd.enable                  on         (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Oriole-03
    Setting invalid option cdpd failed.
    cdpd.enable                  on         (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Oriole-04
    Setting invalid option cdpd failed.
    cdpd.enable                  on         (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

On Cluster #2, the option is not enabled but I see the same devices when I run "vserver services dns hosts show". The clusters are peered, so could that be the reason I see the entries on both even though CDP is disabled?

    Cardinal::*> run -node Cardinal-01 options cdpd *
    Setting invalid option cdpd failed.
    cdpd.enable                  off        (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Cardinal::*> run -node Cardinal-0* options cdpd *
    4 entries were acted on.

    Node: Cardinal-01
    Setting invalid option cdpd failed.
    cdpd.enable                  off        (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Cardinal-02
    Setting invalid option cdpd failed.
    cdpd.enable                  off        (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Cardinal-03
    Setting invalid option cdpd failed.
    cdpd.enable                  off        (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

    Node: Cardinal-04
    Setting invalid option cdpd failed.
    cdpd.enable                  off        (value might be overwritten in takeover)
    cdpd.holdtime                180        (value might be overwritten in takeover)
    cdpd.interval                60         (value might be overwritten in takeover)

Interesting case, to be honest. Peered clusters are connected via intercluster (IC) LIFs; whether devices can be discovered over those is another question.

However, apart from CDP (Cisco Discovery Protocol, for Cisco-connected devices), there is also an industry-standard protocol that could be polled. So I am guessing this is what it is all about.

Can I know your filer model and ONTAP version? Have you upgraded ONTAP recently? If so, could you list all the versions you went through up to the latest?

Each cluster consists of 2 x AFF8040s and 2 x FAS8200s. We are currently on 9.6P2 on both clusters (not sure when this issue actually started).

OK. FAS8040s have existed since 8.3, and the FAS8200 shipped with 9.2, I think.

Could you check what this command shows on both clusters:

::> network port show -fields remote-device-id

The clusters are at two different sites. Each cluster has two CN1610 cluster switches configured.

Results from the cluster where CDP is off:

    Cardinal::> network port show -fields remote-device-id
    node        port    remote-device-id
    ----------- ----    ----------------
    Cardinal-01 e0M     -
    Cardinal-01 e0a     CN1610-ST-01
    Cardinal-01 e0b     -
    Cardinal-01 e0c     CN1610-ST-02
    Cardinal-01 e0d     -
    Cardinal-01 e0e     -
    Cardinal-01 e0f     -
    Cardinal-01 e0g     -
    Cardinal-01 e0h     -
    Cardinal-01 e0i     -
    Cardinal-01 e0j     -
    Cardinal-01 e0k     -
    Cardinal-01 e0l     -
    Cardinal-01 e0l-206 -
    Cardinal-01 e1a     -
    Cardinal-01 e1b     -
    Cardinal-01 e1c     -
    Cardinal-01 e1d     -
    Cardinal-01 e3a     -
    Cardinal-01 e3b     -
    Cardinal-02 e0M     -
    Cardinal-02 e0a     CN1610-ST-01
    Cardinal-02 e0b     -
    Cardinal-02 e0c     CN1610-ST-02
    Cardinal-02 e0d     -
    Cardinal-02 e0e     -
    Cardinal-02 e0f     -
    Cardinal-02 e0g     -
    Cardinal-02 e0h     -
    Cardinal-02 e0i     -
    Cardinal-02 e0j     -
    Cardinal-02 e0k     -
    Cardinal-02 e0l     -
    Cardinal-02 e0l-206 -
    Cardinal-02 e1a     -
    Cardinal-02 e1b     -
    Cardinal-02 e1c     -
    Cardinal-02 e1d     -
    Cardinal-02 e3a     -
    Cardinal-02 e3b     -
    Cardinal-03 e0M     -
    Cardinal-03 e0a     CN1610-ST-01
    Cardinal-03 e0b     CN1610-ST-02
    Cardinal-03 e0c     -
    Cardinal-03 e0d     -
    Cardinal-03 e0e     -
    Cardinal-03 e0f     -
    Cardinal-03 e0g     -
    Cardinal-03 e0h     -
    Cardinal-03 e1a     -
    Cardinal-03 e1b     -
    Cardinal-04 e0M     -
    Cardinal-04 e0a     CN1610-ST-01
    Cardinal-04 e0b     CN1610-ST-02
    Cardinal-04 e0c     -
    Cardinal-04 e0d     -
    Cardinal-04 e0e     -
    Cardinal-04 e0f     -
    Cardinal-04 e0g     -
    Cardinal-04 e0h     -
    Cardinal-04 e1a     -
    Cardinal-04 e1b     -
    62 entries were displayed.

Results from the cluster where CDP is on:

    Oriole::> network port show -fields remote-device-id
    node      port    remote-device-id
    --------- ----    ----------------
    Oriole-01 e0M     -
    Oriole-01 e0a     CN1610-BTP-01
    Oriole-01 e0b     -
    Oriole-01 e0c     CN1610-BTP-02
    Oriole-01 e0d     -
    Oriole-01 e0e     -
    Oriole-01 e0f     -
    Oriole-01 e0g     -
    Oriole-01 e0g-240 -
    Oriole-01 e0h     -
    Oriole-01 e0i     -
    Oriole-01 e0j     -
    Oriole-01 e0k     -
    Oriole-01 e0l     -
    Oriole-01 e0l-223 -
    Oriole-01 e1a     -
    Oriole-01 e1b     -
    Oriole-01 e1c     -
    Oriole-01 e1d     -
    Oriole-01 e3a     -
    Oriole-01 e3b     -
    Oriole-02 e0M     -
    Oriole-02 e0a     CN1610-BTP-01
    Oriole-02 e0b     -
    Oriole-02 e0c     CN1610-BTP-02
    Oriole-02 e0d     -
    Oriole-02 e0e     -
    Oriole-02 e0f     -
    Oriole-02 e0g     -
    Oriole-02 e0g-240 -
    Oriole-02 e0h     -
    Oriole-02 e0i     -
    Oriole-02 e0j     -
    Oriole-02 e0k     -
    Oriole-02 e0l     -
    Oriole-02 e0l-223 -
    Oriole-02 e1a     -
    Oriole-02 e1b     -
    Oriole-02 e1c     -
    Oriole-02 e1d     -
    Oriole-02 e3a     -
    Oriole-02 e3b     -
    Oriole-03 e0M     -
    Oriole-03 e0a     CN1610-BTP-01
    Oriole-03 e0b     CN1610-BTP-02
    Oriole-03 e0c     -
    Oriole-03 e0d     -
    Oriole-03 e0e     -
    Oriole-03 e0f     -
    Oriole-03 e0g     -
    Oriole-03 e0h     -
    Oriole-03 e1a     -
    Oriole-03 e1b     -
    Oriole-04 e0M     -
    Oriole-04 e0a     CN1610-BTP-01
    Oriole-04 e0b     CN1610-BTP-02
    Oriole-04 e0c     -
    Oriole-04 e0d     -
    Oriole-04 e0e     -
    Oriole-04 e0f     -
    Oriole-04 e0g     -
    Oriole-04 e0h     -
    Oriole-04 e1a     -
    Oriole-04 e1b     -
    64 entries were displayed.
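To compare the two outputs above without reading every row, a small sketch like this could reduce each capture to the ports that actually report a neighbor. It assumes one row per line with exactly three columns, as the CLI prints it; `discovered_neighbors` is a hypothetical helper name. In both captures above, the only remote devices reported are the CN1610 cluster switches.

```python
from collections import defaultdict


def discovered_neighbors(text):
    """Map each remote device ID to the (node, port) pairs that report it."""
    neighbors = defaultdict(list)
    for line in text.splitlines():
        parts = line.split()
        # Data rows are exactly: node, port, remote-device-id.
        # Skip the prompt, the header, the dashed rule, and the summary line.
        if len(parts) != 3 or parts[0] == "node" or set(parts[0]) == {"-"}:
            continue
        node, port, device = parts
        if device != "-":  # "-" means no neighbor was discovered on the port
            neighbors[device].append((node, port))
    return dict(neighbors)
```

Running it on the saved output of each cluster and comparing the resulting keys shows at a glance whether any device other than the cluster switches was discovered.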

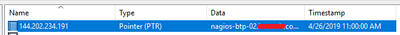

Coming back to this, as I'd really like to find out whether there is a way to update these entries automatically. I just noticed this one, for instance:

- We never manually added that server to the list

- A different server (nagios-btp-02) has been using that IP for months but the entry on the cluster didn't change

- The two DNS servers that the cluster is configured to hit only have entries for the new server

Is there any way that we can force the cluster to update all of the automatically discovered entries?
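Until there is an answer on forcing a refresh, one way to at least spot entries like this is to compare each host-table entry with what DNS currently says about its address. The sketch below does a reverse (PTR) lookup from an admin host and reports when the address now belongs to a different name. It assumes PTR records exist for the addresses and that the admin host queries the same DNS servers as the cluster; `ptr_name` and `check_entry` are hypothetical helper names.

```python
import socket


def ptr_name(address):
    """Return the name DNS currently maps the address to, or None."""
    try:
        return socket.gethostbyaddr(address)[0]
    except OSError:
        return None


def short(name):
    """Reduce a hostname or FQDN to its lowercase first label."""
    return name.split(".")[0].lower()


def check_entry(hostname, address, reverse_lookup=ptr_name):
    """Classify a host-table entry against the current reverse DNS record."""
    current = reverse_lookup(address)
    if current is None:
        return "no-ptr-record"
    if short(current) == short(hostname):
        return "in-sync"
    return f"reassigned-to:{current}"
```

For the case described above, an entry whose address now resolves back to nagios-btp-02 would be reported as reassigned, which marks it as a candidate for manual cleanup.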