VMware Solutions Discussions

NetApp iSCSI best configuration?

Hello,

I have 3 ESX 4 servers connected to a NetApp FAS2020 array with dual active/active controllers. Each controller has 2 interfaces (e0a and e0b).

According to the NetApp documentation, there are two connection options: using LACP, or using the standard VMware software iSCSI initiator with MPIO, creating 2 or more vmkernel ports.

I opted for the second option, dedicating 2 physical NICs in each ESX server to iSCSI. I created a vSwitch for iSCSI with 2 vmkernel ports, each with one of the two adapters left unused (the "Unused Adapter" option).

On the array side, I configured the e0a interface of each controller with the e0a interface of the other controller as its partner, and also assigned a second IP address as an alias. So I have e0a on controller "A" with IP 192.168.1.17 and alias 192.168.1.40; e0b on controller "A" with IP 192.168.2.17 and alias 192.168.2.40; e0a on controller "B" with IP 192.168.1.18 and no alias; and e0b on controller "B" with IP 192.168.2.18 and no alias.

Now, in the VMware iSCSI initiator configuration, I add only the IP 192.168.1.17 under "Dynamic Discovery", and the four IP addresses appear on the "Static Discovery" tab.

Once I add this, each datastore shows 4 available paths.

If I add more alias IP addresses, the paths multiply. The same happens if I add more vmkernel ports to the vSwitch.

I have the option of adding 2 more NICs to each ESX server for the iSCSI vSwitch, and 2 more vmkernel ports per server. Is that a good idea or not?

Thanks.

Looks OK and seems to be in line with best practices. Have you also bound the vmkernel ports to the iSCSI vmhba on the ESX server so that it load-balances traffic? That way it will distribute the load across each vmkernel port rather than using only one:

esxcli --server hostname swiscsi nic add -n vmk1 -d vmhbaxx

esxcli --server hostname swiscsi nic add -n vmk2 -d vmhbaxx

To list them:

esxcli --server hostname swiscsi nic list --adapter vmhbaxx

The behaviour is expected if you add additional IP aliases on the NetApp vif, as ESX will see each one as another interface. The same goes for the vmkernel ports. Adding more vmkernel ports is only required if you need more aggregate bandwidth.

Do a cf takeover and giveback and make sure the paths are still active during that process.

Technocis
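
The way the paths multiply can be sketched in a few lines of Python. This is only a rough model, not how ESX actually enumerates paths: it assumes each vmkernel port reaches only the target IPs on its own subnet, that the subnets are /24, and that a LUN is visible only through the IPs of the controller that owns it. The vmkernel IPs (192.168.1.10 and 192.168.2.10) are made up for illustration; the controller "A" IPs are the ones from the original post.

```python
import ipaddress


def count_paths(vmk_ips, target_ips, prefix_len=24):
    """Return (vmkernel IP, target IP) pairs that share a subnet.

    Simplified model: one path per vmkernel port / target IP pair
    on the same subnet. The /24 prefix is an assumption.
    """
    paths = []
    for vmk in vmk_ips:
        vmk_net = ipaddress.ip_interface(f"{vmk}/{prefix_len}").network
        for tgt in target_ips:
            if ipaddress.ip_address(tgt) in vmk_net:
                paths.append((vmk, tgt))
    return paths


# Hypothetical vmkernel port IPs, one per iSCSI subnet.
vmk_ips = ["192.168.1.10", "192.168.2.10"]

# Controller "A" interfaces and aliases from the original post.
controller_a = ["192.168.1.17", "192.168.1.40", "192.168.2.17", "192.168.2.40"]

print(len(count_paths(vmk_ips, controller_a)))  # 4 paths, as reported

# Two more (hypothetical) vmkernel ports, one per subnet, double the count.
more_vmk = vmk_ips + ["192.168.1.11", "192.168.2.11"]
print(len(count_paths(more_vmk, controller_a)))  # 8 paths
```

Under these assumptions, every extra alias adds one path per vmkernel port on that subnet, and every extra vmkernel port adds one path per target IP on its subnet, so the total grows quickly across 3 hosts.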

Hello,

Yes, I have enabled the vmhba.

And what about adding more NICs for the vmkernel ports, and the possible overloading of the array with iSCSI connections, taking into account that there are 3 ESX servers?

Thanks.

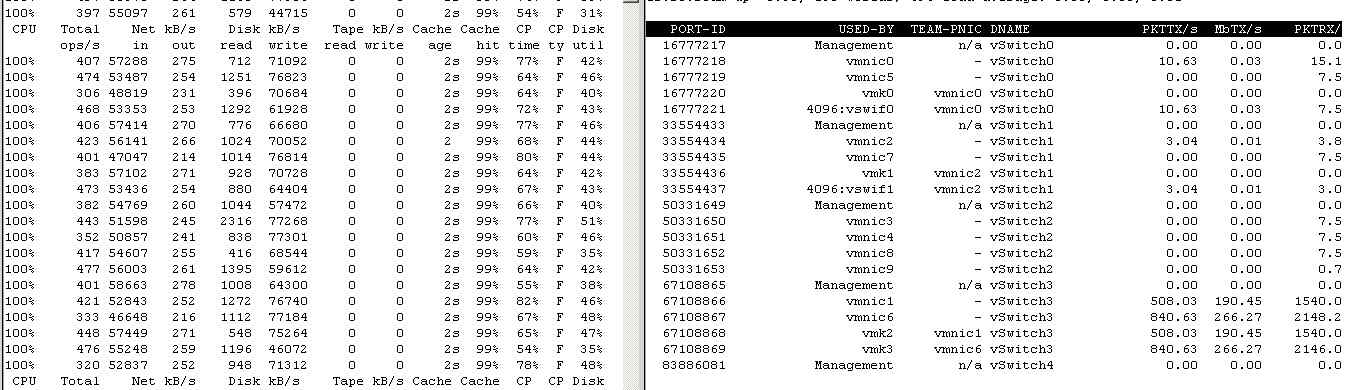

statistics and performance

Hello again,

I'm in the testing phase and don't know whether the results I'm getting are good or bad.

The only load in the tests is cloning a virtual machine from a local disk of the ESX server to the FAS2020 array. From the graphs I get the following measurements:

In the MbTX/s column of the esxtop network view (n), vmk2 and vmk3 (iSCSI) do not exceed 160 Mb. Is that normal?

In the NetApp graphs, protocol latency is around 5 ms.

The array has 13 SAS 15k rpm disks.

Thanks.
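
To put the esxtop figure in context, a quick unit conversion may help. This is a sketch under two assumptions that the thread does not confirm: the iSCSI links are 1 Gbit/s, and 160 Mb/s is the rate seen on a single vmkernel port (it could also be the combined figure).

```python
def link_utilization(mbit_per_s, link_mbit_per_s=1000.0):
    """Convert megabits/s to megabytes/s and to a fraction of link capacity.

    link_mbit_per_s defaults to 1 Gbit/s, which is an assumption here.
    """
    mbytes_per_s = mbit_per_s / 8.0
    fraction = mbit_per_s / link_mbit_per_s
    return mbytes_per_s, fraction


mb_s, frac = link_utilization(160.0)
print(f"{mb_s:.0f} MB/s, {frac:.0%} of the link")  # 20 MB/s, 16% of the link
```

If those assumptions hold, the network itself is far from saturated, so the limit is more likely somewhere else in the chain.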

In the graphs of NetApp protocol latency around 5 ms ...

It is very good. Anything below 15-20 ms is usually acceptable.

Regards,

Radek

Thank you ...

But what about the network throughput on the NICs? Shouldn't it be higher?

Thank you ...

It is a bit of a tricky question.

The stats only show that you are not maxing out the link, so the limiting factor could be your ESX host itself, as it does data shifting from one place to another.

CPU utilisation on the filer is fairly high, but still there is some headroom I believe.

I think you will want to watch CPU utilization and latency more closely than bandwidth in this setup. Running switches in software, especially at gig or 10gig speeds, can put a lot of load on a general-purpose CPU. The same is true on the filer side, since a 2xxx series box does not have the same horsepower as some of the bigger systems. Not that it will be your bottleneck, but it's certainly something to watch.

But the #1 stat, in my opinion, is the latency for disk access. Watching this on your VMs as well as on the filer itself will give you a great idea of what's going on in the storage subsystem as a whole.

Hello,

First, thank you all very much for answering.

Jeremypage, sorry, but I do not understand your reply. I'm Spanish and I use Google Translate, and I don't understand what you're saying.

I understand that the FAS2020 is a low-end model, but in this test, with 13 SAS disks at 15,000 rpm, a single server, and a single copy process to the array, shouldn't it yield better results?

I am saying it is more likely the bottleneck is on the vSwitch/VMKernel connection, not the filer.

What happens when you perform similar tests with a normal (non-VM) iSCSI client?

Hello jeremypage,

I don't have a Windows machine available to connect and run the tests, but I did the following test:

From the same ESX server, and with the same vSwitch/vmkernel setup, I connected to LUN 1 on controller "A" of the FAS2020. Then I ran the following command to create a heavy load on the array ( time vmkfstools -c 12G -d eagerzeroedthick test.vmk ). The result is graph 1.

Then I connected to another LUN (2) on the same controller, with the same result as graph 1.

But then I connected to another LUN (3) on the other controller, "B" (the FAS2020 is an active/active model), and the result doubled. See graph 2.

In both cases the CPU is at 100%. Could that be the reason?

thanks
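
For comparing runs like these, the elapsed time printed by `time` can be turned into an average write rate. A minimal sketch, treating the 12G disk as 12 GiB of zeros written to the LUN (an assumption); the elapsed time below is a placeholder, not a value from this thread, so substitute the "real" figure from your own run.

```python
def throughput_mib_per_s(size_gib, elapsed_s):
    """Average write rate in MiB/s for size_gib GiB written in elapsed_s seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return size_gib * 1024 / elapsed_s


# Placeholder only: replace with the "real" time reported by `time`.
ELAPSED_S = 240.0

print(f"{throughput_mib_per_s(12, ELAPSED_S):.1f} MiB/s")
```

Running the same calculation for the single-controller and two-controller tests gives numbers that can be compared directly with the graphs.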

Sorry for the late response, it's been busy around here. It *could* be the CPU, what does "sysstat -m" show?

Hello again,

A VMware document states: "When you set up multipathing between two iSCSI HBAs and multiple ports on a NetApp storage system, give the two HBAs different dynamic or static discovery addresses to connect to the storage.

The NetApp storage system permits only one connection for each target and each initiator. Attempts to make additional connections cause the first connection to drop."

On the other hand, on the FAS2020 I set the parameter "iscsi.max_connections_per_session" to 32 (the maximum), and yet the command

iscsi show -p iscsi session tells me that in each session the "max connection" is 1

Also, when I generate load on the array, the session iscsi-v command shows me this message again and again: "Seq / xxx "..... Scsidb_RD_WaitingBurst

Is all this normal, or do I have to set some additional parameters?