Active IQ Unified Manager Discussions

- Re: difference of "qos_ops" and "total_ops"

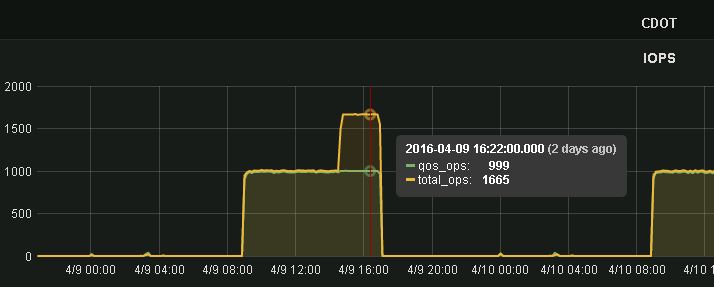

I'm using OnCommand Performance Manager and NetApp Harvest to watch performance.

I found two metrics for total IOPS, "qos_ops" and "total_ops".

But sometimes qos_ops is different from total_ops!

When I limit a volume to 1000 IOPS, qos_ops is 1000 IOPS but total_ops is over 1000 (around 1600 IOPS).

What is the difference between "qos_ops" and "total_ops"?

Thank you.

Solved! See The Solution

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @paso

Unfortunately the 'other_ops' bucket is a catch-all counter regardless of requester. There are other counters at the volume level like 'nfs_other_ops' that could be used to see if these are caused by nfs, and the same exist for other protocols, but if the work is not protocol related then we don't have a further breakdown.

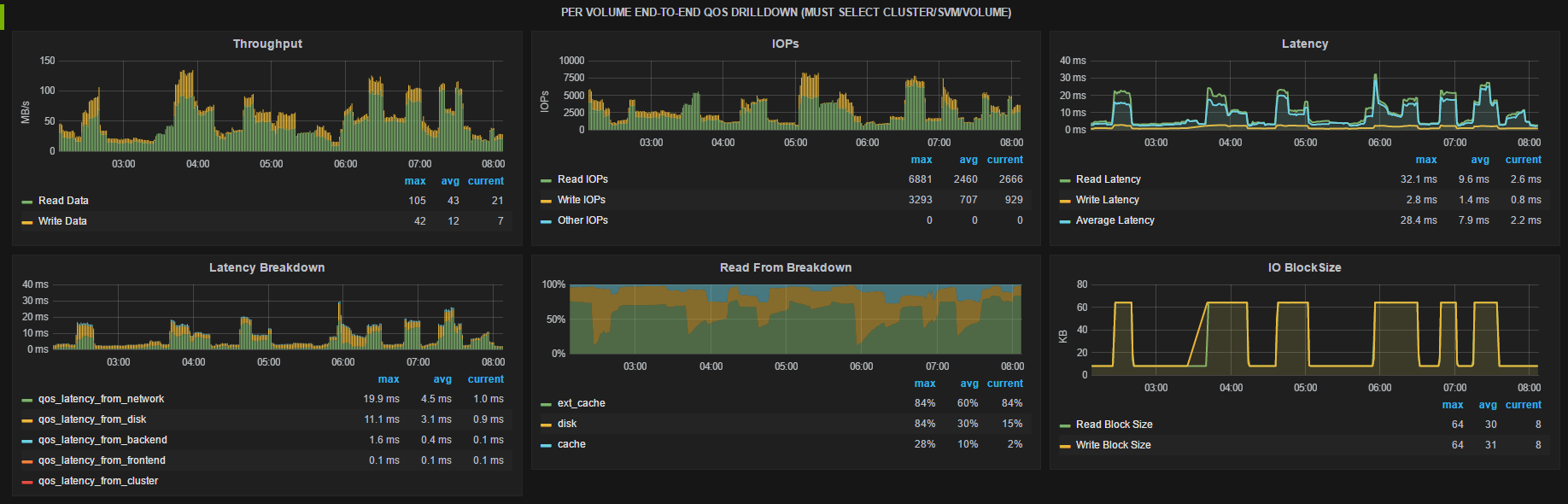

One feature I really like with cDOT is the views enabled by QoS counters which are labeled "latency_from_*" in Grafana. If you have a problem with perf on a single volume I would check the "NetApp Detail: Volume" dashboard, pick from the template the group/cluster/svm/volume, and then look at the following row:

From here you will see the overall workload characteristics (throughput, IOPs, latency), understand it's use of cache, and see what layer the latency is coming from.

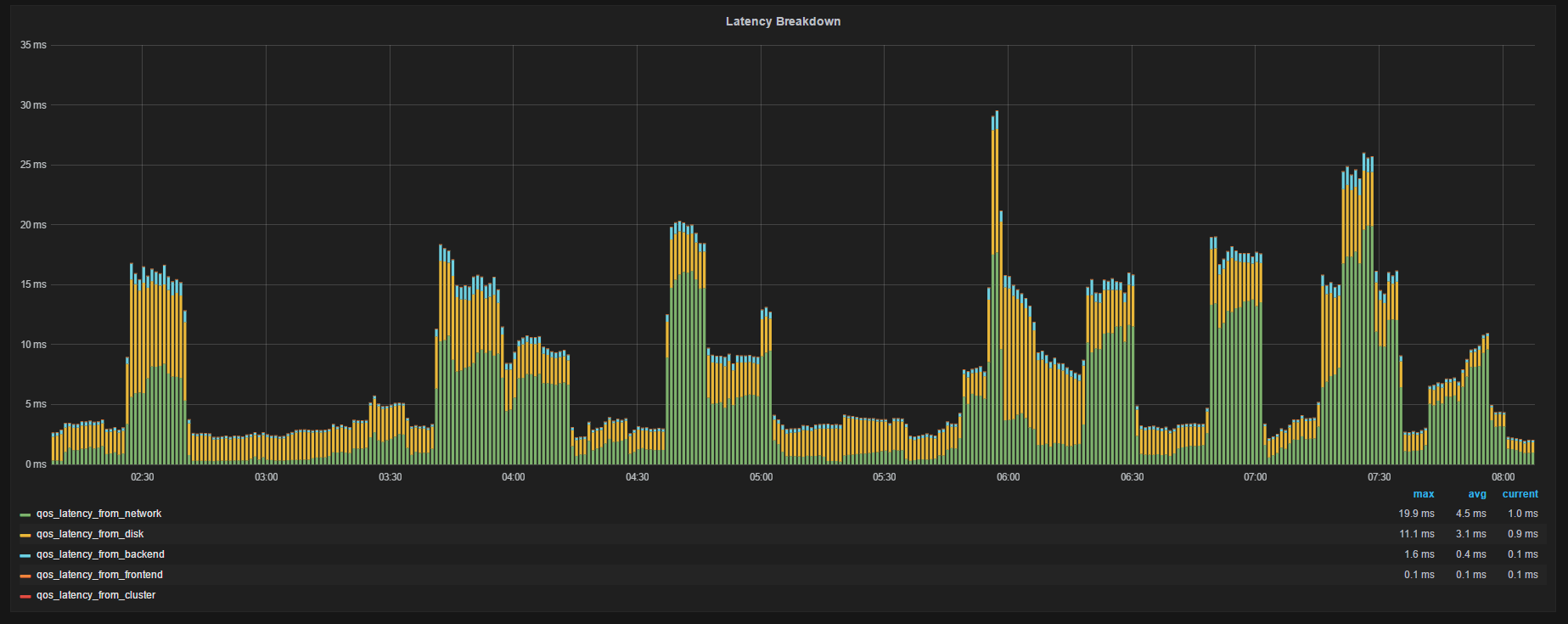

Zooming in on the Latency Breakdown:

Each graph shows the avg latency breakdown for IOs by component in the data path where the 'from" is:

• Network: latency from the network outside NetApp like waiting on vscan for NAS, or SCSI XFER_RDY (which includes network and host delay, here for an example of a write) for SAN

• Throttle: latency from QoS throttle

• Frontend: latency to unpack/pack the protocol layer and translate to/from cluster messages occuring on the node that owns the LIF

• Cluster: latency from sending data over the cluster interconnect (the 'latency cost' of indirect IO)

• Backend: latency from the WAFL layer to process the message on the node that owns the volume

• Disk: latency from HDD/SSD access

So I can see at times I had nearly 30ms avg latency (around 0600) at at that moment the largest contributor was network. Maybe doing the same for your trouble volume will yield some direction on where to investigate. If you still need to go deeper the next step would be to collect a perfstat and open a support case asking the question and referencing the perfstat. For the perfstat I would recommend 3 interations of 5 minutes each while the problem is occurring. Perfstat has more diagnostic info than Harvest collects

Hope this helps!

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)

Blog: It all begins with data

If this post resolved your issue, please help others by selecting ACCEPT AS SOLUTION or adding a KUDO

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @paso,

Good question! total_ops tracks WAFL operations while qos_ops tracks protocol operations. WAFL IOs have a max size of 64KB but client IOs can be much larger, so if a 128KB protocol IO arrives it will cause 2 x 64KB WAFL IOs and you will see the counters deviate. Both the qos_ and the normal volume counters can be interesting depending on your use case. The qos_ ones measure from the frontend client perspective: they can include network delay from a lossy network or busy host and latency introduced intentionally by QoS throttles, and they are counted per client IO size, which for big IOs can confuse things. The non-qos_ volume counters measure within WAFL, give you the health of the storage system, and are capped at 64KB per IO.

Hope that helps and if you have a follow-on question fire away!

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)

Blog: It all begins with data

If this post resolved your issue, please help others by selecting ACCEPT AS SOLUTION or adding a KUDO


Thank you for your answer!

And sorry for my simple English.

I understand that we should watch the non-qos_ values if we want to know system performance.

If our customer wants to know their volume performance, we should check the qos_ values.

But I don't know of any NFS traffic with a block size over 64KB. (We don't use any protocol except NFS.)

For instance, can "other ops" have a big block size?


Hi @paso

Yes, qos_ values will be closer to what the customer sees while the non-qos values will show internal values.

For latency the qos_ counters can include network latency, QoS throttling, and CPU time on the frontend node that owns the LIF, and are for the IO size of the client. The non-qos latency counters measure inside the system, so they cover CPU time on the backend node that owns the volume and wait time for accessing the disk. So qos latency should always be more than the non-qos latency, but usually just by a little bit.

For ops the qos_ counters reflect the client perspective as well, so 5 ops of 256KB each will be counted as 5 ops. The non-qos counters use the internal max of 64KB, so the same work would be counted as 20 ops.
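To make the op-count arithmetic concrete, here is a minimal Python sketch. It is not Harvest or ONTAP code: the function names are made up for this illustration, and it assumes every client IO is simply split into 64KB WAFL IOs as described above.

```python
import math

# Maximum WAFL IO size as stated in this thread (assumption for the sketch).
WAFL_MAX_IO_KB = 64


def wafl_ops_for(client_io_kb: int) -> int:
    """WAFL IOs that a single client IO of the given size would be split into."""
    return max(1, math.ceil(client_io_kb / WAFL_MAX_IO_KB))


def compare_counters(client_io_sizes_kb):
    """Return (qos-style op count, WAFL-style op count) for a list of client IOs."""
    qos_ops = len(client_io_sizes_kb)  # one op per client request
    total_ops = sum(wafl_ops_for(size) for size in client_io_sizes_kb)
    return qos_ops, total_ops


print(compare_counters([128]))      # one 128KB client IO
print(compare_counters([256] * 5))  # five 256KB client IOs
```

Under these assumptions a single 128KB IO counts as 1 client op and 2 WAFL ops, and five 256KB IOs count as 5 client ops and 20 WAFL ops, matching the figures in the posts.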

For NFSv4 (and 4.1) other ops I can also imagine they might be different because NFSv4 has the concept of compound operations. A compound operation allows the client to batch ops, so in NFSv3 to work with a file you might issue operations such as LOOKUP, GETATTR, ACCESS, READ, and SETATTR individually, but in NFSv4 the equivalent operations could be batched in a single compound operation. So for qos it might report 1, while for non-qos it might report 5. I'm not sure on this one but could be. Do you see the difference in IOP count for other ops?

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)


Thank you very much.

This is a good study for me.

>but in NFSv4 these could be batched in a single compound operation.

But we use NFSv3.

>I'm not sure on this one but could be. Do you see the difference in IOP count for other ops?

Yes, and I found detailed data.

I think that 'getattr', 'lookup_total', or 'remove' ops each have double the count in WAFL 😉

Thank you.


Hi @paso

I think volume 'other' ops might also include backend ops from things like deduplication, SnapMirror, reallocation, etc. Were there any other activities on the volume that you can correlate with the timing?

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)


>Were there any other activities on the volume that you can correlate with the timing?

No, there were not. We don't use dedupe, SnapMirror runs at midnight, and this graph is the same every day.

How can I check these system activities?

Sometimes a customer that uses many other IOPS says the volume is not delivering performance.


Hi @paso

Unfortunately the 'other_ops' bucket is a catch-all counter regardless of requester. There are other counters at the volume level like 'nfs_other_ops' that could be used to see if these are caused by nfs, and the same exist for other protocols, but if the work is not protocol related then we don't have a further breakdown.

One feature I really like with cDOT is the views enabled by QoS counters which are labeled "latency_from_*" in Grafana. If you have a problem with perf on a single volume I would check the "NetApp Detail: Volume" dashboard, pick from the template the group/cluster/svm/volume, and then look at the following row:

From here you will see the overall workload characteristics (throughput, IOPs, latency), understand it's use of cache, and see what layer the latency is coming from.

Zooming in on the Latency Breakdown:

Each graph shows the avg latency breakdown for IOs by component in the data path where the 'from" is:

• Network: latency from the network outside NetApp like waiting on vscan for NAS, or SCSI XFER_RDY (which includes network and host delay, here for an example of a write) for SAN

• Throttle: latency from QoS throttle

• Frontend: latency to unpack/pack the protocol layer and translate to/from cluster messages occuring on the node that owns the LIF

• Cluster: latency from sending data over the cluster interconnect (the 'latency cost' of indirect IO)

• Backend: latency from the WAFL layer to process the message on the node that owns the volume

• Disk: latency from HDD/SSD access

So I can see at times I had nearly 30ms avg latency (around 0600) at at that moment the largest contributor was network. Maybe doing the same for your trouble volume will yield some direction on where to investigate. If you still need to go deeper the next step would be to collect a perfstat and open a support case asking the question and referencing the perfstat. For the perfstat I would recommend 3 interations of 5 minutes each while the problem is occurring. Perfstat has more diagnostic info than Harvest collects
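If you export the latency_from_* values for one point in time, picking out the dominant layer is simple arithmetic. The Python sketch below shows the idea. The numbers are invented for illustration (chosen to add up to roughly 30ms with network on top) and the function name is hypothetical, not part of Harvest or Grafana.

```python
def largest_contributor(breakdown_ms):
    """Return (component name, share of total latency) for the biggest component."""
    total = sum(breakdown_ms.values())
    name = max(breakdown_ms, key=breakdown_ms.get)
    share = breakdown_ms[name] / total if total else 0.0
    return name, share


# Hypothetical sample values in milliseconds, not taken from a real system.
sample = {
    "latency_from_network": 18.0,
    "latency_from_throttle": 0.0,
    "latency_from_frontend": 0.5,
    "latency_from_cluster": 0.2,
    "latency_from_backend": 1.3,
    "latency_from_disk": 10.0,
}

name, share = largest_contributor(sample)
print(f"{name} accounts for {share:.0%} of the average latency")
```

With these made-up values the network component dominates, which is the kind of reading that would point the investigation outside the storage system first.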

Hope this helps!

Cheers,

Chris Madden

Storage Architect, NetApp EMEA (and author of Harvest)


Thank you for your reply!

I checked the Latency Breakdown in Grafana and understood that the latency comes from the QoS limit.

I like Harvest and Grafana.

They are useful for maintaining the reliability of our cloud service.

Thank you very much!


Hi Chris

First of all, great tool! I have been using this to monitor our client's environment with petabytes of NetApp storage. Very informative.

Regarding the latency_from_xxxx counters on the volume QoS "Latency From" drilldown: we're on cDOT 8.3. I can see these are collected from the workload_detail_volume section in the template, and the counters are literally named latency_from_xxxx. However, I don't see those counters in ONTAP with statistics catalog counter show -object ... Or did I miss something obvious?

My question is: which counters are used to calculate each section?

In our environment, we don't get any value for latency_from_network. There is no data in Graphite, hence there is nothing to select under Metrics in the Grafana dashboard.

Thanks

Lisa


Hi @lisa5

The 'latency_from_XXX' data comes from the workload_detail and workload_detail_volume object types.

You can use native ONTAP statistics commands to get an instance list, but you can also use the perf-counters-utility from Harvest like this:

# /opt/netapp-harvest/util/perf-counters-utility -host 10.1.1.2 -user admin -pass secret -f workload_detail_volume -in | grep asp_nfs_01

asp_nfs_01-wid7807.CPU_dblade: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_dblade

asp_nfs_01-wid7807.CPU_dblade_background: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_dblade_background

asp_nfs_01-wid7807.CPU_exempt: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_exempt

asp_nfs_01-wid7807.CPU_nblade: mt_stc4009:kernel:asp_nfs_01-wid7807.CPU_nblade

asp_nfs_01-wid7807.CPU_nblade: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_nblade

asp_nfs_01-wid7807.CPU_network: mt_stc4009:kernel:asp_nfs_01-wid7807.CPU_network

asp_nfs_01-wid7807.CPU_network: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_network

asp_nfs_01-wid7807.CPU_raid: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_raid

asp_nfs_01-wid7807.CPU_wafl_exempt: mt_stc4010:kernel:asp_nfs_01-wid7807.CPU_wafl_exempt

asp_nfs_01-wid7807.DELAY_CENTER_CLUSTER_INTERCONNECT: mt_stc4009:kernel:asp_nfs_01-wid7807.DELAY_CENTER_CLUSTER_INTERCONNECT

asp_nfs_01-wid7807.DELAY_CENTER_DISK_IO: mt_stc4010:kernel:asp_nfs_01-wid7807.DELAY_CENTER_DISK_IO

asp_nfs_01-wid7807.DELAY_CENTER_NVLOG_TRANSFER: mt_stc4010:kernel:asp_nfs_01-wid7807.DELAY_CENTER_NVLOG_TRANSFER

asp_nfs_01-wid7807.DELAY_CENTER_WAFL_SUSP_CP: mt_stc4010:kernel:asp_nfs_01-wid7807.DELAY_CENTER_WAFL_SUSP_CP

asp_nfs_01-wid7807.DELAY_CENTER_WAFL_SUSP_OTHER: mt_stc4010:kernel:asp_nfs_01-wid7807.DELAY_CENTER_WAFL_SUSP_OTHER

asp_nfs_01-wid7807.DISK_HDD_n2_sata_68723b59-6f63-470c-b4df-91e66c72592b: mt_stc4010:kernel:asp_nfs_01-wid7807.DISK_HDD_n2_sata_68723b59-6f63-470c-b4df-91e66c72592b

In there you will see the service center "DELAY_CENTER" names. Harvest then renames them to be more friendly, and if you search the code you'll find this mapping:

DELAY_CENTER_DISK_IO = latency_from_disk

DELAY_CENTER_QOS_LIMIT = latency_from_throttle

DELAY_CENTER_CLUSTER_INTERCONNECT = latency_from_cluster

CPU_nblade = latency_from_frontend

CPU_dblade = latency_from_backend

DELAY_CENTER_NETWORK = latency_from_network

DELAY_CENTER_WAFL_SUSP_OTHER = latency_from_suspend
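Purely as an illustration of that renaming step, the mapping can be written as a lookup table. This is a Python sketch and not Harvest's actual code. The helper name is hypothetical, and it assumes the instance name has the form workload.service_center as in the listing above.

```python
# Service center name -> friendly metric name, copied from the mapping above.
FRIENDLY_NAMES = {
    "DELAY_CENTER_DISK_IO": "latency_from_disk",
    "DELAY_CENTER_QOS_LIMIT": "latency_from_throttle",
    "DELAY_CENTER_CLUSTER_INTERCONNECT": "latency_from_cluster",
    "CPU_nblade": "latency_from_frontend",
    "CPU_dblade": "latency_from_backend",
    "DELAY_CENTER_NETWORK": "latency_from_network",
    "DELAY_CENTER_WAFL_SUSP_OTHER": "latency_from_suspend",
}


def friendly_name(instance: str):
    """Split 'workload.service_center' and return (workload, metric), or None
    when the service center is not one of the mapped names."""
    workload, _, service_center = instance.rpartition(".")
    metric = FRIENDLY_NAMES.get(service_center)
    return (workload, metric) if metric else None


print(friendly_name("asp_nfs_01-wid7807.DELAY_CENTER_DISK_IO"))
print(friendly_name("asp_nfs_01-wid7807.CPU_raid"))  # not in the mapping
```

The rename is the easy part. Turning the raw service center counters into the latency values shown in Grafana is a separate calculation that this sketch does not attempt.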

I will warn you though that calculating these is non-trivial and best left to Harvest 🙂

If you are at the CLI I would try this one to see latency breakdown:

blob1::> qos statistics workload latency show

| Workload | ID | Latency | Network | Cluster | Data | Disk | QoS | NVRAM |
|---|---|---|---|---|---|---|---|---|
| -total- | - | 5.95ms | 81.00us | 2.00us | 89.00us | 5.74ms | 0ms | 33.00us |
| User-Default | 2 | 5.95ms | 81.00us | 2.00us | 89.00us | 5.74ms | 0ms | 33.00us |

FYI, I believe the Network column in this qos statistics output is actually 'frontend' in Harvest.
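As a quick sanity check on that sample output, the per-component columns can be converted to one unit and added up. The Python sketch below does this for the -total- row. The helper is hypothetical and only handles the two units (us and ms) that appear in the sample.

```python
# Units seen in the sample output above; anything else raises an error.
UNIT_TO_US = {"us": 1.0, "ms": 1000.0}


def to_microseconds(text: str) -> float:
    """Convert a value such as '81.00us' or '5.95ms' to microseconds."""
    for unit, factor in UNIT_TO_US.items():
        if text.endswith(unit):
            return float(text[: -len(unit)]) * factor
    raise ValueError(f"unrecognized latency value: {text!r}")


columns = ["Latency", "Network", "Cluster", "Data", "Disk", "QoS", "NVRAM"]
total_row = ["5.95ms", "81.00us", "2.00us", "89.00us", "5.74ms", "0ms", "33.00us"]

values_us = dict(zip(columns, map(to_microseconds, total_row)))
component_sum_us = sum(v for name, v in values_us.items() if name != "Latency")

print(f"reported: {values_us['Latency']:.0f} us, components: {component_sum_us:.0f} us")
```

For this sample the components add up to about 5945 us against a reported 5.95 ms, so the breakdown accounts for essentially all of the latency, with Disk as the dominant part. Whether that holds in general is not something this one sample can confirm.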

Anyway, getting back to your question, in Harvest latency_from_network is only populated if ONTAP can 'see' this latency. For SAN protocols with larger block sizes there will be multiple transfers and acks needed and while waiting on the client ack we attribute that latency to network. In NAS you will only see latency here if you are using fpolicy or vscan (I think these show here!) so maybe that explains why it's blank in your case.

Hope this helps!

Cheers,

Chris Madden

Solution Architect - 3rd Platform - Systems Engineering NetApp EMEA (and author of Harvest)


I appreciate your detailed reply!

I guessed from the names that some of them correspond to the DELAY_CENTER_xxxx counters. Your explanation made it totally clear.

We do have vscan, and the average scan latency is around 100ms. But strangely there is no latency recorded under latency_from_network, and there is no latency_from_network.wsp file in the Graphite folder.


Hi @lisa5

After checking with engineering I learned that as of ONTAP 9.1 QOS DELAY_CENTER_NETWORK does not track work related to vscan or fpolicy. The overall QoS latency statistics will include the processing time but it won't show up in the breakdown (i.e. latency_from counters in Graphite). Sorry for my incorrect statements in earlier posts.

I entered two RFEs which you can reference with support or your account team if you desire this feature:

1082732 - "Update QOS DELAY_CENTER_NETWORK to include vscan visits & latency"

1082734 - "Update QOS DELAY_CENTER_NETWORK to include fpolicy visits & latency"

Cheers,

Chris Madden

Solution Architect - 3rd Platform - Systems Engineering NetApp EMEA (and author of Harvest)


Thanks for the breakdown of the metrics.

One I can't find a definition for, however, is "Latency from Suspend". What is this measuring?