Microsoft Virtualization Discussions

Can NetAppDocs be enhanced to include FabricPool ObjectStore data at the volume level?

    Vserver : vs1
    Volume  : vol1

    Feature                                Used Used%
    -------------------------------- ---------- -----
    Volume Data Footprint                2.24TB    2%   <---- Need this for sure
    Footprint in Performance Tier       486.4GB   21%   <---- Need this for sure
    Footprint in ObjectStore1            1.76TB   79%   <---- Need this for sure
    Volume Guarantee                         0B    0%
    Flexible Volume Metadata            14.99GB    0%
    Deduplication                       15.54GB    0%
    Cross Volume Deduplication           6.66GB    0%
    Delayed Frees                        1.78GB    0%
    Total Footprint                      2.27TB    2%

This would be a tremendous help in managing our environment, and it would help any NetApp SMC or other PS assisting a customer who is using FabricPool.

Please let me know if you have any questions or feedback on feasibility.

Thanks in advance!

Hello,

You can also run the "volume show-footprint" command against a volume instead of an aggregate to get more specific detail, though the output against a volume is what you already provided.

See my example below:

    ::> volume show-footprint -volume fabricpool_test

    Vserver : vserver_test
    Volume  : fabricpool_test

    Feature                                Used Used%
    -------------------------------- ---------- -----
    Volume Data Footprint                5.03TB   36%
    Footprint in Performance Tier        4.93TB   98%
    Footprint in bucket_test            97.66GB    2%
    Volume Guarantee                         0B    0%
    Flexible Volume Metadata            29.08GB    0%
    Delayed Frees                       12.39MB    0%
    Total Footprint                      5.06TB   36%
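If you want to script against this output yourself, here is a minimal Python sketch of parsing it. It assumes the text keeps the three-column layout shown above (feature, used, used%). The name `parse_footprint` and the regular expression are my own illustration and are not part of NetAppDocs or any NetApp tool.

```python
import re

# Matches data rows such as "Footprint in Performance Tier   4.93TB   98%".
# Header, separator, and "Vserver :" / "Volume :" lines do not match.
ROW = re.compile(
    r"^\s*(?P<feature>.+?)\s+(?P<used>\d+(?:\.\d+)?[KMGTP]?B)\s+(?P<pct>\d+)%"
)

def parse_footprint(text):
    """Return {feature: (used_string, used_percent)} for one volume's output."""
    rows = {}
    for line in text.splitlines():
        m = ROW.match(line)
        if m:
            rows[m.group("feature")] = (m.group("used"), int(m.group("pct")))
    return rows

# Sample text copied from the example output in this post.
sample = """\
Vserver : vserver_test
Volume : fabricpool_test
Feature Used Used%
-------------------------------- ---------- -----
Volume Data Footprint 5.03TB 36%
Footprint in Performance Tier 4.93TB 98%
Footprint in bucket_test 97.66GB 2%
Volume Guarantee 0B 0%
Flexible Volume Metadata 29.08GB 0%
Delayed Frees 12.39MB 0%
Total Footprint 5.06TB 36%
"""

footprint = parse_footprint(sample)
print(footprint["Footprint in bucket_test"])  # ('97.66GB', 2)
```

The feature names are kept verbatim as dictionary keys, so the object store row shows up under whatever the bucket is called on your system.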

Here are some other commands that might be helpful:

::> storage aggregate object-store show-space

::> aggr show -fields composite

::> df -A -composite

::> storage aggregate show-space -aggregate-name <aggrname>

::> volume show -fields tiering-policy

::> set diag; run local waflcomposite stats show <aggr name | vol name>

You can also add the "-instance" flag at the end of most "show" commands to get more information.

TTRAN,

Thanks for responding. I'm aware of how to pull it out of the CLI, as you should have been able to see from my original post. I use NetAppDocs for internal reporting, and as far as I can find this data is not presently in NetAppDocs. I'm suggesting it would be helpful to the community to add this functionality, especially if the unit suffixes are stripped and the values are presented as numbers in GB. That gives me an easy way to keep trending data for reporting, planning, and troubleshooting purposes.
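To illustrate what "numbers in GB" could look like, here is a small Python sketch that converts the size strings from the CLI output into plain GB values. It assumes the suffixes are binary multiples (1TB = 1024GB); that is my assumption, so verify it against your own systems before relying on the numbers. The helper name `to_gb` is hypothetical.

```python
import re

# Multipliers to GB, assuming binary units (1TB = 1024GB). This is an assumption.
UNITS_IN_GB = {
    "B": 1 / 1024**3,
    "KB": 1 / 1024**2,
    "MB": 1 / 1024,
    "GB": 1.0,
    "TB": 1024.0,
    "PB": 1024.0**2,
}

def to_gb(value):
    """Convert a string such as '486.4GB' or '1.76TB' to a float number of GB."""
    m = re.fullmatch(r"(\d+(?:\.\d+)?)([KMGTP]?B)", value.strip())
    if m is None:
        raise ValueError(f"unrecognized size: {value!r}")
    return float(m.group(1)) * UNITS_IN_GB[m.group(2)]

# Values taken from the footprint output in the original post.
for size in ("2.24TB", "486.4GB", "1.76TB", "0B"):
    print(size, "->", round(to_gb(size), 2), "GB")
```

With every value in the same unit, the columns can be summed or charted directly in a spreadsheet.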

I'd really like to see that in OCUM someday, but right now there are limited ways to capture data related to FabricPool. From a management perspective, that makes it difficult to identify where data is coming from when somebody starts filling it up quickly and you need to ask them to put on the brakes. Hope that makes sense.

NetApp is coming up with some great stuff, but monitoring and reporting around those features is sorely lacking. I hope NetApp will step it up one of these days.

Yep, I can definitely add that. I have been somewhat ignoring that data since I thought it was covered by another property (like in the VolumeSpaceDetails table). But I don't have a system set up with a FabricPool, so I haven't seen real data.

Would you want that in a separate table? Or, ideally, in an existing table? What would make the most sense when reviewing the data?

Jason

Jason,

Thanks for responding!

In FlexVolConfiguration if you could add tiering-policy and tiering-minimum-cooling-days that would be great.

volume show -volume <vol> -fields tiering-policy,tiering-minimum-cooling-days

It might make sense to add it to FlexVolSpaceDetails if you already parse the volume-footprint output. If a new sheet is simpler, or avoids disrupting what other people are doing, that is fine too; I can reconcile it against the other sheets when I add it to a report.

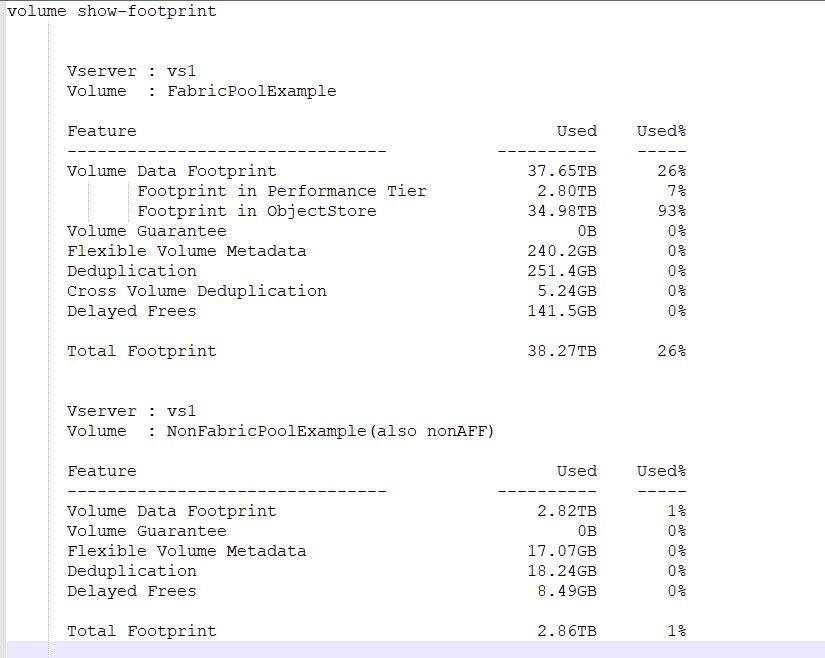

volume show-footprint is the command. You may already be reporting from this. I'll give you an example of how the output looks when it is FabricPool vs not:

Feel free to reach out and I'm happy to do a working session to allow you to get what you need or be a beta tester once you have it figured out.

Thanks again!

Mike

Hi Mike,

So, I'm finally able to get to this. I have a couple of questions.

1. If the volume is not on a FabricPool I still see some footprint info (total, but nothing in bin0/bin1). Do you want to see that data or should I exclude any vols that don't have a bin0 and/or bin1 total?

2. The CLI returns a few more properties than ZAPI, most notably the 'Bin0 Name' and 'Bin1 Name'. Do we care about these? I only ask because pulling via ZAPI is more efficient than using the system-cli call (but if we need the data, then so be it).

3. And, of course, there are a bunch of other fields in there (DedupeMetaFilesFootprint, DelayedFreeFootprint, etc). Do we want those? Essentially all the other properties available via 'vol show-footprint -instance'? There is another set only available via CLI and not ZAPI: CrossVolumeDedupeMetafiles and CrossVolumeDedupeMetafilesTemporary (do we need these too)?

Just trying to determine how to capture the data. Also, I don't see this data anywhere in the ASUP output. Do you have a system I can look at in the ASUP DW that has a FabricPool configured and do you know if this data is present in ASUPs?

Thanks,

Jason

Jason,

#1. Only volumes that have been assigned a tiering policy will have a bin0 (AFF storage) and bin1 (object storage) and then "volume data footprint" should be the sum of those two.

If you are dedicating a page to volumes that are under a FabricPool tiering policy, then I'd exclude volumes that do not have bin0 and bin1. That gives us a place to report and easily analyze individual bin0 and bin1 numbers as well as sum them for an aggregate or cluster. I hope that makes sense.

If you are adding this info to an existing page, just leave those blank or 0 if possible (when a volume has no bin0/bin1). You could still report on volume data footprint, though I imagine you report on this already.
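As a rough illustration of the roll-up I have in mind, here is a Python sketch that skips volumes without bin0/bin1 values and sums the rest. The dictionary keys and the function name `summarize` are hypothetical, the first two rows are the footprint values quoted in this thread converted to GB on the assumption that 1TB = 1024GB, and `vol_plain` is a made-up non-tiered volume.

```python
def summarize(volumes):
    """Sum bin0/bin1 footprints (in GB) over volumes that have both values."""
    tiered = [
        v for v in volumes
        if v["bin0_gb"] is not None and v["bin1_gb"] is not None
    ]
    bin0 = sum(v["bin0_gb"] for v in tiered)
    bin1 = sum(v["bin1_gb"] for v in tiered)
    total = bin0 + bin1
    return {
        "volumes": [v["name"] for v in tiered],
        "bin0_gb": bin0,
        "bin1_gb": bin1,
        # Share of tiered volume data that sits in the object store.
        "bin1_percent": 100 * bin1 / total if total else 0.0,
    }

volumes = [
    {"name": "vol1", "bin0_gb": 486.4, "bin1_gb": 1802.24},
    {"name": "fabricpool_test", "bin0_gb": 5048.32, "bin1_gb": 97.66},
    {"name": "vol_plain", "bin0_gb": None, "bin1_gb": None},  # hypothetical
]

summary = summarize(volumes)
print(summary["volumes"])
print(round(summary["bin0_gb"], 2), round(summary["bin1_gb"], 2))
```

The same function works per aggregate or per cluster, depending on which volumes are passed in.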

#2. If you can validate that bin0 will always be the SSD aggregate and bin1 will always be the object store, you could label them:

bin0 = SSD

bin1 = Object Store

or generically:

bin0-name,bin1-name

This translates to the following CLI output, which provides the actual name of the SSD tier or object store tier:

    cluster1::*> volume show-footprint -vserver svm1 -fields bin0-name,bin1-name

    vserver       volume    bin0-name        bin1-name
    ------------- --------- ---------------- -------------
    aitmokcc-svm1 NAS_AUDIT Performance Tier fabricpool

#3. If you capture this info elsewhere, then there is no reason to duplicate it. The primary goal of collecting this data is to be able to easily break down how much data is remaining on SSD and how much is moving to the object store. If we run it regularly, then we can see trending for individual volumes. Maybe someday they could capture this in OCUM...

For sure, the rest of the data has less value to me for my purpose.
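The per-volume trending in #3 could be sketched as a comparison between two collection runs. This is only an outline in Python: the function name `bin1_growth` is hypothetical, and the numbers below are invented for illustration, not real measurements.

```python
def bin1_growth(older, newer):
    """Return (volume, change in GB) pairs, largest object-store growth first.

    older/newer map volume name -> bin1 (object store) footprint in GB.
    Volumes missing from either collection are skipped.
    """
    deltas = {
        vol: round(newer[vol] - older[vol], 2)
        for vol in newer
        if vol in older
    }
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)

# Hypothetical values from two collection runs.
last_week = {"vol1": 1802.24, "fabricpool_test": 97.66}
this_week = {"vol1": 1850.00, "fabricpool_test": 300.00, "new_vol": 10.00}

for vol, delta in bin1_growth(last_week, this_week):
    print(f"{vol}: {delta:+.2f} GB in object store")
```

Sorting by growth puts the volumes that are tiering the most data at the top, which is the list I would want when the object store starts filling up.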

I sent you info about our environment to your LinkedIn to keep it off the public internet.

Thanks for all your help!

Hi Jason.

I'm hitting this again as I have a project where I'm looking at this data (bin0/bin1) and noticed this never made it into NetAppDocs. Any chance commands have been enhanced to make this easier to capture and include in a future release? I regularly run this against my environment to capture a snapshot and it would help to have this info included for trending purposes. Please let me know if I can help or assist in any way.

Thanks!

Mike

Hi Mike,

I added this into NetAppDocs 4.0.0 for live data collections and I've just added the information for ASUP collected data (to be released in 4.1.0). I'm assuming you are pulling the data from live systems? If so, 4.0.0 is available on the Tools download site now (NetApp Support Site - All Tools - NetAppDocs). Please let me know if you have any issues with these new columns and also if you want any of the other related columns added (I didn't figure the others were that useful):

VolumeBlocksFootprint

VolumeBlocksFootprintPercent

VolumeBlocksFootprintBin0

VolumeBlocksFootprintBin0Percent

VolumeBlocksFootprintBin1

VolumeBlocksFootprintBin1Percent

TotalFootprint

TotalFootprintPercent

Thanks,

Jason

Jason,

Thanks for this. I don't have rights to get it myself these days, so I'll ask my SE. I've been downloading the hardware-ontap.xml update file from communities, but it looks like that has been removed too.