ONTAP Discussions

ONTAP 9.x root-data-data partitioning discussion

Hi,

I've been testing some root-data-data partitioning setups with ONTAP 9.1 and wanted to share my results, along with a few comments, and get some feedback from the community.

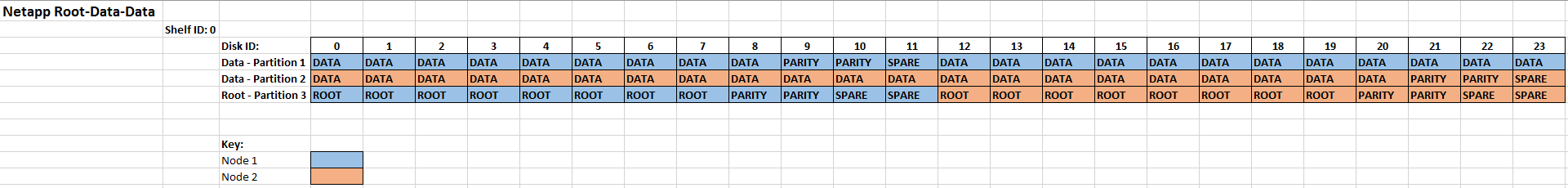

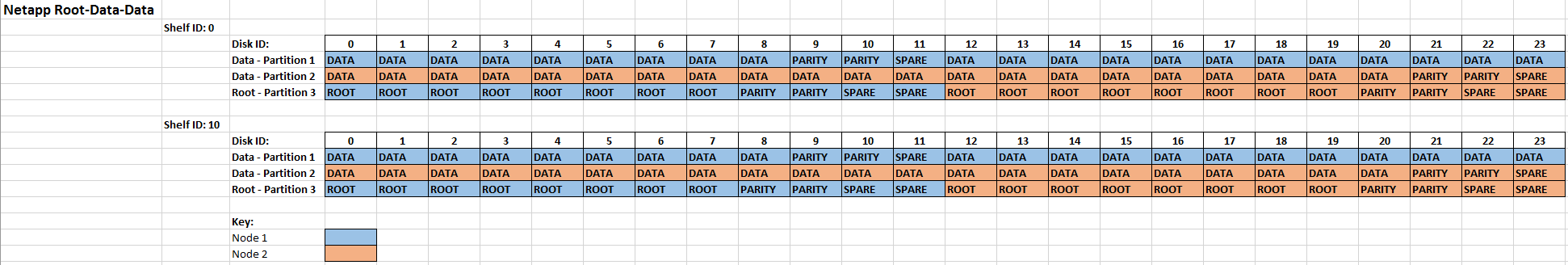

First test scenario - Full system initialization with 1 disk shelf (ID: 0)

The system splits the ownership of the drives and partitions evenly amongst the 2 nodes in the HA pair.

On disks 0 - 11, partitions 1 and 3 are assigned to node 1 and partition 2 is assigned to node 2.

On disks 12 - 23, partitions 2 and 3 are assigned to node 2 and partition 1 is assigned to node 1.

Creating a new aggregate on node 1 with a RAID group size of 23 gives me:

- RG 0 - 21 x Data and 2 x Parity

- 1 x data spare
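The assignment described above can be sketched as a small ownership map. This is only an illustrative model, not ONTAP output: the labels `P1`/`P2` (data) and `P3` (root), and the helper names `build_ownership` and `count_by_node`, are assumptions made for the example.

```python
from collections import Counter


def build_ownership(num_disks=24):
    """Return {(disk, partition): node} for the single-shelf layout in the post.

    Assumption: P1 and P2 are the data partitions and P3 is the root partition.
    """
    ownership = {}
    half = num_disks // 2
    for disk in range(num_disks):
        ownership[(disk, "P1")] = "node1"  # partition 1 goes to node 1 on every disk
        ownership[(disk, "P2")] = "node2"  # partition 2 goes to node 2 on every disk
        # partition 3 follows the half of the shelf the disk sits in
        ownership[(disk, "P3")] = "node1" if disk < half else "node2"
    return ownership


def count_by_node(ownership):
    """Count data and root partitions owned by each node."""
    counts = Counter()
    for (_, partition), node in ownership.items():
        kind = "root" if partition == "P3" else "data"
        counts[(node, kind)] += 1
    return counts


counts = count_by_node(build_ownership())
for key in sorted(counts):
    print(key, counts[key])
```

In this model each node ends up with 24 data partitions and 12 root partitions, which is consistent with the 21 data + 2 parity + 1 spare layout listed above.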

One root partition is roughly 55GB on a 3.8TB SSD

Benefits of this setup:

- root and data aggregates spread their load amongst both nodes

Cons:

- A single disk failure affects the data aggregates of both nodes.

Would it possibly be better to re-assign partition ownership so that disks 0 - 11 are owned entirely by node 1 and disks 12 - 23 entirely by node 2?

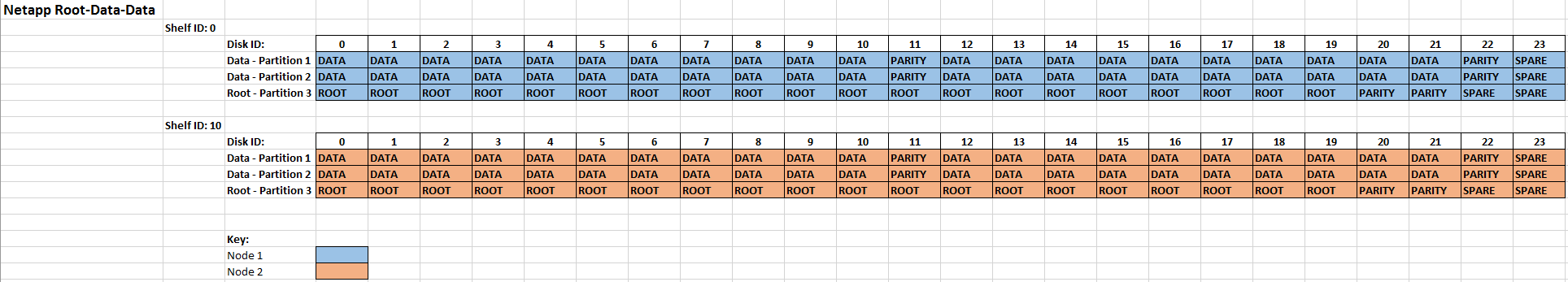

Second test scenario - Full system initialization with 2 disk shelves (ID: 0 and ID: 10) - Example 1

The system splits the ownership of the drives between both shelves with the following assignments:

Node 1 owns all disks and partitions (0 - 23) in shelf 1

Node 2 owns all disks and partitions (0 - 23) in shelf 2

Creating a new aggregate on node 1 with a RAID group size of 23 gives me:

- RG 0 - 21 x Data and 2 x Parity

- RG 1 - 21 x Data and 2 x Parity

- 2 x data spares
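The RAID group counts in the first two scenarios follow from simple division. Here is a minimal sketch, assuming only full groups of the requested size are built and whatever is left over counts as spares; that is what the numbers in this post imply, but real ONTAP placement rules are richer. The function name `plan_raid_groups` is hypothetical.

```python
def plan_raid_groups(partitions, rg_size, parity_per_group=2):
    """Split data partitions into full RAID groups; leftovers count as spares.

    Simplifying assumption: no partial RAID groups are created.
    """
    full_groups = partitions // rg_size
    spares = partitions % rg_size
    groups = [
        {"data": rg_size - parity_per_group, "parity": parity_per_group}
        for _ in range(full_groups)
    ]
    return groups, spares


# Scenario 1: node 1 owns one data partition on each of 24 disks.
print(plan_raid_groups(24, 23))
# Scenario 2: node 1 owns both data partitions on each of its 24 disks.
print(plan_raid_groups(48, 23))
```

With 24 partitions this gives one group of 21 data + 2 parity and 1 spare; with 48 it gives two such groups and 2 spares.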

One root partition is roughly 22GB on a 3.8TB SSD

The maximum number of partitioned disks you can have in a system is 48, so with the 2 shelves we are at maximum capacity for partitioned disks. For the next shelf, we will need to use whole (unpartitioned) disks in new aggregates.

Benefits of this setup I see:

- In the case of a single disk failure or a shelf failure, only 1 node/aggregate would be affected.

Cons of this setup:

- Each node's root and data aggregate workload is pinned to 1 shelf.

It's possible to reassign disks so that 1 partition is owned by the partner node, which would let you split the aggregate workload between shelves. However, in the case of a disk or shelf failure, both aggregates would then be affected.

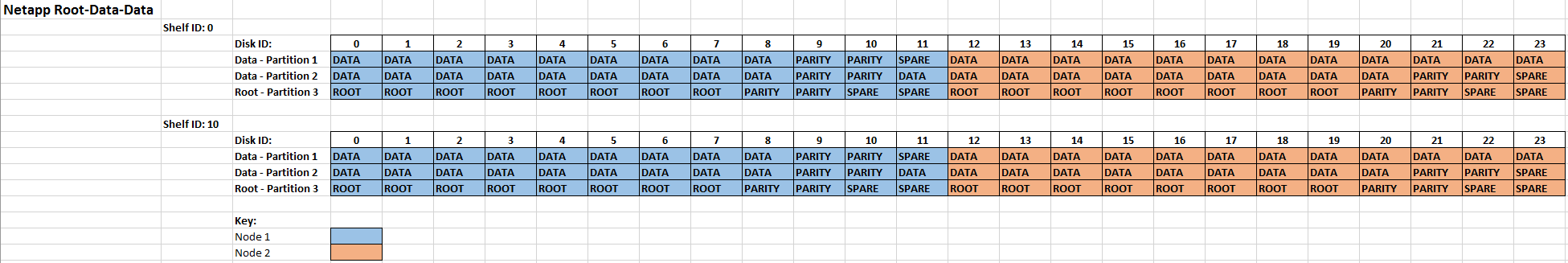

Third test scenario - Full system initialization with 2 disk shelves (ID: 0 and ID: 10) - Example 2

In this example, I re-initialized the system with only 1 disk shelf connected.

Disk auto assignment was as follows:

- between shelf 1 disks 0 - 11, partition 1 and 3 are assigned to Node 1 and partition 2 is assigned to Node 2

- between shelf 1 disks 12 - 23, partition 2 and 3 are assigned to Node 2 and partition 1 is assigned to Node 1

I then completed the cluster setup wizard and connected the 2nd disk shelf.

The system split the disk ownership up for shelf 2 in the following way:

- Disks 0 - 11 owned by node 1

- Disks 12 - 23 owned by node 2

Next, I proceeded to add disks 0 - 11 to the node 1 root aggregate and disks 12 - 23 to the node 2 root aggregate. This partitioned the disks and assigned ownership of the partitions the same as shelf 1.

Because the system was initialized with only 1 shelf connected, it created 55GB root partitions, as opposed to 22GB in my second test scenario above. This means a 55GB root partition is used on every disk across both shelves instead of a 22GB one. So how much space does the smaller root partition actually save when using 3.8TB SSDs?

55GB x 42 (Data disks) = 2,310GB

22GB x 42 (Data disks) = 924GB

Difference = 1,386GB, or 60% less space spent on root partitions (the 22GB layout needs only 40% of what the 55GB layout uses)
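The arithmetic above can be checked with a few lines of Python. This is just a sketch using the figures from this post (55GB and 22GB root partitions, 42 disks); the function name `root_overhead_gb` is made up for the example. Note that the 22GB layout uses 40% of the space of the 55GB layout, so the saving works out to 60%.

```python
def root_overhead_gb(root_partition_gb, disks):
    """Total space consumed by root partitions across the given number of disks."""
    return root_partition_gb * disks


disks = 42  # the post's count of data disks
large = root_overhead_gb(55, disks)
small = root_overhead_gb(22, disks)
saved = large - small

print(f"55GB layout: {large:,}GB")
print(f"22GB layout: {small:,}GB")
print(f"Saved: {saved:,}GB ({saved / large:.0%} of the 55GB layout)")
```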

Benefits of this setup:

- Load distribution amongst shelf 1 and 2

Cons:

- Larger root partition

- single disk or shelf failure affects both aggregates

Fourth test scenario - Full system initialization with 2 disk shelves (ID: 0 and ID: 10) - Example 3

Following on from my third test scenario, I re-assigned the partitions so that all partitions on disks 0 - 11 are owned by node 1 and all partitions on disks 12 - 23 are owned by node 2.

Benefits of this setup:

- 1 disk failure only affects 1 node root and data aggregate

- equal load distribution amongst the shelves.

Cons:

- Larger root partition

- 1 shelf failure will affect both nodes
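To compare the failure domains of the two-shelf scenarios side by side, here is a rough sketch that models which nodes own partitions on each disk. It is an illustration under stated assumptions, not a statement of how ONTAP behaves: shelves are indexed 0 and 1 (rather than the real shelf IDs 0 and 10), disks are 0 - 23, and the function names are hypothetical.

```python
def owners(scenario, shelf, disk):
    """Return the set of nodes owning any partition on the given disk.

    Scenario numbers follow the post; shelves are indexed 0 and 1 here.
    """
    if scenario == 2:
        # each node owns one whole shelf
        return {"node1"} if shelf == 0 else {"node2"}
    if scenario == 3:
        # every disk carries partitions owned by both nodes
        return {"node1", "node2"}
    if scenario == 4:
        # disks 0-11 belong to node 1 and 12-23 to node 2, on both shelves
        return {"node1"} if disk < 12 else {"node2"}
    raise ValueError(f"unknown scenario: {scenario}")


def nodes_hit_by_disk_failure(scenario):
    """Largest number of nodes touched by any single-disk failure."""
    return max(len(owners(scenario, s, d)) for s in range(2) for d in range(24))


def nodes_hit_by_shelf_failure(scenario):
    """Largest number of nodes touched by losing one whole shelf."""
    worst = 0
    for s in range(2):
        affected = set()
        for d in range(24):
            affected |= owners(scenario, s, d)
        worst = max(worst, len(affected))
    return worst


for scenario in (2, 3, 4):
    print(scenario, nodes_hit_by_disk_failure(scenario), nodes_hit_by_shelf_failure(scenario))
```

In this model, scenario 2 keeps both disk and shelf failures confined to one node, scenario 3 exposes both nodes to either kind of failure, and scenario 4 confines a disk failure to one node but not a shelf failure, which lines up with the pros and cons listed for each setup.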

I'm interested to hear feedback on the above setups. Which ones do you prefer, and why?

Also feel free to add additional comments or setups that are not listed above.

If I'm going with ADP..

First scenario: This would be my choice if I plan to add more disks/shelves in the future. That way only one or two aggregates have a weird disk size, and all the remaining ones use the same size.

Third scenario: This would be my choice if I'm sure I'm not planning to add more disks/shelves to that aggregate in the next 2 to 3 years.

Shelf failure: Yes, it's an important factor, but in my 18+ years of experience I have never heard of or experienced a complete shelf failure on both module paths (though I'm sure it has happened to some).

A couple of other points..

If using SSDs, don't add more than 2 shelves in a loop.

With a RAID-TEC aggregate, reconstruction time is significantly lower.

Great blog entry from @davidrnexon to start things off. @robinpeter, talk to us about not adding more than 2 SSD shelves in a loop: if there is a fantasy huge budget 😉 and an AFF A700 with tons of SSD shelves, can we configure 20 loops or more and still have plenty of ports for FC and 10/40Gb NICs? Thanks.

- If I get my hands on a big AFF, I want to use all the tools for the drives: RAID-TEC, ADP, and root-data-data.

@robinpeter we actually had a customer who had a chassis failure in their 2000 series last week. It took the whole storage system down, affecting 500+ staff, with a 5-6 hour turnaround for parts and an engineer to replace the chassis. I had never heard of this before, but unfortunately these really bad situations do happen 😞

@xiawiz I doubt you will fit 20 loops in any system. Also, you wouldn't run RAID-TEC with SSDs; it's more for the larger SATA drives, in which case you would be looking at the 8200 series.

Thank you so much for explaining this.

We recently purchased an AFF-A200 and we noticed that in the aggregate/disk information area, the usable size was half of the physical size. Absolutely no one has been able to explain this until now.

Great info.

Hi TimmyT, that's excellent. Glad you found this post useful.

Good stuff.

As you were re-initializing the arrays, did you have to delete the partitions first, or just do a regular 4a?

It depends 🙂

Is this an existing system with ADP already?

- If it has ADP and you wish to re-configure the disk layouts, then everything will be removed: volumes, aggregates, partitions, etc.

- If it has ADP and you just wish to re-initialize, meaning remove all volumes, aggregates and data, then you can just perform a re-initialize from the boot menu.

- If you are not using ADP and you wish to do so, then you need to remove all volumes, aggregates and data, and remove ownership from all the drives.

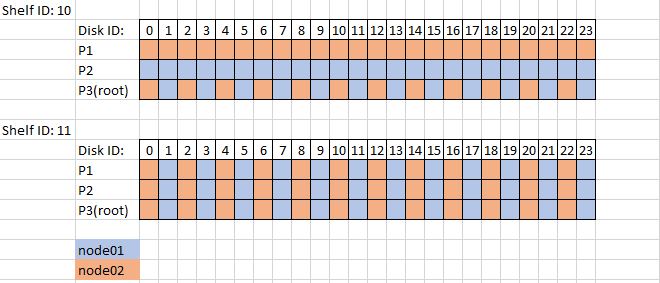

It is an A200 w/ 2 shelves (internal + 1 224) and ADP, but judging by the root partition size (55GB), it looks like it was configured first with a single shelf. Playing around on Synergy, a 2 shelf config should have the 22GB root partition (like you got in scenario 2). This would be my preference. I kicked off a 4a and it did partition the 2nd shelf drives, but I'm thinking that because I did not remove all the existing partitions first, they ended up being 55GB. I'm going to follow this procedure and re-initialize:

https://library.netapp.com/ecmdocs/ECMP1636022/html/GUID-CFB9643B-36DB-4F31-95D4-29EDE648807D.html

Here is how it assigned the partitions, which I thought was a little odd.

Yes, that's correct. It will partition the new shelf but keep the root partition size the same, which in your case is 55GB.

If you want to get the partition size down, you'll need to remove everything: volumes, aggregates, partitions and disk ownership.

With the 2 shelves plugged in, you can then re-initialize node 1 (keep node 2 at the boot loader until node 1 is finished). It will take half the disks, own them and partition them with the smaller root size. Then you can do the same with node 2.

Are you familiar with the process to remove everything and re-partition?

It should just be that procedure I linked, right?

Yes, that link you posted is the correct procedure

That worked well, root slice is 22GB, config of the 2 shelves matches expected from Synergy. However, I just added a 3rd shelf. My understanding was ADP will only partition 48 disks, but when I created a new aggregate with the new shelf, it partitioned those as well. I did not expect that.

I've read over this procedure several times but haven't been able to find the answer to this question yet: On an HA pair with ONTAP 9.2P1 installed, can this full wipe and re-initialize (remove and recreate all volumes and aggregates and reset disk ownership) be done remotely with only SSH access to the SPs?

Right now, we have a straight-from-the-factory 8200 HA cluster installed at a remote disaster recovery site about 1,500 miles away from where I'm currently sitting. It has high capacity SATA disks in it but was shipped with the first 3 physical disks of each shelf fully delegated to the root partitions. Ideally I'd like to re-initialize this cluster with root-data partitioning to give us back tens of terabytes of usable data space.

I can remotely access cluster management through HTTPS and SSH, each node separately through SSH, and each SP console separately through SSH. I expect the cluster management will go away during full wipe and re-initialize and I wouldn't be surprised if the individual node IP addresses would also get reset, but my hope is that I can maintain a constant SSH connection to the SP consoles and access the respective node's system consoles through the SPs - is this line of thinking correct?

To hedge any cautionary tales, there is literally nothing on this cluster yet other than the cluster root aggregates; no data aggregates, no SVMs, etc. And I have access to open a remote hands case with the colo data center in a worst case scenario if needed.

You are correct, you will definitely lose cluster management and node management access during a wipe, but SP access will survive. I've only had this fail me once: I kicked off a wipe remotely and both nodes started spewing alerts about disk 0a.10.99 (100 disks in a shelf is not an option on any NetApp shelf I'm aware of), and I had to go onsite and kick it.

I didn't realize R/D partitioning was supported on 8200s but it looks like starting w/ 9.2 it is. https://library.netapp.com/ecm/ecm_download_file/ECMLP2492508 p41.

Good luck.

Hi John, yes you can do it all remotely via the service processor. As Eric mentioned, the SP won't be reset to factory default.

Following up on my issue from before, per support, the 3rd shelf was partitioned because when I created the second aggregate I didn't explicitly specify the disks to use. There were two partitioned spares per controller still available, as well as 12 'whole' disks. In the GUI, I chose the disk type to be the latter, but for whatever reason, it chose to use the partitioned spares first, and in order to make the raid group the same disk type, it had to partition the whole disks. Weird I thought.

Easy fix though, blew away the aggregate, blew away the partitions, and created new aggrs via command line explicitly picking the 11 disks I wanted.

Hi,

One question I have: can you assign all data partitions to a single node and put them into a single aggregate (i.e. to either have an active/passive node setup, or to just have all the ADP disks on one node while other shelves are used on the other node)?

This means that the single data aggregate would have 2 raidgroups: 23 x data1 partitions and 23 x data2 partitions.

Is this supported?

Cheers,

John

@jherlihy1 Hi, yes, this is fully supported.

cDOT 9.3 and above: does anyone want to summarize the FAS system restrictions related to Flash Pools and the related SSD drives with ADP, including all possible RAID sizes, configs and restrictions? Thanks.

- This page under NetApp support / docs looks very promising for options: https://library.netapp.com/ecmdocs/ECMP1636022/html/GUID-8284CB30-5BC5-4D10-B0AA-AA8F8DAA752E.html

Hi @xiawiz

You have lots of options available, and it's a bit tricky to find the best fit across performance, current size, growth, flexibility and maybe resiliency, now that 3-parity RAID groups are available.

I summarized something here with references that you can maybe use.

Gidi