VMware Solutions Discussions

FAS2240 limited switch connections question

Hi all,

I have a dilemma about the following situation:

head1-fas2240-e1a(10GbE)-->switch1-10GbEport

head2-fas2240-e1a(10GbE)-->switch2-10GbEport

So I have only one link between the first controller and the first switch, and one between the second controller and the second switch. Both switches are stacked. The question is: when one of the switches or controllers fails, will the surviving controller take over the IP of the failed one? If so, how long would the takeover take, and how would it affect my ongoing iSCSI connections? (There will be 2 vSphere hosts connected to both switches, with 2 NICs dedicated to iSCSI using MPIO.)

Thanks in advance for any answers!

Hello,

Aren't the 10GbE cards dual-port?

By the way, if you need a takeover during an interface failure event, you should set these options on both controllers:

options cf.takeover.on_network_interface_failure on

options cf.takeover.on_network_interface_failure.policy all_nics

... and you should enable nfo on all interfaces:

ifconfig <interface_name> nfo

Don't forget to add the line above to the /etc/rc file so that this change persists across reboots.

See you,

Nascimento

NetApp - Enjoy it!

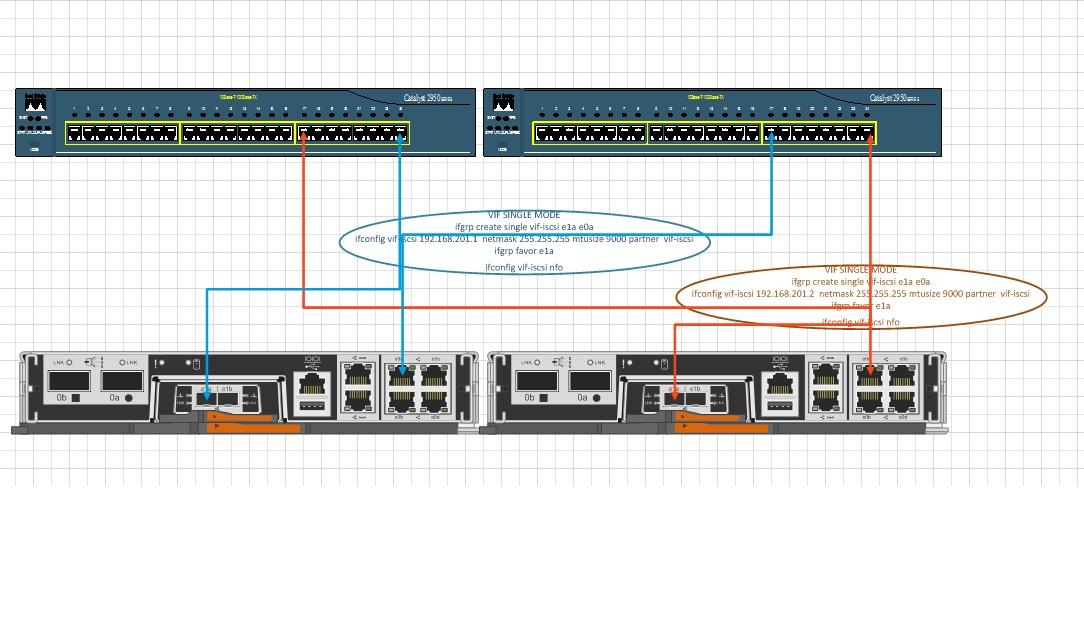

Good idea. NFO is a good fit if you want to handle switch failure. With NFO, the link has to be down for 60 seconds before a takeover is triggered, and the takeover itself then takes a little more time. A controller failover is fast: most of the time it completes within 60 seconds or less (even 30 seconds or less). There are no published numbers on failover times that I know of, but they fall within the timeout window for iSCSI, FCP and NFS. Make sure to put the "partner" parameter on each ifconfig line, with the name of the partner interface on the other controller, so that the IP moves over on failover.
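As a rough sketch of what that looks like in /etc/rc, assuming the e1a ports from the original post (the IP addresses and netmask below are made up for illustration and must be replaced with your own):

```
# /etc/rc fragment on head1 (hypothetical address)
# "partner e1a" names the interface on head2 that takes over this IP;
# "nfo" marks the interface for negotiated failover.
ifconfig e1a 192.168.10.11 netmask 255.255.255.0 partner e1a nfo

# /etc/rc fragment on head2 (hypothetical address)
ifconfig e1a 192.168.10.12 netmask 255.255.255.0 partner e1a nfo
```

Keep in mind that nfo only has an effect if cf.takeover.on_network_interface_failure is also turned on.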

The mezzanine card option for the 2240 is a dual-port SFP+ NIC with ports 1a and 1b. If you have the switch ports, I would set up at least a single-mode ifgrp, so that a port failure can fail over within the controller before having to fail over to the other controller. You mentioned MPIO with single ports on the NetApp: are you going with 2 NICs on vSphere and 1 on the NetApp? You could go with 2 on each and have 2 separate MPIO paths (in that case you wouldn't need an ifgrp, since the host does the MPIO and the extra links provide the resiliency).

Thanks, both of you.

Yes, the mezzanine card is dual-port, but I only have one 10GbE port on each switch. Following your suggestion, maybe it's a better idea to create a single-mode vif consisting of one 10GbE port and one 1GbE port, with the 10GbE port as the favored link (there are 4 GbE ports on each controller and I have many free ports on the switches). I attached the configuration; if I missed something, give me a tip.
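The attached configuration is not reproduced here. Purely as an illustration, a single-mode group like the one described might be sketched as follows. The group name ifgrp1, the choice of e0a as the 1GbE member, and the address are assumptions; "vif" is the older name for what current Data ONTAP releases call an ifgrp.

```
# /etc/rc fragment (hypothetical names and address)
# Single-mode group: only one member carries traffic at a time.
ifgrp create single ifgrp1 e1a e0a
# Prefer the 10GbE port whenever its link is up.
ifgrp favor e1a
# The IP lives on the group; the partner is the matching group on the other head.
ifconfig ifgrp1 192.168.10.11 netmask 255.255.255.0 partner ifgrp1 nfo
```

With this layout a failure of the 10GbE link moves traffic to the 1GbE port on the same controller, and a takeover is only needed if both links are lost.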

If you don't need your 1 GbE ports for anything else, I would put the 4x 1 GbE links in a multimode ifgrp. Then create a second-level ifgrp using this channel and the 10 GbE link. If the 10 GbE link fails, this potentially gives you more bandwidth than a single 1 GbE link, especially when you have a lot of clients connecting to the filer.
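A minimal sketch of such a two-level layout, under several assumptions: the group names and address are invented, the 1GbE ports are taken to be e0a through e0d, and the switch ports for those four links are configured as a static trunk. I'm not certain whether a second-level group may mix a physical port with an ifgrp, so this sketch wraps the 10GbE port in its own one-link multimode group first.

```
# /etc/rc fragment (hypothetical names and address)
# First level: four 1GbE links aggregated, balanced by IP address.
ifgrp create multi mvif1g -b ip e0a e0b e0c e0d
# First level: the single 10GbE link wrapped in its own group.
ifgrp create multi mvif10g -b ip e1a
# Second level: single-mode group over the two first-level groups.
ifgrp create single svif0 mvif10g mvif1g
# Prefer the 10GbE side whenever it is up.
ifgrp favor mvif10g
ifconfig svif0 192.168.10.11 netmask 255.255.255.0 partner svif0 nfo
```

Whether the aggregated 1GbE side actually delivers more than one link's worth of throughput depends on how many distinct client addresses the load balancing can spread across the members.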

I have a similar setup, but with two 10GbE ports on each switch. The switches are HP ProCurve 2910al. I am looking for suggestions on making the best use of these connections for maximum bandwidth utilization and failover support.