VMware Solutions Discussions

Question about SMVI and VMDK located on alternate NFS datastore

Per technical report TR-3749 page 78, it specified the following:

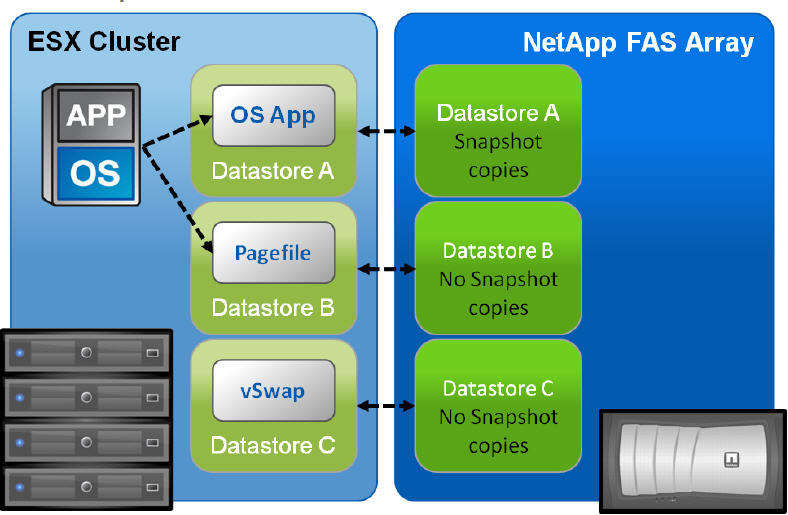

OPTIONAL LAYOUT: LOCATE VM SWAP/PAGEFILE ON A SECOND DATASTORE

Each VM creates a swap or pagefile that is typically 1.5 to 2 times the size of the amount of memory configured for each VM. Because this data is transient in nature, we can save a fair amount of storage and/or bandwidth capacity by removing this data from the datastore, which contains the production data. To accomplish this design, the swap or pagefile of the VM must be relocated to a second virtual disk stored in a separate datastore on a separate NetApp volume. Figure 70 shows a high-level conceptual view of this layout.

In the above scenario, it appears you can attach a VMDK on another datastore (Datastore B) without accumulating snapshots against it. I'm assuming the report is talking about SMVI versus native NetApp snapshots, since it references this throughout the article. This is my issue.

We have a VM that is located on a production datastore. We have nightly backups scheduled via VSC (SMVI) against this datastore and the VMs in it. We noticed larger than usual snapshots and figured out the cause was a "spool" folder for an HA product we use on one of the VMs. This folder has dynamic data that can inflate our snapshots by as much as 40-50 GB daily. So with the above in mind, we created a new datastore and created a new VMDK for this VM on it. At this point, no VSC backup job targets the new datastore. The problem is that we are seeing nightly snapshots against the new datastore anyway. This would basically be the same scenario as above, with the exception that we are not relocating the pagefile, but data instead.

Am I wrong to assume this shouldn't happen?

Actually, it will happen. The default behavior of VSC is to back up (generate a NetApp snapshot for) each datastore that holds part of a VM. So if the VM is in Datastore A and you have a VSC job scheduled for Datastore A, but the VM also has a VMDK on Datastore B, both will get snapped.

However, if you edit the job, click on Spanned Entities, and deselect the datastore you don't want snapped, you are good to go.

One unfortunate thing is that you will have to do this every time you deploy a VM in this fashion...
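
To make the default behavior easier to reason about, here is a minimal sketch of the selection logic described above. It is only a model for illustration, not VSC code or a VSC API: the function name `datastores_to_snapshot`, the `vm_disks` mapping, the `deselected` parameter, and the VM names are all hypothetical.

```python
def datastores_to_snapshot(job_datastore, vm_disks, deselected=()):
    """Model which datastores one backup job would snapshot.

    job_datastore: the datastore the job is scheduled against.
    vm_disks: hypothetical mapping of VM name -> set of datastores holding its VMDKs.
    deselected: spanned datastores unchecked in the job (assumed to behave
                like the "spanned entities" setting described in the post).
    """
    spanned = set()
    for datastores in vm_disks.values():
        # A VM with a disk on the job's datastore pulls in its other datastores.
        if job_datastore in datastores:
            spanned |= set(datastores) - {job_datastore}
    return {job_datastore} | (spanned - set(deselected))


# Hypothetical inventory: one VM spans both datastores, one does not.
vm_disks = {
    "ha-vm": {"Datastore A", "Datastore B"},
    "other-vm": {"Datastore A"},
}

# Default: the spanned datastore is snapped too.
print(sorted(datastores_to_snapshot("Datastore A", vm_disks)))
# After deselecting Datastore B in the job:
print(sorted(datastores_to_snapshot("Datastore A", vm_disks, deselected={"Datastore B"})))
```

In this model the first call returns both datastores and the second returns only Datastore A, which matches the behavior described in the post.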

Keith

Thanks!! That should do the trick!
