VMware Solutions Discussions

VMware NFS HA & multipathing without LACP

Hi all,

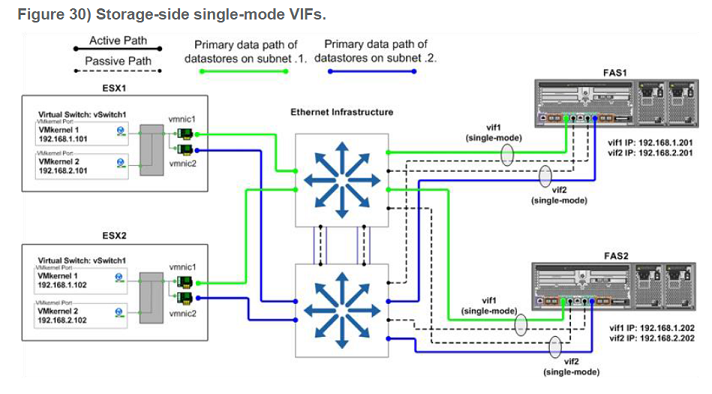

In a LAN environment without cross-stack LACP (EtherChannel) functionality, can NFS load balancing use two stand-alone ports on each NetApp controller, each on a different subnet?

I found this in TR-3749:

*If* we remove the vifs on the NetApp side, so that only two stand-alone ports are used per controller, what happens on the ESX host when, let’s say, the “green” port on FAS1 goes down? Is it business as usual, with the “blue” path being used and the datastore keeping connectivity? Or does it lose it?

Thanks,

Radek

No. An NFS datastore is tied to an IP address. If this address is unavailable, you cannot access the NFS server. The only, rather crude, workaround is to fail over the controller when the port loses connectivity (negotiated failover, NFO).

Or switch to clustered Data ONTAP (cDOT), which implements transparent IP address failover between multiple physical ports.

Hi Andrey,

Many thanks for your response.

Two things still puzzle me:

- If we ignore the NetApp side for a moment, in the picture above there would be no link redundancy between the ESX host and the switches? (Just two NICs on two different subnets.)

- This joint post about NFS from Vaughn Stewart and Chad Sakac seems to suggest that the vmkernel routing table may deliver failover capability between multiple NFS subnets:

Further thoughts?

Thanks,

Radek

I do not see anything about failover in that post, sorry. What they say is that you may need to explicitly configure a route to the datastore to get around the single default route. As long as you use a single interface per link, this configuration is not highly available from the point of view of a single ESXi server. But nothing prevents you from adding more interfaces to each subnet and pooling them.
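To illustrate why one interface per subnet gives no redundancy on the host side, here is a minimal Python sketch. It is only a toy model of subnet-based interface selection, not actual vmkernel code: the port names, addresses and the `egress_ports` helper are made up for the example, and default-route handling is deliberately left out.

```python
import ipaddress

# Hypothetical vmkernel ports, one per NFS subnet (names and addresses are made up).
VMK_PORTS = {
    "vmk1": ipaddress.ip_interface("192.168.1.10/24"),
    "vmk2": ipaddress.ip_interface("192.168.2.10/24"),
}


def egress_ports(target_ip, link_up):
    """Return the ports that sit on the target's subnet and currently have link."""
    target = ipaddress.ip_address(target_ip)
    return [
        name
        for name, iface in VMK_PORTS.items()
        if target in iface.network and link_up.get(name, False)
    ]


all_up = {"vmk1": True, "vmk2": True}
vmk1_down = {"vmk1": False, "vmk2": True}

# Datastore mounted from 192.168.1.50: only vmk1 is on that subnet.
print(egress_ports("192.168.1.50", all_up))     # ['vmk1']
# When vmk1 loses link, vmk2 is on the wrong subnet, so nothing is left.
print(egress_ports("192.168.1.50", vmk1_down))  # []
```

With a second port added to the same subnet and pooled with the first, the list in the failure case would no longer be empty, which is the point made above.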

> if we ignore NetApp side for a moment, on that picture above there would be no link redundancy between ESX host and switches? (just two NICs on two different subnets)

Well, I can't speak for the TR author, but the picture is titled "Storage side ...", so the ESX side should not be taken as an authoritative guideline. Also, different logical subnets do not by themselves imply different physical broadcast domains (VLANs).

I view it more as a conceptual outline. But I agree that it makes things confusing. You have access to fieldportal, right? Go to the TR and submit a comment ...

If you set up the NFS mount/datastore using hostnames, and each subnet has access to a DNS server that can resolve the hostname to the IP, then yes, that should work. I would test that setup in a lab environment first and, once you have it working, take a maintenance window to implement it on your production systems.

Regards,

Nicholas Lee Fagan

Greetings Nicholas,

I like your brief response.

Would you please also advise on the advantages of using NFS vs. SAN for ESX on a filer?

Thanks in advance + Happy Sunday

Henry

This is always the big debate: NFS, CIFS, iSCSI, or FCP. Most people would say set up a LUN and use FCP for best performance. I say go with what you feel most comfortable and confident setting up and managing.

Regards,

Nicholas Lee Fagan

It depends on your objective. It is easier to build a redundant, failure-tolerant and load-balanced connection to storage using SAN than using NFS. OTOH, NFS is easier to integrate with NetApp features (you have one layer less).

DNS is evaluated during the mount request only, so it does not help when connectivity to the datastore is lost. Even if ESX could transparently remount, it would still mean that anything running off this datastore had crashed. Not to mention that the DNS server has no idea of interface connectivity on ESX, so it can return the same non-working address.

DNS is for load balancing, not for failover.
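A minimal Python sketch of that distinction, under stated assumptions: `RoundRobinDNS` and `NfsMount` are toy classes invented for this example (no real resolver or NFS client is involved), and the hostname `fas1` and the addresses are made up. It only models the behaviour described above, where the name is resolved once at mount time.

```python
import itertools


class RoundRobinDNS:
    """Toy resolver that hands out the addresses of one name in rotation."""

    def __init__(self, addresses):
        self._cycle = itertools.cycle(addresses)

    def resolve(self, hostname):
        return next(self._cycle)


class NfsMount:
    """Toy mount: the server name is resolved once, when the mount is created."""

    def __init__(self, hostname, resolver):
        self.server_ip = resolver.resolve(hostname)

    def read(self, reachable_ips):
        # Later I/O keeps using the address obtained at mount time.
        if self.server_ip not in reachable_ips:
            raise ConnectionError(f"{self.server_ip} is unreachable")
        return "ok"


dns = RoundRobinDNS(["192.168.1.50", "192.168.2.50"])
ds1 = NfsMount("fas1", dns)  # gets 192.168.1.50
ds2 = NfsMount("fas1", dns)  # gets 192.168.2.50, so load is spread

# The port behind 192.168.1.50 goes down; DNS is not consulted again.
reachable = {"192.168.2.50"}
print(ds2.read(reachable))  # ok
try:
    ds1.read(reachable)
except ConnectionError as err:
    print("ds1 failed:", err)
```

The two mounts end up on different addresses, which is the load-balancing effect; the first mount still fails once its address is gone, because nothing re-resolves the name.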