VMware Solutions Discussions

- Re: VMware out of space with thick provisioning

Hi all,

We have a strange problem with one virtual machine on NetApp storage.

This Windows virtual machine has a 2 TB disk attached; the disk holds 300 GB of data and 1.7 TB is free.

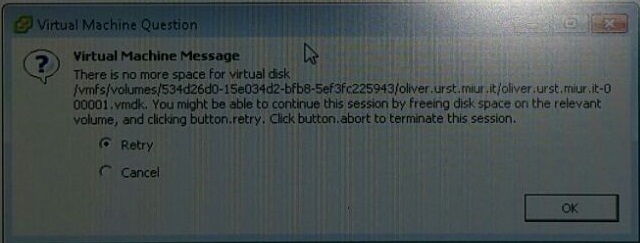

Suddenly this VM stopped responding and VMware notified me that "there is no more space for virtual disk".

This 2 TB disk was created in VMware with thick provisioning, and the LUN on the NetApp is thick provisioned too.

No snapshots were created on VMware or NetApp.

Do you know why this problem occurred? Keep in mind that I'm a NetApp newbie.

Thank you.

Can you provide more information? Data ONTAP version (and whether you're using 7-mode or cDOT), vSphere version, storage protocol, lazy zeroed or eager zeroed thick VMDK. Can you provide a screen shot of the error?

The only time I've seen something similar is when the LUN is thin provisioned and the volume runs out of space, usually because of a snapshot + fractional reserve.

Have you opened a support case?

Here it is

Model: FAS2240-2

NetApp Release 8.1.3 7-Mode

vSphere version: 5.5.0

Storage protocol: FC

VMDK: thick provisioned, eager zeroed

This is the VMware error, sorry for the quality

No support case has been opened yet.

The "-000001.vmdk" on the end denotes that it's a VMware snapshot. Does the datastore have free space as reported by VMware?

The original VMDK will be thick provisioned, but the snapshot will be thin provisioned and can consume up to the same amount of space as the original VMDK. If you have deduplication turned on for the NetApp volume, it will still show free space (from the perspective of the NetApp) because it will dedupe all the extra zeros in the EZT VMDK, but vSphere can't see that freed/returned space.
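As a rough illustration of the sizing point above, here is a minimal Python sketch that estimates the worst-case space a datastore needs when every thick VMDK also carries snapshot delta disks that may each grow to the size of the base disk. The function name, the `snapshots_per_disk` parameter, and the 2048 GB datastore size are assumptions for illustration only; they are not taken from the environment described in this thread, and real delta disks add a small amount of metadata overhead that is ignored here.

```python
def worst_case_usage_gb(vmdk_sizes_gb, snapshots_per_disk=1):
    """Upper bound on datastore usage (hypothetical helper).

    Assumes each snapshot delta disk can grow to the full size of its
    base VMDK, and ignores metadata overhead.
    """
    base = sum(vmdk_sizes_gb)
    deltas = sum(size * snapshots_per_disk for size in vmdk_sizes_gb)
    return base + deltas


# Hypothetical example: one 2 TB thick VMDK on a datastore of about the same size.
datastore_capacity_gb = 2048  # assumed value, not from the thread
needed_gb = worst_case_usage_gb([2048])
print(f"worst case: {needed_gb} GB, fits: {needed_gb <= datastore_capacity_gb}")
```

Under these assumptions, a datastore sized to hold only the thick VMDK has no headroom for a backup snapshot, even when the guest file system is mostly empty.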

Andrew

First of all, thank you for the quick answer.

I'll try to describe our environment better.

This Windows virtual machine has two disks on two different datastores, both on SAS NetApp storage, but on two different LUNs.

The first disk, with the OS (80 GB), is on LUN 1; the second disk (2 TB), with the Exchange storage, is on LUN 2.

This virtual machine has two Acronis backup jobs: the first one backs up the OS disk and the second one backs up the storage disk. Both jobs create a snapshot during the backup.

When the error occurred I moved the Exchange storage disk to SATA NetApp storage (thin provisioned) and now I can't reproduce the issue, but the problem occurred on the storage disk.

I'm sure because there are many VMs on LUN 1, whereas LUN 2 was dedicated to the Exchange storage, and only this VM stopped responding and showed me this error.

When the error happened I opened the VM snapshot manager and found no snapshots, and I was unable to expand LUN 2 because I don't have any more space.

To answer your question: on the NetApp, LUN 2 is 100% used, and in VMware the datastore on LUN 2 was 100% used.

Your post explains a lot, but something is still not clear to me, especially because I will have to move the storage back to SAS with only 2 TB free on the NetApp, so I'm a bit worried that the error can happen again.

I would like to know whether it's better to use thin or thick provisioning on the NetApp, and likewise on VMware, and whether I should reserve some empty space.

Thick or thin provisioning is purely a matter of preference. Each has its own positives and negatives:

- Some positives for thin provisioning - VMs rarely, if ever, actually consume all of the HDD space assigned to them. This means that thick VMDKs contain a large amount of empty space which, if thin provisioned, could be used by other VMDKs. This means you can fit more VMs into the same amount of space on your datastore(s).

- Some negatives for thin provisioning - Overcommitment can be an issue for two reasons: 1) you have a higher density of VMs in the same datastore which means more IOPS demands, and 2) if the datastore runs out of space all of the VMs are affected.

- Some positives for thick provisioning - No capacity concerns...what you see is what you have. Previously (before VAAI) there were performance concerns when using VMDKs that are not EZT, however that has been mostly resolved.

- Some negatives for thick provisioning - Lots of wasted capacity.

Usually I tell people that the choice comes down to one thing: monitoring. If you have the monitoring in place, and the ability to react to it, then thin provisioning is not an issue. When the datastore hits your predetermined threshold you can begin to migrate VMs out of it using Storage vMotion.
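To make the threshold idea concrete, here is a small Python sketch of such a check. It is only an illustration: the function name, the datastore names, the capacity figures, and the 20% default threshold are all assumptions, and in practice the numbers would come from whatever monitoring tool you already use rather than a hard-coded dictionary.

```python
def datastores_below_threshold(datastores, min_free_fraction=0.20):
    """Return names of datastores whose free fraction is below the threshold.

    `datastores` maps a name to (capacity_gb, free_gb). Results are sorted
    with the fullest datastore first. Hypothetical helper for illustration.
    """
    flagged = []
    for name, (capacity_gb, free_gb) in datastores.items():
        free_fraction = free_gb / capacity_gb
        if free_fraction < min_free_fraction:
            flagged.append((free_fraction, name))
    return [name for _, name in sorted(flagged)]


# Made-up inventory: (capacity in GB, free space in GB).
inventory = {
    "sas_lun1": (1024, 400),
    "sas_lun2": (2048, 0),
    "sata_thin": (4096, 500),
}
for name in datastores_below_threshold(inventory):
    print(f"{name}: below free-space threshold, consider migrating VMs")
```

Datastores flagged by a check like this are the candidates for migrating VMs elsewhere before they fill up.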

If you are out of storage capacity, then you must account for the length of time it takes to procure more storage and add it to the system. This was a significant issue for some organizations I've worked with. They were only able to purchase equipment once a year, so their stance was to thick provision everything, and when they were out of capacity they stopped giving out LUNs. This meant that everyone always got the capacity they were promised, and they didn't have to worry about running out of capacity and affecting their users, but their storage efficiency was very, very poor.

You should always reserve some free space in your datastores. I think VMware's recommendation is 20%. This allows snapshots to be taken without significant fear of running out of space. Again, this comes down to monitoring and how quickly you can react. If the datastore has 10% free space and is filling up at a rate of 1% every 5 minutes due to a snapshot, then you must have the personnel in place to respond within 50 minutes at any time.
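The response-time arithmetic above can be written as a short Python sketch. The function name and parameters are hypothetical, and it assumes the fill rate stays constant, which a growing snapshot will not necessarily do.

```python
def minutes_until_full(free_percent, fill_percent_per_interval, interval_minutes):
    """Estimate minutes until a datastore is full at a constant fill rate."""
    if fill_percent_per_interval <= 0:
        raise ValueError("fill rate must be positive")
    return free_percent / fill_percent_per_interval * interval_minutes


# The example from the post: 10% free, filling at 1% every 5 minutes.
print(minutes_until_full(10, 1, 5))  # 50.0
```

Treat the result as an upper bound on the time available to respond, since the fill rate can increase while a backup or other write-heavy job is running.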

TR-4333 has some great information on thin provisioning in VMware environments starting on page 140. You might want to give that a read if you have a moment.

Hope that helps!

Andrew

Thanks for your help!